Moving your AI workloads from a managed API to your own hardware is a bold move for any engineering team. You get total control over your data and eliminate the unpredictability of third-party pricing, but you trade that convenience for a massive operational headache. When you self-host a Large Language Model (LLM), you aren't just managing a piece of software; you're managing a resource-hungry beast that can crash your cluster or eat through your GPU memory in seconds if you aren't watching it. The core problem is that traditional server monitoring doesn't tell you why a model is hallucinating or why token generation suddenly slowed to a crawl. You need a specialized approach to LLMOps that blends classic site reliability with AI-specific metrics.

| Focus Area | Critical Priority | Expected Outcome |
|---|---|---|
| Monitoring | GPU Cache & Token Throughput | Prevent OOM (Out of Memory) crashes |
| SRE Strategy | Human-in-the-loop RCA | Faster incident resolution |
| Infrastructure | Kubernetes ServiceMonitors | Automated metric scraping |

The Infrastructure Layer: Monitoring vLLM and Kubernetes

If you're running models on Kubernetes, you're likely using a serving framework like vLLM. vLLM is a high-throughput serving engine that makes LLMs usable in production, but it's a black box unless you hook into its Prometheus metrics. You can't just look at CPU and RAM; you need to track the specific ways LLMs consume resources.

Start by setting up a Kubernetes ServiceMonitor to automatically scrape the following vLLM metrics. If you ignore these, you'll find your pods restarting in a crash loop without knowing why:

- vllm_num_requests_running: This tells you exactly how many requests are being processed right now. If this spikes while throughput drops, you have a bottleneck.

- vllm_num_requests_waiting: This is your queue. A growing queue means your model is under-provisioned or your batch size is too small for the incoming load.

- vllm_gpu_cache_usage_perc: This is the most dangerous metric. If your GPU cache utilization hits 100%, you're courting Out-Of-Memory (OOM) errors.

- vllm_avg_generation_throughput_toks_per_s: The real-world measure of speed. This tracks how many tokens are actually being generated per second across all users.
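
As a rough illustration of the ServiceMonitor step, the sketch below builds a manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. It assumes the Prometheus Operator is installed (it provides the `monitoring.coreos.com/v1` ServiceMonitor resource) and that the vLLM Service exposes a named port serving `/metrics`. The resource name, namespace, `app` label, and port name are all hypothetical placeholders.

```python
import json


def build_service_monitor(name, namespace, app_label, port_name="http", interval="15s"):
    """Return a ServiceMonitor manifest (Prometheus Operator CRD) as a dict."""
    return {
        "apiVersion": "monitoring.coreos.com/v1",
        "kind": "ServiceMonitor",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Select the Service that fronts the vLLM pods (label is an assumption).
            "selector": {"matchLabels": {"app": app_label}},
            "endpoints": [
                {
                    "port": port_name,  # must match a named port on the Service
                    "path": "/metrics",
                    "interval": interval,
                }
            ],
        },
    }


# Hypothetical names; replace with the ones used in your cluster.
manifest = build_service_monitor("vllm-metrics", "llm-serving", "vllm")
print(json.dumps(manifest, indent=2))
```

Generating the manifest from code keeps the scrape interval and labels in one place if you run several model deployments, but a hand-written YAML file with the same fields works just as well.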

Beyond the hardware, you should integrate tools like OpenLIT or OneUptime. These aren't just for infrastructure; they help you track the actual quality of the response and the latency from the moment a user hits "Enter" to the moment the first token appears.
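
To make the "Enter to first token" latency concrete, here is a minimal sketch that times any token stream. It is not tied to OpenLIT, OneUptime, or a particular client library: `measure_stream` and `fake_stream` are hypothetical names, and the fake stream simply sleeps to imitate a model that takes a moment before emitting tokens.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(tokens: Iterable[str]) -> Tuple[float, float, int]:
    """Consume a token stream; return (time_to_first_token, total_time, token_count)."""
    start = time.perf_counter()
    ttft = None
    count = 0
    for _ in tokens:
        if ttft is None:
            # First token has arrived: record the latency the user actually feels.
            ttft = time.perf_counter() - start
        count += 1
    total = time.perf_counter() - start
    # If the stream was empty, fall back to the total time.
    return (ttft if ttft is not None else total, total, count)


def fake_stream() -> Iterator[str]:
    """Stand-in for a streaming response from a model server."""
    time.sleep(0.05)  # simulated prefill delay before the first token
    for tok in ["Hello", ",", " world"]:
        yield tok
        time.sleep(0.01)  # simulated per-token decode time


ttft, total, n = measure_stream(fake_stream())
print(f"TTFT={ttft:.3f}s total={total:.3f}s tokens={n}")
```

In practice you would pass the streaming iterator returned by your client in place of `fake_stream()` and export the two timings as metrics.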

Why Autonomous Root Cause Analysis Isn't Ready Yet

There is a tempting idea floating around: why not use an LLM to monitor your LLM? The dream is an AI agent that sees a spike in errors, analyzes the logs, and fixes the issue while the SRE sleeps. However, real-world data shows this isn't happening yet. A 2026 study by ClickHouse tested advanced models, including GPT-5, on the task of autonomous Root Cause Analysis (RCA) using OpenTelemetry data.

The experiment involved creating synthetic anomalies (such as ramping up to 1,000 users and toggling feature flags) to see if the AI could pinpoint the failure. The result? Even the most powerful models couldn't consistently beat a human SRE. They often went off track, required constant guiding questions, and struggled to connect the dots between disparate signals in a complex Kubernetes environment.

The lesson here is clear: use LLMs as assistants, not replacements. An LLM is great for summarizing a 10,000-line log file or drafting an incident report, but the actual decision of what is broken should stay with the engineer. The most effective workflow today is using an LLM to query an observability stack via an MCP server, allowing the SRE to ask, "Show me all pods that restarted in the last 10 minutes and summarize the error patterns," rather than asking the AI to "fix the system."

Evolving the SRE Role for the GenAI Era

SREs used to worry about disk space and load balancers. Now, the job description includes understanding Retrieval-Augmented Generation (RAG) pipelines and context window limits. LLMOps is not just "MLOps with a bigger model"; it's a fundamental shift in how we think about system reliability.

In a modern cloud-native setup, LLMs are starting to take on operational roles that go beyond answering user queries. We're seeing them used for:

- Log Interpretation: Instead of writing a thousand regex patterns to catch errors, an LLM can parse container events and pod logs to identify the root cause of a crash loop.

- Policy Tuning: LLMs can analyze traffic patterns and suggest better autoscaler thresholds or resource requests for your GPU nodes, reducing wasted spend.

- Smart Remediation: When a known error pattern appears (e.g., a specific CUDA driver failure), the LLM can trigger a pre-approved recovery script to restart the node.
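
The "pre-approved recovery script" idea in the last item can be sketched as a plain allowlist: only failures that match a known pattern map to a runbook, and everything else goes to a human. The patterns and runbook names below are hypothetical examples, not a catalogue of real CUDA failures.

```python
import re
from typing import Optional

# Hypothetical allowlist: regex for a known failure -> name of a pre-approved runbook.
KNOWN_FAILURES = [
    (re.compile(r"CUDA error: unspecified launch failure", re.I), "restart-gpu-node"),
    (re.compile(r"CUDA out of memory", re.I), "restart-pod"),
]


def pick_runbook(log_line: str) -> Optional[str]:
    """Return the pre-approved runbook for a known failure, or None to escalate."""
    for pattern, runbook in KNOWN_FAILURES:
        if pattern.search(log_line):
            return runbook
    return None  # unknown failure: hand it to the on-call engineer


print(pick_runbook("RuntimeError: CUDA out of memory. Tried to allocate 2.00 GiB"))
print(pick_runbook("connection reset by peer"))
```

Keeping the mapping in code rather than letting the model choose a command means the LLM can at most select from actions someone has already reviewed.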

This shift requires SREs to move from being "operators" to "orchestrators." You aren't just keeping the lights on; you're tuning the brain of your infrastructure.

The Future of AI-Native Kubernetes Automation

We are entering the era of "AI-native" infrastructure. The CNCF has been documenting a move toward integrating LLMs directly into the Kubernetes control loop. While many of these integrations are still in the early stages, they represent the next leap in operational efficiency.

Keep an eye on these three specific capabilities:

- Autopilot Scaling: This goes beyond simple CPU-based scaling. It uses a mix of ML and LLMs to predict workload patterns and adjust replicas before the lag even hits the user.

- Smart Sizing: Instead of guessing if you need 16GB or 32GB of VRAM, Smart Sizing uses historical data to vertically scale pods, optimizing for both performance and cost.

- Pod Recovery AI: This is a specialized agent that analyzes failure events like CrashLoopBackOff, diagnoses the specific failure, and suggests the exact configuration change needed to fix it.
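
To illustrate the general idea behind sizing from historical data, the sketch below picks a VRAM size from past peak usage. It is only an illustration under stated assumptions, not how any particular product works: the nearest-rank percentile, the 20% headroom, and the list of available GPU sizes are all hypothetical choices.

```python
import math
from typing import Optional, Sequence


def recommend_vram_gib(
    peak_usage_gib: Sequence[float],
    percentile: int = 95,
    headroom: float = 1.2,
    sizes: Sequence[int] = (16, 24, 32, 48, 80),
) -> Optional[int]:
    """Pick the smallest size that covers a high percentile of past peaks plus headroom."""
    if not peak_usage_gib:
        raise ValueError("need at least one historical sample")
    ordered = sorted(peak_usage_gib)
    # Nearest-rank percentile: the smallest sample covering `percentile` % of the data.
    rank = max(1, math.ceil(percentile / 100 * len(ordered)))
    target = ordered[rank - 1] * headroom
    for size in sizes:
        if size >= target:
            return size
    return None  # nothing in the catalogue is large enough


history = [11.0, 12.5, 13.0, 12.0, 14.0]  # hypothetical daily peak VRAM use in GiB
print(recommend_vram_gib(history))
```

A recommendation like this is a suggestion for a human to review, in line with the caution about treating these capabilities as experimental.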

For now, these should be treated as experimental. If you implement them, do it in a staging environment first. A hallucinating autopilot can take down an entire cluster faster than a human typo ever could.

Common Pitfalls to Avoid

Many teams make the mistake of treating an LLM like a traditional microservice. It isn't. Here are the most common traps:

First, neglecting the "Cold Start" problem. Loading a massive model into GPU memory takes time. If your Kubernetes probes aren't configured correctly, Kubernetes will think the pod is dead and restart it, leading to a permanent boot loop.
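
One common way to avoid this loop is a Kubernetes startup probe, which holds off liveness and readiness checks until the container has reported healthy once. The kubelet allows roughly `failureThreshold * periodSeconds` seconds for startup, so the sketch below derives `failureThreshold` from an expected model load time. The `/health` path, port 8000, the 240-second load time, and the 1.5x safety factor are assumptions to adjust for your own server.

```python
import json
import math


def startup_probe(expected_load_seconds, period_seconds=10, safety_factor=1.5,
                  path="/health", port=8000):
    """Build a startupProbe whose time budget covers the model load time."""
    budget = expected_load_seconds * safety_factor
    # Startup budget = failureThreshold * periodSeconds, so round up.
    failure_threshold = math.ceil(budget / period_seconds)
    return {
        "httpGet": {"path": path, "port": port},  # assumed health endpoint
        "periodSeconds": period_seconds,
        "failureThreshold": failure_threshold,
    }


# Hypothetical: the model takes about four minutes to load into GPU memory.
probe = startup_probe(expected_load_seconds=240)
print(json.dumps({"startupProbe": probe}, indent=2))
```

The printed fragment belongs under the container spec of the Deployment. Measuring the real load time on your hardware is more reliable than guessing it.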

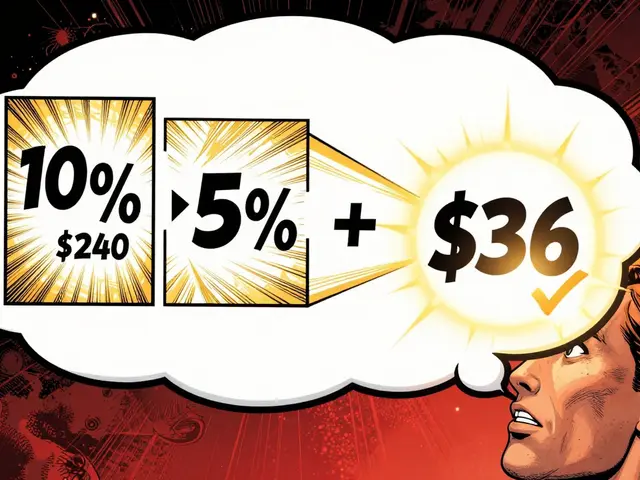

Second, ignoring token usage efficiency. If you're using an LLM to help with SRE tasks, be mindful of the context window. Sending an entire 50MB log file to an LLM is expensive and slow. Use a tool to filter the logs first, then send the relevant snippets for analysis.
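
A minimal sketch of that filtering step, assuming plain-text logs: keep only lines that match an error pattern plus one line of context on each side, and stop once a character budget is reached. The pattern list and the 4,000-character budget are hypothetical and should be tuned to your logs and your model's context window.

```python
import re
from typing import Iterable, List

# Hypothetical markers of interesting lines; extend for your own log format.
ERROR_PATTERN = re.compile(r"\b(ERROR|CRITICAL|FATAL|Traceback|OOM|CUDA)\b")


def select_snippets(lines: Iterable[str], context: int = 1, max_chars: int = 4000) -> List[str]:
    """Return matching lines with surrounding context, capped at max_chars."""
    lines = list(lines)  # loads everything; a streaming variant suits very large files
    keep = set()
    for i, line in enumerate(lines):
        if ERROR_PATTERN.search(line):
            # Keep the match and `context` lines before and after it.
            keep.update(range(max(0, i - context), min(len(lines), i + context + 1)))
    selected, used = [], 0
    for i in sorted(keep):
        cost = len(lines[i]) + 1  # +1 for the newline when joined
        if used + cost > max_chars:
            break  # budget exhausted: stop rather than overflow the prompt
        selected.append(lines[i])
        used += cost
    return selected


log = ["INFO start", "INFO loading", "ERROR CUDA out of memory",
       "INFO retry", "INFO ok", "INFO done"]
print("\n".join(select_snippets(log)))
```

Only the joined snippet, not the raw file, would then go into the prompt.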

Finally, trusting the AI's "fix." Never let an LLM apply a configuration change to production without a human clicking "Approve." The risks of a hallucinated flag or an incorrect resource limit are too high for full autonomy.
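
The approval requirement can be enforced in code rather than by convention. In this sketch, a change proposed by the model carries an `approved_by` field, and nothing is applied while it is empty. `ProposedChange`, `apply_if_approved`, and the patch contents are hypothetical names for illustration; in a real system `apply_fn` would call your deployment tooling.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProposedChange:
    description: str                   # what the LLM suggests, in plain words
    patch: dict                        # the configuration change itself
    approved_by: Optional[str] = None  # filled in only by a human reviewer


def apply_if_approved(change: ProposedChange, apply_fn: Callable[[dict], None]) -> bool:
    """Apply the patch only if a human has signed off; report whether it ran."""
    if not change.approved_by:
        return False  # no approval recorded: do nothing
    apply_fn(change.patch)
    return True


applied = []  # stand-in for a real deployment call
change = ProposedChange("raise memory limit", {"memory": "32Gi"})
print(apply_if_approved(change, applied.append))  # False: not yet approved
change.approved_by = "alice"
print(apply_if_approved(change, applied.append))  # True: applied after sign-off
```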

What is the difference between MLOps and LLMOps?

MLOps focuses on the entire lifecycle of traditional machine learning, including data labeling, feature engineering, and model training. LLMOps is a specialized subset focused on the operational challenges of large-scale models, such as prompt engineering, managing massive GPU memory caches, token-based throughput monitoring, and the complexities of RAG pipelines.

Can LLMs completely replace on-call SREs?

No. Recent evaluations, including tests by ClickHouse, show that LLMs struggle with autonomous root cause analysis in live production environments. They are best used as "copilots" that summarize data and suggest paths for investigation, but the final diagnosis and decision-making must remain with a human engineer.

Which vLLM metrics are the most critical for stability?

The most critical metrics are vllm_gpu_cache_usage_perc (to prevent OOM crashes) and vllm_num_requests_waiting (to identify queuing bottlenecks). Together with vllm_avg_generation_throughput_toks_per_s, these give you a complete picture of whether your hardware is keeping up with demand.
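
As a rough sketch of how these metrics could drive a stability check, the code below parses Prometheus text-format output and flags a nearly full cache or a long queue. The metric names are copied from this article; depending on the vLLM version and scrape pipeline they may appear with a `vllm:` prefix instead, so check your own `/metrics` output. The thresholds, the sample text, and the assumption that cache usage is reported as a fraction between 0 and 1 are illustrative.

```python
import re
from typing import Dict, List

# name, optional {labels}, then the sample value
SAMPLE_RE = re.compile(r"^([a-zA-Z_:][a-zA-Z0-9_:]*)(\{.*\})?\s+(\S+)")


def parse_metrics(text: str) -> Dict[str, float]:
    """Parse Prometheus text format into {metric_name: value} (last sample wins)."""
    values: Dict[str, float] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blanks and HELP/TYPE comments
        match = SAMPLE_RE.match(line)
        if match:
            values[match.group(1)] = float(match.group(3))
    return values


def stability_warnings(m: Dict[str, float], cache_limit: float = 0.9,
                       max_waiting: float = 10) -> List[str]:
    """Return human-readable warnings based on hypothetical thresholds."""
    warnings = []
    if m.get("vllm_gpu_cache_usage_perc", 0.0) >= cache_limit:
        warnings.append("GPU cache nearly full")
    if m.get("vllm_num_requests_waiting", 0.0) > max_waiting:
        warnings.append("request queue is growing")
    return warnings


EXAMPLE = """\
# TYPE vllm_gpu_cache_usage_perc gauge
vllm_gpu_cache_usage_perc{model_name="demo"} 0.95
vllm_num_requests_waiting{model_name="demo"} 3
vllm_avg_generation_throughput_toks_per_s{model_name="demo"} 412.5
"""
metrics = parse_metrics(EXAMPLE)
print(stability_warnings(metrics))
```

In production the same thresholds would more naturally live in Prometheus alerting rules; the script is mainly useful for ad hoc checks against a single pod.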

How should I handle log analysis for self-hosted LLMs?

Avoid sending raw logs directly to an LLM. Instead, use an observability stack (for example, one built on OpenTelemetry) to filter for error patterns and anomalies. Feed these filtered snippets into the LLM to get a summary of the likely root cause, which saves on token costs and reduces AI hallucinations.

Is AI-native Kubernetes automation production-ready?

Features like Autopilot and Pod Recovery AI are emerging and highly promising, but they are not yet a replacement for manual oversight. They should be implemented as semi-automated tools where an SRE reviews the suggested action before it is executed in a production environment.