Ever asked an AI a math problem and got a wrong answer-even when it sounded confident? That’s because most large language models don’t think like humans. They guess. But there’s a simple trick that changes everything: chain-of-thought prompting. It doesn’t require retraining the model. It doesn’t need expensive hardware. It just asks the AI to explain its thinking step by step. And it works-dramatically.

What Is Chain-of-Thought Prompting?

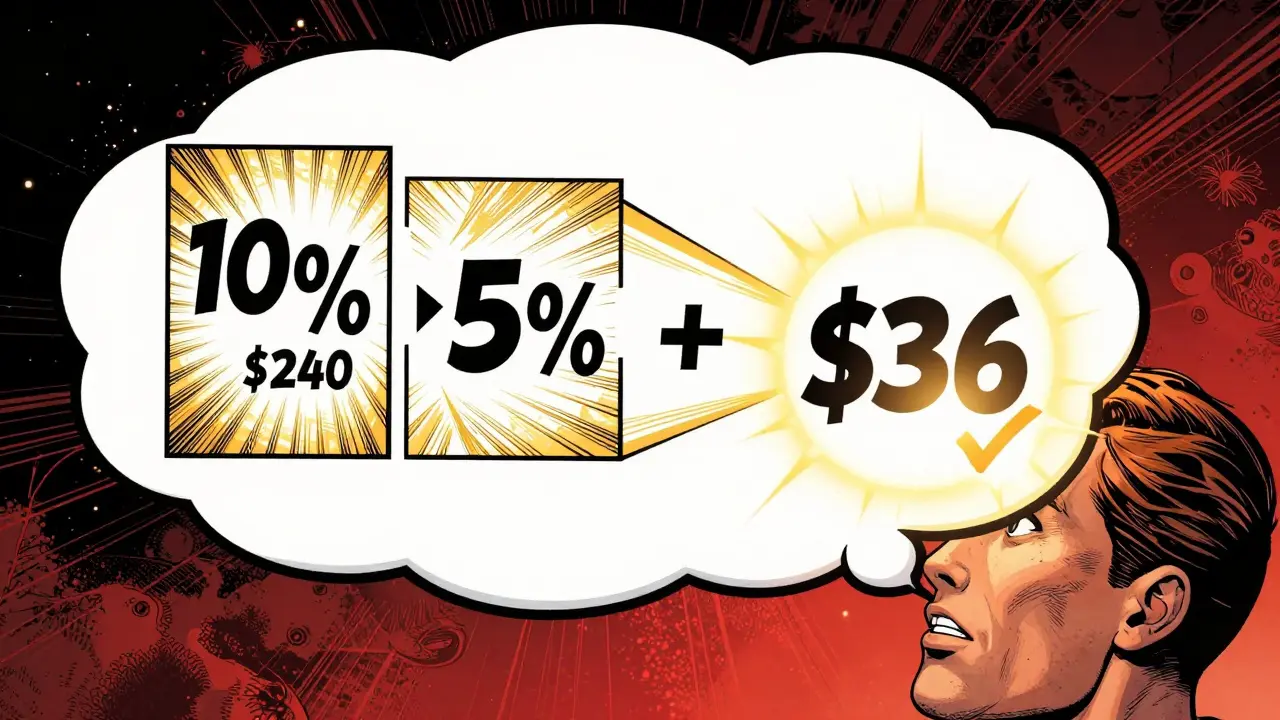

Chain-of-thought (CoT) prompting is a way to guide large language models to solve hard problems by breaking them into smaller, logical steps. Instead of just asking, "What’s 15% of $240?" you say: "Let’s think step by step. First, find 10% of $240. Then, find 5%. Add them together. What’s the result?"

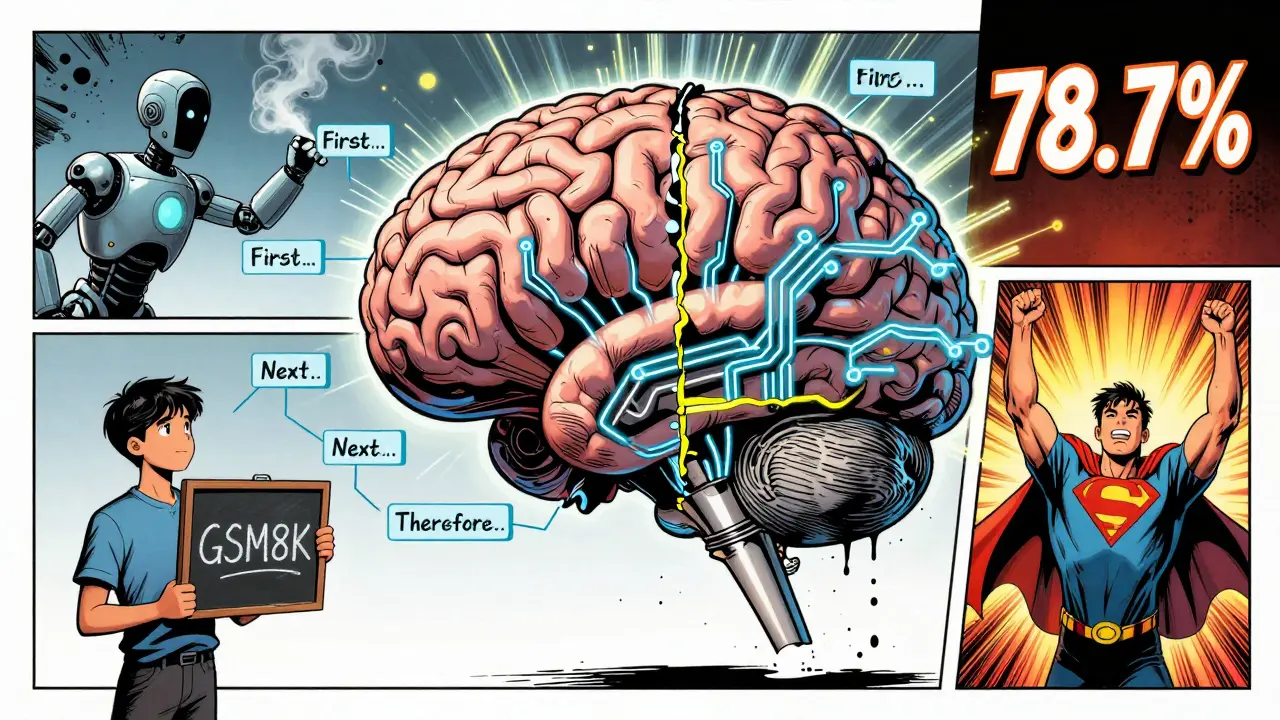

This technique was introduced in a 2022 paper by Google researchers. Before CoT, models struggled with multi-step reasoning. Even with more power, they didn’t improve much. A 118-million-parameter model scored just 3.7% on a simple arithmetic test using standard prompting. With CoT? Still only 4.4%. But when they scaled up to a 540-billion-parameter model, standard prompting hit 17.9%. CoT? 78.7%. That’s not a small jump-it’s a revolution.

The magic isn’t in the model size alone. It’s in how you ask. By forcing the AI to write out its reasoning, you give it a structure to follow. It’s like giving a student scratch paper during a test instead of letting them do everything in their head.

How It Works: The Science Behind the Steps

CoT prompting works because reasoning tasks-like math, logic, or commonsense questions-are naturally language-based. You don’t need numbers to solve them. You need words: "If John has 3 apples and gives 2 to Sarah, how many does he have left?"

The technique uses few-shot learning. You show the model 3 to 8 examples where each example includes:

- The question

- The step-by-step reasoning

- The final answer

For example:

Question: A train leaves Chicago at 2 PM and arrives in St. Louis at 6 PM. How long is the trip?

Reasoning: First, subtract the departure time from the arrival time: 6 PM minus 2 PM equals 4 hours.

Answer: 4 hours.

When you give the model a new question, it follows the same pattern. It doesn’t memorize. It mimics the structure. And because large models have billions of parameters, they can generalize from just a few examples.

Research shows this works best on models with 100 billion parameters or more. Below that, the gains are tiny. Above it? The improvement explodes. On the GSM8K math benchmark, a 540B model using CoT scored 58.1%. Without it? Just 26.4%. That’s more than double.

Where It Shines: Real-World Use Cases

CoT prompting isn’t just for math. It’s powerful wherever reasoning matters:

- Math word problems: GSM8K and MultiArith benchmarks show 30-60% accuracy gains.

- Commonsense reasoning: On CommonsenseQA, accuracy jumped from 66.9% to 76.9%.

- Symbolic logic: Tasks like "Last Letters" (e.g., "What’s the last letter of the last word in this sentence?") saw 40%+ improvements.

- Customer support bots: Companies report 37% fewer reasoning errors when CoT is used to resolve complex refund or policy questions.

- Education AI tutors: 78 of the top 100 EdTech platforms now use CoT to explain solutions step by step to students.

One data scientist on Reddit shared that after adding CoT to their support chatbot, users stopped saying, "That doesn’t make sense," and started saying, "Oh, I get it now." That’s the real win-not just accuracy, but clarity.

Where It Falls Short

CoT isn’t a magic bullet. It has limits.

On simple tasks? It barely helps. On Date Understanding (e.g., "What day is 3 days after Monday?")-a one-step problem-CoT only improved accuracy from 68.9% to 73.4%. That’s barely better than guessing.

And here’s the big risk: reasoning hallucinations. The model might write perfect-looking steps… that are completely wrong. For example:

Question: If a car travels 60 mph for 2 hours, how far does it go?

Reasoning: First, multiply 60 by 2 to get 120. Then, divide by 3 because the car stopped for fuel. So, 120 divided by 3 equals 40.

Answer: 40 miles.

That’s nonsense. The model invented a stop that wasn’t in the problem. It sounded logical. But it was fake. That’s why CoT can’t replace fact-checking. It just makes the reasoning process visible-so you can catch the errors.

Another downside? Speed. CoT prompts generate longer responses. On average, they take 1.8x longer to process. For real-time apps, that’s 220ms extra per query. Not fatal, but costly at scale.

How to Use It: A Practical Guide

You don’t need to be a coder to use CoT. Here’s how to start:

- Start with 4-6 examples. Too few? The model won’t get the pattern. Too many? It gets distracted. Google’s research found 4-6 is the sweet spot.

- Use clear transitions. Words like "First," "Next," "Therefore," and "So," help the model follow the logic. DataCamp’s 2023 tutorial found prompts with these words improved accuracy by 20-30%.

- Test on your data. Try it on 10-20 real questions from your use case. Compare results with standard prompting.

- Watch for nonsense steps. If the model adds assumptions or irrelevant details, tweak your examples to be more precise.

One user on GitHub reported their math accuracy jumped from 60% to 85% after just 2 hours of tweaking CoT prompts. No code. No retraining. Just better questions.

The Future: Beyond Basic CoT

CoT isn’t static. New versions are already here:

- Zero-Shot CoT: Just add "Let’s think step by step" to any prompt. No examples needed. Works surprisingly well-especially on models like Llama 3, which now has CoT built in.

- Self-Consistency: Ask the model to generate 5 different reasoning paths. Pick the answer that appears most often. Reduces errors by up to 15%.

- Auto-CoT: Google’s 2023 update automatically generates the examples. No manual curation needed. Got 67.9% on GSM8K-beating human-curated CoT.

By 2025, Gartner predicts 90% of enterprise AI systems will use some form of chain-of-thought reasoning. Llama 3 already outperforms GPT-3.5 on math tasks using CoT. The trend is clear: if you’re not using CoT, you’re leaving performance on the table.

Final Thoughts: More Than a Trick

Chain-of-thought prompting isn’t about making AI smarter. It’s about making AI transparent. It turns a black box into a whiteboard. You can see the thinking. You can fix the mistakes. You can trust the output.

Yes, it’s slower. Yes, it can hallucinate. But for complex tasks-math, logic, policy, finance-it’s the most effective tool we have right now. And it costs nothing to try.

Next time you ask your AI a hard question, don’t just ask for the answer. Ask it to explain how it got there. You might be surprised what you learn.

Does chain-of-thought prompting work on small language models?

No, not significantly. Research shows CoT prompting only delivers meaningful gains on models with 100 billion parameters or more. Smaller models (under 10B parameters) see less than a 5% improvement over standard prompting. The technique relies on the model’s ability to generalize from examples, which requires massive scale. If you’re using a model like Llama 2-7B or GPT-J-6B, CoT won’t help much.

Can I use chain-of-thought prompting without examples?

Yes, with Zero-Shot CoT. Just add the phrase "Let’s think step by step" to your prompt. This variant works surprisingly well on large models like Llama 3 and PaLM-2, achieving up to 70% of the performance of few-shot CoT. It’s ideal for quick testing or when you don’t have labeled examples. But for maximum accuracy-especially on complex problems-few-shot CoT with 4-6 high-quality examples still outperforms it.

Does chain-of-thought prompting make AI more accurate or just more confident?

It makes it more accurate-if the reasoning steps are correct. But there’s a risk: models can generate fluent, plausible-sounding steps that are wrong. This is called a "reasoning hallucination." So while CoT improves accuracy overall, it doesn’t guarantee truth. Always verify critical outputs. A 2022 study by Emily Bender found that CoT can create false confidence, making users trust flawed logic because it "looks" reasonable.

How much longer does chain-of-thought prompting take to process?

On average, CoT prompts increase response time by 80-120%. For example, if a standard prompt takes 150ms, CoT might take 270-330ms. This is because the model generates 3-10x more tokens. In production, this can add 220ms per query, as reported by a data scientist on Reddit. For real-time apps, this may require optimizing backend infrastructure or limiting CoT to high-value tasks only.

Is chain-of-thought prompting the same as fine-tuning?

No. Fine-tuning changes the model’s weights using training data. CoT prompting doesn’t touch the model at all. It only changes the input prompt. That’s why it’s so popular: you get better reasoning without retraining, re-deploying, or paying for GPU time. It’s a software-level trick, not a hardware-level upgrade.

What’s the difference between CoT and Self-Consistency?

CoT generates one reasoning path. Self-Consistency generates multiple paths (usually 5-10) and picks the most common answer. Think of it as asking the same question five different ways and going with the majority vote. This reduces errors by 10-15% compared to single-path CoT. It’s slower and more expensive, but ideal for high-stakes decisions like medical or financial advice.

Do I need programming skills to use chain-of-thought prompting?

No. You can use CoT in any chat interface-ChatGPT, Claude, Gemini, or even open-source tools like Ollama. Just type your prompt with "Let’s think step by step" and examples. Many non-technical users in education and customer service have adopted it with no coding experience. Tools like LangChain and Promptify now include CoT templates for drag-and-drop use.

Jeanie Watson

March 18, 2026 AT 08:37I tried CoT on a math problem yesterday. Took forever. Got the right answer. But honestly? I could’ve just used a calculator in half the time.

Still, I guess if you’re into watching AI talk to itself, fine. 🤷♀️

Tom Mikota

March 19, 2026 AT 13:06Let’s think step by step-wait, no, let’s not. You just described a glorified autocomplete that writes essays instead of giving answers. Who asked for this? I didn’t. And why are there so many commas here? Seriously, someone proofread this.

Also, 1.8x longer? That’s not ‘costly at scale’-that’s a dealbreaker for any API with more than 10k queries/hour. Just sayin’.

Mark Tipton

March 20, 2026 AT 13:46Let me be clear: this isn’t a ‘trick.’ This is the inevitable convergence of linguistic pattern recognition and emergent meta-cognition in large-scale transformer architectures.

What you’re seeing isn’t ‘reasoning’-it’s the model simulating the cognitive scaffolding of human problem-solving through statistical mimicry, enabled by parameter density exceeding 100B. The fact that this works at all suggests that language itself is the substrate of logic, not merely its vessel.

And yes, hallucinations are inevitable. The model isn’t lying-it’s extrapolating from training data that contains countless flawed human explanations. You’re not fixing reasoning-you’re amplifying noise with structure.

Also, zero-shot CoT works because Llama 3 was trained on synthetic CoT datasets. This isn’t innovation-it’s dataset engineering repackaged as a prompt. Gartner’s prediction? 90% adoption? That’s FUD masquerading as market analysis. You’re being sold a placebo with better grammar.

Adithya M

March 21, 2026 AT 00:38CoT is the only way to go if you’re serious about AI in education. I’ve been using this with my students in India-8th graders now understand algebra because the AI explains it like a tutor, not a oracle.

Yes, it’s slower-but you’re not paying for speed, you’re paying for understanding. And trust me, when a kid says ‘Oh, now I get it!’-that’s worth 10x the latency.

Jessica McGirt

March 21, 2026 AT 03:48Thank you for this thorough breakdown. I appreciate how you distinguished between accuracy and confidence-and highlighted reasoning hallucinations. That’s a critical point often overlooked.

Also, the fact that CoT doesn’t require retraining makes it accessible to educators, non-coders, and small teams. It’s democratizing AI reasoning in a way few techniques have.

One suggestion: maybe add a footnote about prompt injection risks when using CoT in public-facing bots? The structure can be exploited if users manipulate the ‘step-by-step’ format.

Donald Sullivan

March 22, 2026 AT 03:34You people act like this is some breakthrough. Nah. It’s just making the AI do homework before answering. Big whoop.

I’ve been doing this manually for years. Just tell the dumb thing to ‘explain.’ Works fine. No fancy research needed.

Also, 220ms extra? My phone loads memes faster. This isn’t progress-it’s bloat.

Tina van Schelt

March 23, 2026 AT 22:37CoT is like giving your AI a journal. Suddenly, it’s not just spitting out answers-it’s scribbling doodles of logic, crossing out wrong turns, muttering to itself like a genius in a bathtub.

And yeah, sometimes it writes fanfiction instead of math. But when it gets it right? Ohhh, baby. That clarity? That’s the sweet spot where tech meets humanity.

Also, I just used it to explain taxes to my mom. She cried. Not from confusion-from joy. That’s the real ROI.

Ronak Khandelwal

March 25, 2026 AT 11:40CoT is more than a technique-it’s a mindset shift 💭

It reminds us that intelligence isn’t about speed, but depth. Even AI, when given space to breathe through steps, reveals its soul-not just its syntax.

And yes, it’s slower. But isn’t learning? Isn’t understanding? 🌱

Let’s not optimize for efficiency alone. Let’s optimize for meaning. The future isn’t just smart AI-it’s thoughtful AI. And this? This is the first step. ✨