When you ask a retrieval-augmented LLM a question, it doesn’t just pull answers out of thin air. It looks through documents, finds relevant parts, and uses those to build its response. That process is called retrieval. But here’s the problem: if the system pulls the wrong piece of text, or splits a key idea across two chunks, you get hallucinations, wrong answers, or incomplete logic. The way you cut up text before retrieval makes or breaks this whole system. That’s where chunking strategies come in.

What Exactly Is Retrieval Chunking?

Chunking is the act of breaking long documents into smaller pieces so an LLM can process them within its limited context window. Think of it like cutting a book into chapters so you can read one at a time. But unlike a human, an LLM doesn’t understand context unless it’s handed the right pieces. If a sentence about a patient’s allergy gets separated from the diagnosis that follows, the model might say it’s safe to prescribe a drug it shouldn’t. That’s not just a mistake. It’s dangerous.

Early RAG systems used simple methods: take 256 words, move forward one sentence, repeat. It’s fast, but it’s blind. A paragraph about insulin dosing could end mid-sentence, and the next chunk starts with “and monitor glucose levels.” The model sees two unrelated fragments. No wonder hallucinations spike.

Sliding Window Chunking: The Old Standard

Sliding window chunking is still used in 41% of enterprise systems as of early 2025. It’s simple: divide text into fixed-size blocks, usually 256 words, with a small overlap (one sentence). It’s the go-to for developers because it requires no special tools. You don’t need embeddings or a vector database, just a script.
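To make the mechanics concrete, here is a minimal sketch of a fixed-size word window. The function name and the `overlap_words` parameter are illustrative assumptions: the overlap is counted in words as a rough stand-in for the one-sentence overlap described above, and nothing here comes from a specific library.

```python
def sliding_window_chunks(text: str, window_words: int = 256, overlap_words: int = 20) -> list[str]:
    """Split text into fixed-size word windows that share `overlap_words` words.

    Assumption: overlap is measured in words (about one sentence's worth),
    not detected from real sentence boundaries.
    """
    if overlap_words >= window_words:
        raise ValueError("overlap_words must be smaller than window_words")
    words = text.split()
    step = window_words - overlap_words
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + window_words]))
        if start + window_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


# A tiny window makes the blind cut visible: the obligation and the amount
# land in different chunks.
for chunk in sliding_window_chunks(
    "The party shall pay $50,000 within 30 days of signing.",
    window_words=4,
    overlap_words=0,
):
    print(repr(chunk))
```

The cut points depend only on word positions, which is why the method is fast and why it can separate a clause from the figure it refers to.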

But here’s the catch: it doesn’t care about meaning. A legal contract split between “the party shall pay” and “$50,000 within 30 days” becomes two meaningless fragments. Studies show this method achieves only 63.2% semantic coherence. That means more than 1 in 3 retrieved chunks doesn’t preserve the original intent. It’s fast (4.7x faster than semantic methods), but if accuracy matters, it’s a gamble.

Semantic Chunking: When Meaning Matters

Semantic chunking changes the game. Instead of cutting by word count, it uses embedding models like OpenAI’s text-embedding-3-small or SentenceTransformers to turn text into numbers. These numbers represent meaning. When the distance between two neighboring vectors jumps past a threshold, typically 0.65 to 0.75, the system knows: there’s a topic shift here. That’s where it cuts.
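A rough sketch of the cut-on-distance idea follows. It assumes the text is already split into sentences, and it uses `toy_embed`, a hypothetical word-count embedder, so the example runs without a model; in practice you would pass a real embedding function through the `embed` parameter. The 0.7 default threshold comes from the range above.

```python
import math
import re
from collections import Counter
from typing import Callable

Vector = dict[str, float]


def toy_embed(text: str) -> Vector:
    """Stand-in for a real embedding model (assumption): lowercase word counts."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    return {word: float(n) for word, n in counts.items()}


def cosine_distance(a: Vector, b: Vector) -> float:
    """1 - cosine similarity; returns 1.0 when either vector is empty."""
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return 1.0 if norm == 0 else 1.0 - dot / norm


def semantic_chunks(
    sentences: list[str],
    threshold: float = 0.7,
    embed: Callable[[str], Vector] = toy_embed,
) -> list[str]:
    """Start a new chunk whenever adjacent sentences are farther apart than `threshold`."""
    if not sentences:
        return []
    groups = [[sentences[0]]]
    previous = embed(sentences[0])
    for sentence in sentences[1:]:
        vector = embed(sentence)
        if cosine_distance(previous, vector) > threshold:
            groups.append([sentence])    # topic shift: cut here
        else:
            groups[-1].append(sentence)  # same topic: keep extending the chunk
        previous = vector
    return [" ".join(group) for group in groups]
```

Comparing each sentence only to its immediate predecessor is the simplest variant; comparing against a running average of the current chunk is a common alternative when single sentences are noisy.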

This method nails coherence. Benchmarks show 82.4% semantic accuracy. A 12-page research paper gets split at natural breaks: background, methods, results, conclusion. No more half-sentences. Fintech firms using this saw compliance retrieval accuracy jump from 68% to 89%. Reddit users reported hallucinations dropping from 34% to 19%.

But it’s not perfect. It’s slower: it takes 2.3x longer than sliding window. And configuring it? Painful. Developers spend 15 to 40 hours tweaking threshold values, testing chunk sizes, and tuning embedding models. G2 reviews say 63% of negative feedback is about “complex configuration.” Still, it’s the standard for legal, medical, and financial use cases today.

LLM-Based Chunking: The High-Precision Option

What if you let the LLM itself decide where to cut? That’s LLM-based chunking. Models like GPT-4 analyze the text, identify key propositions, summarize sections, and highlight logical boundaries. The result? 91.7% coherence, the highest of any method.

One healthcare company used this to process clinical trial reports. Instead of losing a patient’s medication history across chunks, the model grouped all related info into one clean unit. Retrieval precision hit 92.1%.

But here’s the price tag: $0.045 per 1,000 tokens. For a system processing 10 million tokens a day, that’s $450 daily. NVIDIA’s analysis shows LLM chunking costs 14x more than semantic chunking. It’s powerful, but only for high-value, low-volume documents, like patent reviews or regulatory filings. Most companies can’t justify it.
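The arithmetic is worth running against your own volumes. This small sketch uses the $0.045 per 1,000 tokens figure quoted above; the function name and the 30-day month are assumptions for illustration.

```python
def llm_chunking_cost(tokens: int, usd_per_1k_tokens: float = 0.045) -> float:
    """Cost in USD of passing `tokens` tokens through the chunking model once."""
    return tokens / 1000 * usd_per_1k_tokens


daily = llm_chunking_cost(10_000_000)
print(f"daily:   ${daily:,.2f}")       # daily:   $450.00
print(f"30 days: ${daily * 30:,.2f}")  # 30 days: $13,500.00
```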

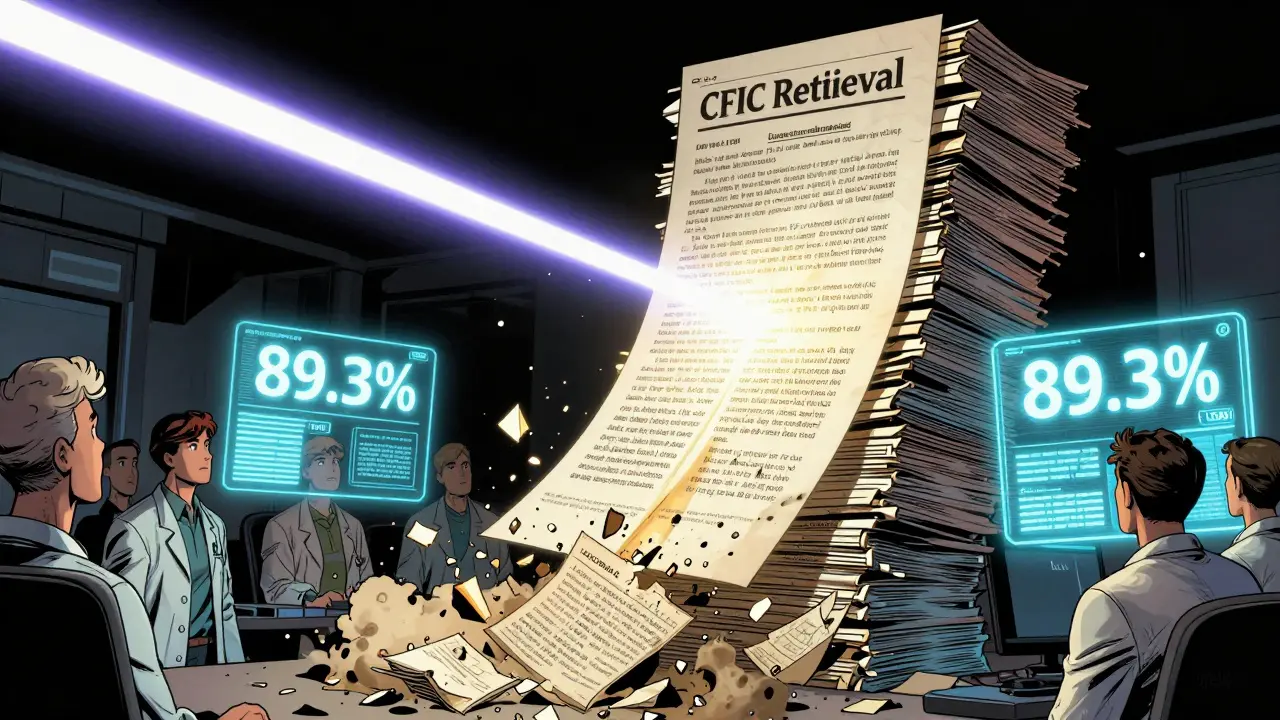

The New Kid: Chunking-Free In-Context (CFIC)

In June 2024, researchers published a paper at ACL that changed everything. Gao et al. introduced Chunking-Free In-Context (CFIC) retrieval. No chunks. No cuts. No segmentation.

Instead of slicing text, CFIC uses transformer hidden states to directly extract the exact evidence needed for a query. It doesn’t look at chunks. It looks at the whole document and pulls out only the relevant spans. Think of it like using a laser instead of scissors.

The results? 89.3% coherence, 37.8% less information bias, and 38% faster than semantic chunking. It avoids the biggest flaw of all chunking methods: splitting related ideas. In tests, 27.4% of traditional chunks contained irrelevant or misleading text. CFIC cuts that out entirely.

It’s not widely adopted yet: only 3.2% of enterprise systems use it as of Q1 2025. But it’s already integrated into 12 major RAG frameworks. Experts like Dr. Emily Chen at Google call it “the first real solution to information fragmentation.” The trade-off? It requires deep technical knowledge. Implementation difficulty is rated 4.9/5. But if you’re building mission-critical systems, this is the future.

Hybrid Approaches: The Real-World Winner

Most successful systems don’t pick one method. They mix them. A healthcare startup we studied used sliding window for clinical notes (fast, simple) and LLM-based chunking for research papers (precision matters). Result? 92.1% retrieval accuracy.

Another company used semantic chunking for legal contracts but added a second pass: Semantic Double Chunk Merging. This recombines nearby chunks that are semantically similar but got split by a transitional sentence. Milvus internal tests showed an 18.4% boost in coherence.
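One way to picture that second pass is the sketch below. It is an illustration of the idea only, not the implementation used in the tests mentioned above: the function names, the Jaccard word-overlap similarity (a stand-in for embedding similarity), and both thresholds are assumptions.

```python
import re
from typing import Callable


def word_overlap(a: str, b: str) -> float:
    """Toy similarity (assumption): Jaccard overlap of lowercase word sets."""
    words_a = set(re.findall(r"[a-z0-9]+", a.lower()))
    words_b = set(re.findall(r"[a-z0-9]+", b.lower()))
    union = words_a | words_b
    return len(words_a & words_b) / len(union) if union else 0.0


def merge_across_transitions(
    chunks: list[str],
    similarity: Callable[[str, str], float] = word_overlap,
    min_similarity: float = 0.3,
    max_bridge_words: int = 8,
) -> list[str]:
    """Rejoin chunk i and chunk i+2 when they are similar and chunk i+1 is a short bridge."""
    merged = []
    i = 0
    while i < len(chunks):
        current = chunks[i]
        # Keep absorbing "bridge + next chunk" pairs while the pattern holds.
        while (
            i + 2 < len(chunks)
            and len(chunks[i + 1].split()) <= max_bridge_words
            and similarity(current, chunks[i + 2]) >= min_similarity
        ):
            current = " ".join([current, chunks[i + 1], chunks[i + 2]])
            i += 2
        merged.append(current)
        i += 1
    return merged
```

The bridge-length limit keeps the pass from swallowing a genuine topic section that happens to sit between two related chunks.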

Forrester’s 2025 study found 57% of enterprises now use hybrid strategies. The pattern? Use fast, simple chunking for high-volume, low-stakes data. Use advanced methods for critical, complex content.

What’s Next?

The market is shifting fast. Semantic chunking adoption jumped from 28% in 2023 to 63% in 2025. Sliding window is fading. By 2027, Gartner predicts 78% of enterprise systems will use context-aware chunking. CFIC will grow, too: NVIDIA and Milvus are building hardware acceleration for it, expected in Q3 2025.

Regulations are pushing this too. The FDA’s 2024 guidance on AI-assisted medical documentation requires “chunking methodologies that preserve clinical context integrity.” That means semantic and CFIC methods aren’t just better. They’re becoming mandatory in regulated industries.

Choosing the Right Strategy

Here’s how to pick:

- Simple queries, high volume, low risk? Sliding window (256-word chunks, 1-sentence stride).

- Legal, medical, or financial documents? Semantic chunking (threshold 0.7, 1536-dim embeddings).

- High-value, low-volume, accuracy critical? LLM-based chunking (GPT-4 or Claude 3).

- Building the next-gen system? Experiment with CFIC. You’ll need a team with vector DB and transformer expertise.
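The checklist above can be expressed as a small routing function, which is also how a hybrid pipeline might pick a chunker per document. This is a sketch: the `DocumentProfile` fields and the strategy labels are made-up names, and CFIC is left out because the list treats it as experimental.

```python
from dataclasses import dataclass

REGULATED_DOMAINS = {"legal", "medical", "financial"}


@dataclass(frozen=True)
class DocumentProfile:
    """Hypothetical description of a document, used only for routing."""
    domain: str              # e.g. "legal", "medical", "financial", "general"
    high_volume: bool        # arrives in bulk, so chunking cost matters
    accuracy_critical: bool  # a wrong answer is costly


def choose_strategy(doc: DocumentProfile) -> str:
    """Map a document profile to one of the strategies from the checklist."""
    if doc.accuracy_critical and not doc.high_volume:
        return "llm_based"       # high-value, low-volume
    if doc.domain in REGULATED_DOMAINS:
        return "semantic"        # threshold 0.7 is the suggested starting point
    return "sliding_window"      # 256-word chunks for everything else


print(choose_strategy(DocumentProfile("legal", high_volume=True, accuracy_critical=True)))
```

Putting the decision in one function makes it easy to log which strategy each document received, which helps when you later compare retrieval quality across strategies.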

And don’t forget: test everything. A 256-word chunk might work for one dataset but fail for another. Developers spend weeks tweaking this. There’s no magic number, only context.

What’s the biggest mistake people make with retrieval chunking?

The biggest mistake is assuming one chunking method works for all data. Using sliding window on legal contracts or research papers guarantees poor grounding. You need to match the method to the content type. A clinical note doesn’t need the same precision as a patent claim.

Does chunking size really matter that much?

Yes. Too small, and you lose context. Too large, and you flood the LLM with noise. Most teams test 5-12 different sizes before settling. The sweet spot? Usually between 128 and 512 tokens, depending on the document structure. Code blocks and tables often need their own chunking rules.

Can I skip chunking entirely?

Yes, and it’s the future. Chunking-Free In-Context (CFIC) retrieval bypasses chunking by directly extracting evidence from full documents. It’s not easy to implement yet, but it eliminates the core flaw of all chunking: artificial fragmentation. If you’re building a high-stakes system, CFIC is worth the investment.

Why is LLM-based chunking so expensive?

Because it uses powerful models like GPT-4 to analyze every document before retrieval. Each 1,000 tokens costs $0.045. For a system handling 1 million documents a month at roughly 1,000 tokens each, that’s $45,000 in processing fees alone. It’s only worth it if accuracy directly impacts revenue or safety, as in drug approval reviews or financial audits.

What’s the easiest way to start improving my LLM grounding?

Switch from sliding window to semantic chunking. Use open-source tools like LangChain or Weaviate. Start with a 256-token chunk size and a cosine similarity threshold of 0.7. Test it on 100 documents. You’ll likely see hallucinations drop by 10-20% within hours. No need to overcomplicate it.

Wilda Mcgee

February 8, 2026 at 18:16

Okay but let’s be real - sliding window chunking is like using a butter knife to do brain surgery. I’ve seen teams waste weeks debugging hallucinations only to realize they’re using 256-word chunks on medical records. Switching to semantic chunking with a 0.7 threshold was a game-changer for us. Hallucinations dropped 22% in two days. No magic, just better context. And hey - if you’re using LangChain, try the SentenceTransformer embedder. It’s free, open-source, and doesn’t make you cry at 2 a.m. 😌

Jen Becker

February 9, 2026 at 09:33

CFIC is just hype. Everyone’s chasing the shiny new thing. Sliding window works fine for 90% of use cases. Stop overengineering.

Ryan Toporowski

February 10, 2026 at 10:34

Love this breakdown! 🙌 I’ve been using hybrid chunking for our legal docs - semantic for contracts, sliding for internal memos. Total game changer. Also, shoutout to Weaviate - their UI is actually usable now. No more CLI nightmares. 💪

Samuel Bennett

February 12, 2026 at 02:20

Wait - you’re telling me GPT-4 costs $0.045 per 1k tokens? That’s a scam. OpenAI’s pricing is a pyramid scheme. And don’t even get me started on ‘semantic chunking’ - embeddings are just fancy word salad. Real engineers use regex and offsets. This whole field is built on hype and PhDs who’ve never deployed a real system.

Rob D

February 13, 2026 at 10:44

CFIC? Nah. We’re not some Silicon Valley startup with a $500k budget. We run on AWS Lambda and a Raspberry Pi. Sliding window is king. And if you think semantic chunking is better, you’ve never tried to debug a 12GB PDF with 37 tables and 89 footnotes. Real-world data doesn’t care about your cosine similarity thresholds. Also - FDA? LOL. They still use fax machines. You think they’re auditing your chunking? Wake up. America runs on simple, dumb, reliable code. Not AI fairy dust.