When you ask an AI chatbot for advice, it remembers your last question. It might use your words to improve its model. It might store your name, your location, your medical symptoms - even if you said "no" to data collection. Most people don’t realize this. And that’s the problem.

Why Consent for LLMs Is Not Like Cookies

For years, websites asked for cookie consent with a simple banner: "Accept all" or "Reject all." It was clunky, but it worked for basic tracking. LLM-powered apps - like AI assistants, customer service bots, or personalized writing tools - need something far more complex. You’re not just letting them place a cookie. You’re giving them your thoughts, your habits, your private conversations. And those inputs can be used to train the model, improve its responses, or even build a profile of you - all without your clear understanding.

Traditional consent tools like OneTrust or Osano were built for cookies and form submissions. They weren’t designed for real-time AI interactions. That’s why 67% of LLM apps still ignore user consent preferences during training, according to MIT’s January 2026 audit. If you say "don’t use my data for training," but the system still feeds your chat into the model’s next update, that’s not compliance. That’s betrayal.

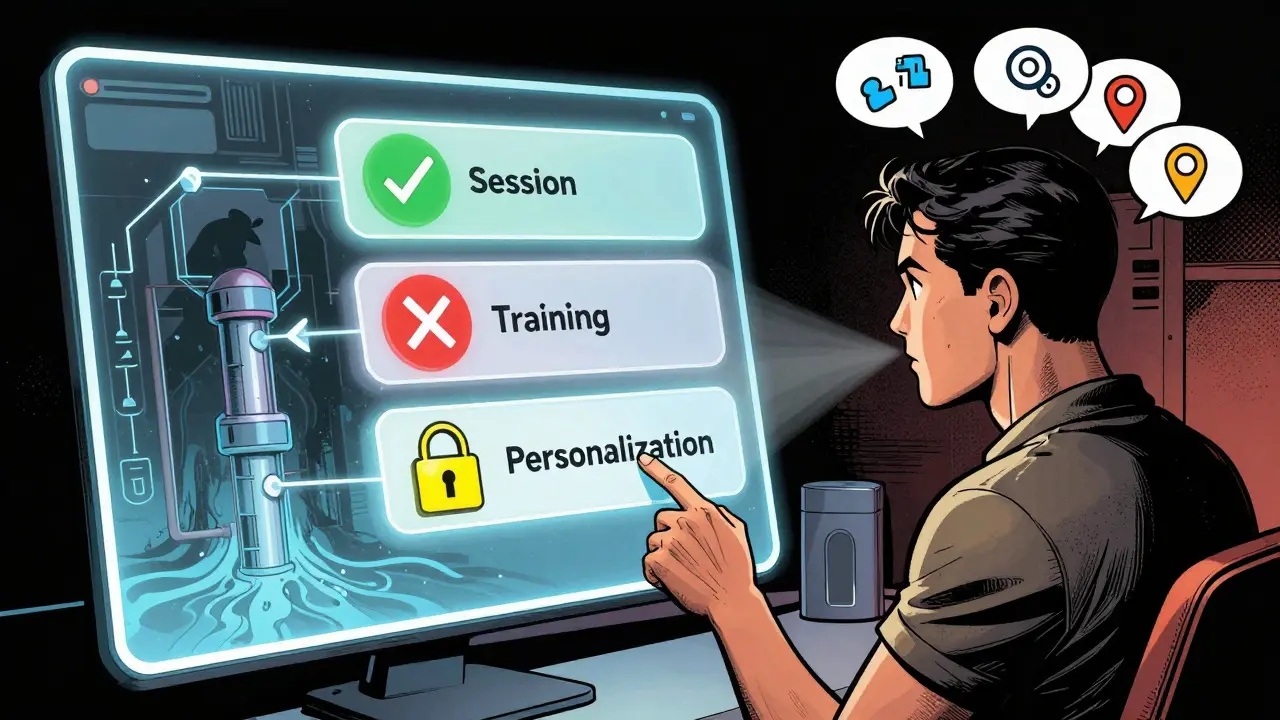

The Three Levels of Consent in LLMs

You can’t just say "yes" or "no" to everything. LLMs need three distinct consent layers:

- Session consent: Is it okay to use your input right now to generate a reply? This is the bare minimum. Most apps get this right.

- Training consent: Can your conversation be used to improve the model? This is where most systems fail. Even if you opt out, your data often slips into training sets.

- Personalization consent: Can your history be saved to make future responses more tailored? This sounds helpful - until you realize your private health details or financial questions are stored indefinitely.

Without these distinctions, consent is meaningless. A single toggle doesn’t cut it. Users need to understand the difference between a quick answer and long-term data use. Microsoft’s Azure AI Consent Framework showed a 42% jump in user understanding when prompts explained these differences in plain language during natural pauses in conversation - not buried in a legal page.
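One way to keep the three layers separate in code is to store each as its own flag rather than a single toggle. The sketch below is only an illustration: `ConsentScope` and `ConsentRecord` are hypothetical names, not part of any vendor framework mentioned here, and every scope defaults to "not granted."

```python
from dataclasses import dataclass
from enum import Enum


class ConsentScope(Enum):
    SESSION = "session"                  # use input to answer right now
    TRAINING = "training"                # use the conversation to improve the model
    PERSONALIZATION = "personalization"  # keep history to tailor future replies


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical per-user consent state; each scope is a separate decision."""
    user_id: str
    session: bool = False
    training: bool = False
    personalization: bool = False

    def allows(self, scope: ConsentScope) -> bool:
        # The enum value matches the field name, so one lookup covers all scopes.
        return getattr(self, scope.value)


# A user who wants answers but no long-term use of their data.
record = ConsentRecord(user_id="u-123", session=True)
print(record.allows(ConsentScope.SESSION))
print(record.allows(ConsentScope.TRAINING))
```

Defaulting every flag to `False` means a missing or half-filled record never grants more than the user actually chose.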

What Happens When Consent Is Ignored

The consequences aren’t theoretical. In late 2025, a healthcare startup using an LLM to triage patient symptoms was fined under GDPR after it was discovered that 14,000 patient conversations were used to train a public model - even though users had checked "no" on the consent screen. The system didn’t block the data from entering the training pipeline. It just ignored the setting.

It’s not just legal risk. Users are angry. Trustpilot reviews for major AI platforms show a 2.8/5 average for consent management - far below the 4.1/5 for regular websites. On Reddit, users complain: "I clicked ‘no’ to data collection but my conversation history still appears in my account." And they’re right to be upset. The European Data Protection Board has already said that training LLMs on non-consented personal data likely breaks GDPR Article 6. But enforcement is still rare.

The Technical Gap

Building consent into an LLM isn’t plug-and-play. You need to integrate checks at three points:

- Before the request: Is the user allowed to use this feature? Check their consent status.

- During processing: If they said "no personalization," strip out names, addresses, and identifiers before sending data to the model.

- After the response: Did they say "delete my data"? Then purge it from logs, caches, and training queues - not just the frontend.
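The three checkpoints above can be sketched as a single request handler. This is a minimal illustration under stated assumptions, not a production design: `Pipeline`, `redact`, and the scope strings are hypothetical, the model call is a stand-in, and the two regexes only catch emails and phone-like numbers (real identifier stripping for names and addresses needs much more).

```python
import re
from dataclasses import dataclass, field

# Deliberately simple patterns for illustration; real PII detection needs far more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace emails and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


@dataclass
class Pipeline:
    """Hypothetical request pipeline with consent checks at three points."""
    consent: dict                                   # user_id -> set of granted scopes
    logs: list = field(default_factory=list)
    training_queue: list = field(default_factory=list)

    def handle(self, user_id: str, prompt: str) -> str:
        scopes = self.consent.get(user_id, set())
        # 1. Before the request: refuse if there is no session consent.
        if "session" not in scopes:
            raise PermissionError("no session consent")
        # 2. During processing: strip identifiers unless personalization is on.
        if "personalization" not in scopes:
            prompt = redact(prompt)
        self.logs.append((user_id, prompt))
        # Only queue for training when the user opted in.
        if "training" in scopes:
            self.training_queue.append((user_id, prompt))
        return f"(model reply to: {prompt})"        # stand-in for a real model call

    def delete_user(self, user_id: str) -> None:
        # 3. After the response: purge every store, not just the frontend.
        self.logs = [r for r in self.logs if r[0] != user_id]
        self.training_queue = [r for r in self.training_queue if r[0] != user_id]


pipeline = Pipeline(consent={"ana": {"session"}})
reply = pipeline.handle("ana", "Email me at ana@example.com about my rash")
print(reply)
pipeline.delete_user("ana")
```

The point of the design is that the training queue is written from the same place that checks consent, so an opt-out cannot be bypassed by a separate export job reading raw logs.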

Most platforms skip steps two and three. Even top vendors like OneTrust and Osano only added experimental LLM modules in late 2025. Their systems still treat AI consent like cookie consent. The result? A 15-25% drop in response quality when strict rules are applied - because the AI can’t use context it needs to be helpful. But that’s not a reason to skip compliance. It’s a reason to build smarter systems.

Real-World Fixes Are Already Here

Some companies are getting it right. Privado AI’s 2025 update added 17 LLM-specific checks - including real-time detection of consent violations across apps and verification that data isn’t slipping into training sets. Osano’s UX team found that users were 37% more satisfied when consent explanations appeared mid-conversation, like: "This chat won’t be saved or used to train the AI. Want to continue?"

And the tech is evolving fast. In December 2025, the IAB Tech Lab released TCF 2.0 - the first consent standard with five new fields just for AI data use. OneTrust is planning a Q2 2026 update called "Contextual Consent for Generative AI," which will explain data usage in natural language during chats. NIST’s January 2026 draft recommends continuous consent verification - not just a one-time click.

But adoption is still slow. Only 28% of enterprises with LLMs have proper consent systems. Financial services and healthcare lead - because they’re forced to. Retail and media lag behind. Why? Because they think they can wait. They can’t.

The Coming Regulatory Wave

The EU’s AI Act, whose obligations for high-risk systems begin to apply in 2026, will require "human-in-the-loop" verification for high-risk AI systems. That means if your LLM handles medical, legal, or financial advice, you’ll need active user confirmation - not passive consent - before processing sensitive data.

California’s Privacy Protection Agency just drafted rules requiring "specific, granular consent for AI training data." That’s a direct hit to the "we anonymize everything" excuse. Anonymization doesn’t work reliably with LLMs. Studies show models can still memorize and regurgitate unique phrases from training data - even if names are removed.

And it’s not just Europe and California. The U.S. federal government is watching. Professor Hoda Eldardiry told the Senate in December 2025: "The current notice-and-consent paradigm fails spectacularly in LLM contexts." She’s not wrong. You can’t ask a user to read a 10-page privacy policy before asking an AI how to treat a rash.

What You Should Do Right Now

If you’re building or using an LLM app, here’s what to do:

- Break consent into three clear options: Session, Training, Personalization. Don’t lump them.

- Explain choices in context: Don’t show a banner. Show a short, clear message during natural pauses in conversation.

- Enforce consent at the API level: Use tools like Privado AI or Osano’s new SDK to block data from entering training pipelines.

- Allow instant deletion: If a user says "delete my data," remove it from logs, caches, and training queues - not just their profile.

- Test your system: Audit your LLM pipeline. Can you prove consent preferences are respected? If not, you’re at risk.
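The audit step in the list above can start as something very small: a check that nothing in the training queue belongs to a user who has not opted in. The sketch below assumes a queue of `(user_id, text)` pairs and a dict of granted scopes per user; both shapes and the function name `audit_training_queue` are hypothetical, so adapt them to whatever your pipeline actually stores.

```python
def audit_training_queue(queue, consent):
    """Return records queued for training whose user has not opted in.

    queue:   iterable of (user_id, text) pairs
    consent: dict mapping user_id -> set of granted scopes
    """
    return [
        (user_id, text)
        for user_id, text in queue
        # Users with no consent record at all are treated as opted out.
        if "training" not in consent.get(user_id, set())
    ]


consent = {"ana": {"session"}, "ben": {"session", "training"}}
queue = [("ana", "my symptoms are..."), ("ben", "rewrite this email")]

violations = audit_training_queue(queue, consent)
print(violations)  # ana's record is flagged; ben's is not
```

Running a check like this on a schedule, and failing the training job when it returns anything, gives you evidence that preferences are respected rather than a policy statement that they should be.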

Don’t wait for a fine. Don’t wait for a lawsuit. Users are already leaving platforms they don’t trust. And regulators are catching up.

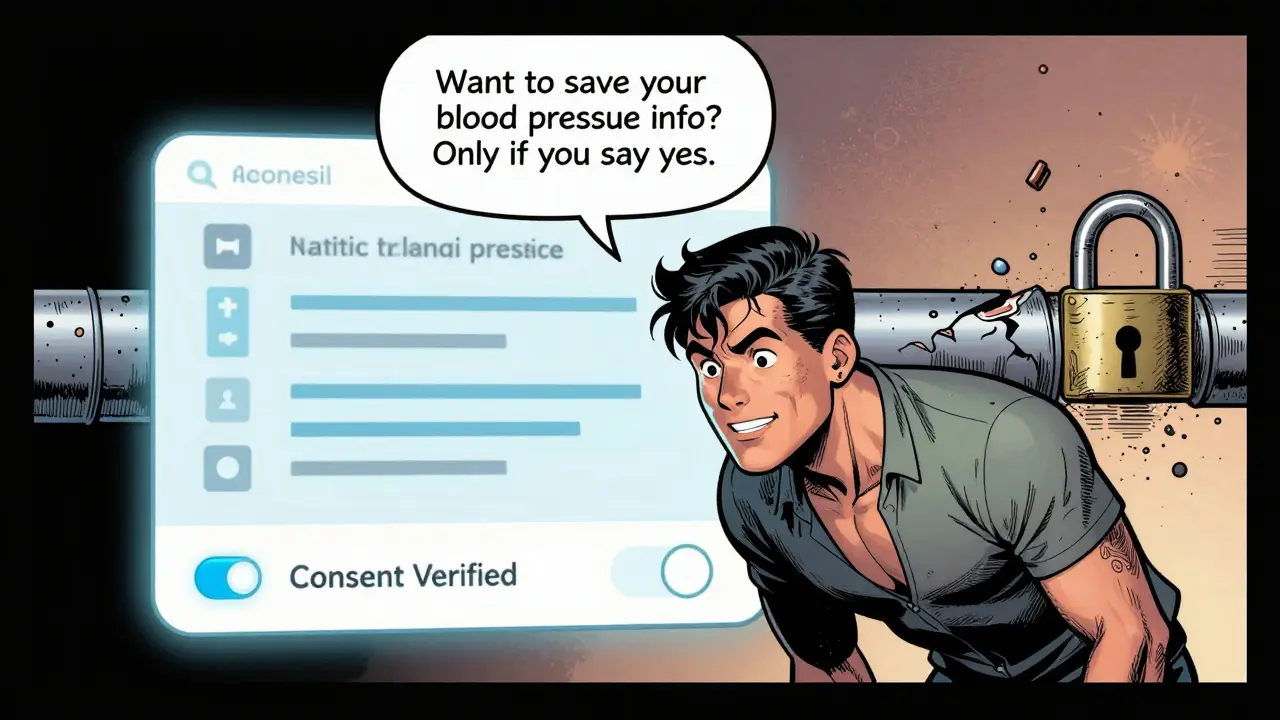

What’s Next?

By 2027, Gartner predicts 60% of leading AI platforms will use conversational consent - where the AI explains data use in real time, like a human would. Imagine this:

"I noticed you mentioned your blood pressure. To help you better next time, I can save this info - but only if you say yes. If you say no, I’ll forget it after this chat. Want to save it?"

That’s not science fiction. That’s the future. And it’s coming fast.

The old model - one checkbox, one click, endless data collection - is dead. The new model is ongoing, contextual, and respectful. If you’re still using the old way, you’re not just non-compliant. You’re out of step with what users expect - and what the law will soon demand.

Can I trust LLM apps that say they "anonymize" my data?

No. Anonymization doesn’t work reliably with LLMs. Studies show models can memorize unique phrases, patterns, or even exact sentences from training data - even after removing names or IDs. If your data was used to train the model, it could still be reconstructed in responses. True privacy requires explicit consent and technical enforcement, not just data scrubbing.

What happens if I opt out of training but the AI still uses my data?

If your consent choice is ignored, the company may be violating privacy laws like GDPR and the upcoming California regulations. You can file a complaint with your local data protection authority. In the U.S., that’s the state attorney general’s office or, in California, the California Privacy Protection Agency. In Europe, it’s your national DPA. Keep screenshots of your consent settings and the chat logs as evidence.

Are there tools that actually enforce LLM consent?

Yes, but they’re still new. Privado AI, Osano’s LLM Consent Verification SDK, and Robust Intelligence’s AI governance platform now include real-time consent enforcement for LLM pipelines. These tools check data before, during, and after processing to block violations. They’re not perfect, but they’re among the few that go beyond cookie banners.

Why do some AI apps ask for consent every time I chat?

Repeated prompts like that lead to what’s called "consent fatigue." They usually appear when companies don’t store your preference properly or use separate sessions for each interaction. Good systems remember your choice across sessions. If you’re being asked repeatedly, it’s a sign the system isn’t built right - not that you need to give consent again.
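Remembering a choice across sessions mostly comes down to storing one decision per user and scope, and only prompting when no decision exists. The sketch below uses Python’s standard `sqlite3` module; the `ConsentStore` class and its table layout are hypothetical. It defaults to an in-memory database for demonstration, so pass a file path if the choices should survive a restart.

```python
import sqlite3


class ConsentStore:
    """Hypothetical store that keeps one row per user and scope."""

    def __init__(self, path: str = ":memory:"):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS consent ("
            "user_id TEXT, scope TEXT, granted INTEGER, "
            "PRIMARY KEY (user_id, scope))"
        )

    def save(self, user_id: str, scope: str, granted: bool) -> None:
        # INSERT OR REPLACE keeps only the latest decision per (user, scope).
        self.db.execute(
            "INSERT OR REPLACE INTO consent VALUES (?, ?, ?)",
            (user_id, scope, int(granted)),
        )
        self.db.commit()

    def get(self, user_id: str, scope: str):
        """Return True/False if the user has decided, None if never asked."""
        row = self.db.execute(
            "SELECT granted FROM consent WHERE user_id = ? AND scope = ?",
            (user_id, scope),
        ).fetchone()
        return None if row is None else bool(row[0])

    def needs_prompt(self, user_id: str, scope: str) -> bool:
        # Ask only when there is no stored decision, including a stored "no".
        return self.get(user_id, scope) is None


store = ConsentStore()
store.save("ana", "training", False)
print(store.needs_prompt("ana", "training"))         # already answered
print(store.needs_prompt("ana", "personalization"))  # never asked
```

Treating a stored "no" as a final answer, rather than as a reason to ask again, is what keeps the prompt from reappearing in every chat.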

Does the EU AI Act apply to me if I’m in the U.S.?

Yes - if your AI app is used by people in the EU. The AI Act reaches providers that place AI systems on the EU market, and systems whose output is used in the EU, regardless of where the company is based. If you serve EU users, assume you need to comply. Many U.S. companies are redesigning their consent flows globally just to avoid legal complexity.