Most large language models (LLMs) today sound smart because they’ve memorized tons of text. But what happens when the facts change? Or when you need answers based on your company’s internal documents, customer support logs, or product manuals? That’s where retrieval augmentation comes in. It’s not about training a new model. It’s about giving your existing open-source LLM a way to look things up, like a super-powered research assistant.

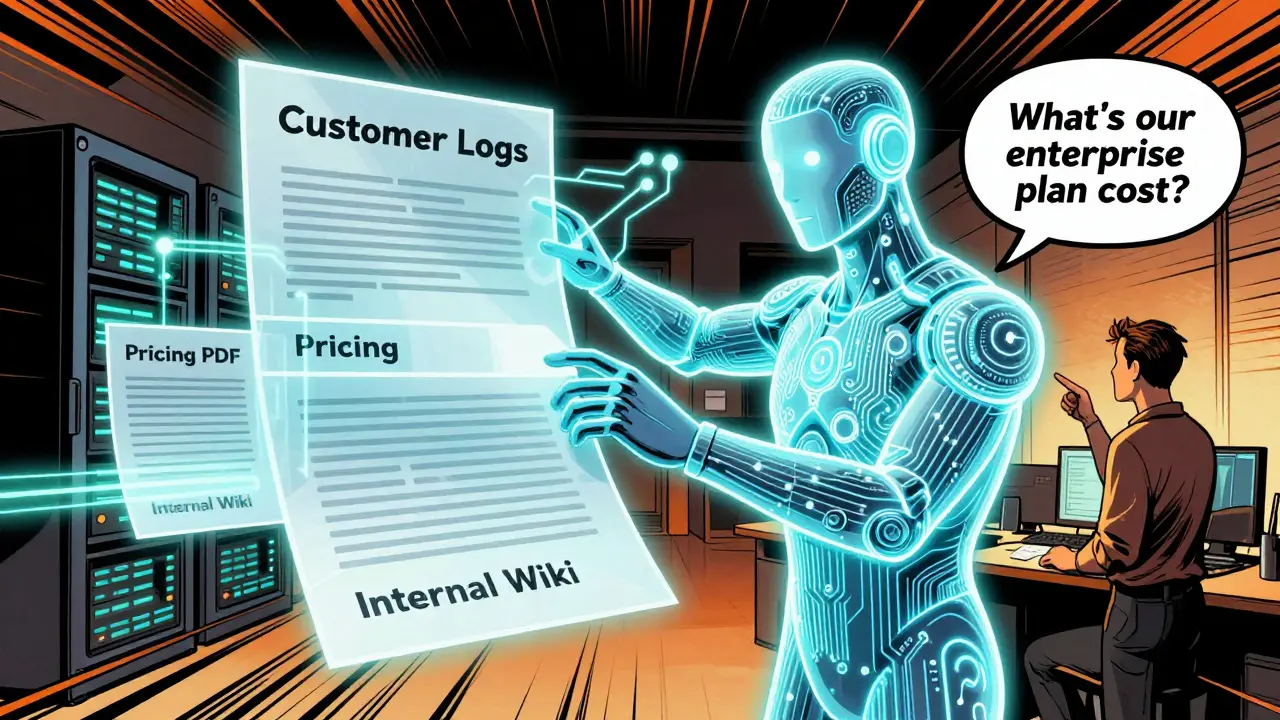

Imagine asking an AI: "What’s the latest pricing for our enterprise plan?" If the model only knows what it learned during training, it might guess. Maybe it’s wrong. Maybe it makes something up. But with retrieval-augmented generation (RAG), it pulls the answer directly from your latest PDF, not from its memory. That’s the difference between guessing and knowing.

How RAG Works: Three Simple Steps

RAG isn’t magic. It’s a three-step pipeline that turns an LLM from a black box into a fact-checking machine.

- Retrieval: When you ask a question, the system first converts it into a numerical vector, a list of numbers that represents what your question means. Then it searches through a database of pre-indexed documents (like your internal wikis, manuals, or even scraped web pages) to find the most similar vectors. Think of it like Google Search, but matching on meaning instead of exact keywords.

- Augmentation: The top 3-5 most relevant snippets are pulled out and added right before your original question. This becomes the new prompt fed to the LLM. For example: "Based on this document about our 2026 pricing tiers, answer the following: What’s the cost of the enterprise plan?"

- Generation: The LLM now has context it didn’t have before. It doesn’t just rely on its training. It uses the retrieved info + its own knowledge to generate a precise, grounded answer.

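This is why RAG cuts down on hallucinations. When the prompt tells the model to stick to the retrieved context, it is far more likely to say "I don’t know" or point to the source you gave it than to invent an answer. It won’t eliminate made-up answers entirely, but the reduction is huge for businesses.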
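The three steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production pipeline: `embed` is a toy bag-of-words stand-in for a real embedding model, `fake_llm` is a hypothetical placeholder for whatever model you actually run, and the documents are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, docs: list[str], k: int = 3) -> list[str]:
    """Step 1: rank documents by similarity to the question, keep the top k."""
    q = embed(question)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def augment(question: str, snippets: list[str]) -> str:
    """Step 2: place the retrieved snippets ahead of the original question."""
    context = "\n".join(f"- {s}" for s in snippets)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say \"I don't know\".\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


def generate(prompt: str, llm) -> str:
    """Step 3: hand the augmented prompt to whatever LLM callable you use."""
    return llm(prompt)


def fake_llm(prompt: str) -> str:
    """Hypothetical placeholder; a real system would call Llama 3, Mistral, etc."""
    return f"(model output for a prompt of {len(prompt)} characters)"


# Invented example documents.
DOCS = [
    "Enterprise plan pricing for 2026 is 149 dollars per seat per month.",
    "The refund policy allows returns within 30 days of purchase.",
    "Office hours are 9am to 5pm on weekdays.",
]

question = "What is the enterprise plan pricing?"
top = retrieve(question, DOCS, k=1)
prompt = augment(question, top)
answer = generate(prompt, fake_llm)
```

Swapping `embed` for a real embedding model and `fake_llm` for a real inference call changes the quality of the results, not the shape of the pipeline.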

Why Open-Source LLMs? Flexibility Over Black Boxes

You could use a paid API like GPT-4 or Claude. But then every snippet you retrieve from your private data has to leave your network and pass through someone else’s servers. And out of the box, those models only know what they were trained on: mostly public internet text, frozen at a cutoff date.

Open-source LLMs let you run the model on your own servers. Common choices include:

- Llama 3, a family of open-weight models released by Meta in 2024, with context windows up to 128K tokens (from Llama 3.1 onward) and fine-tuned versions optimized for reasoning and coding.

- Mistral 7B, a compact but high-performing model from Mistral AI, designed to run efficiently on consumer hardware.

- Phi-3, a small language model from Microsoft, trained on high-quality data and optimized for mobile and edge deployment.

You control the data. You control the security. You control the cost.

That’s why enterprises and startups alike are shifting to open-source + RAG. You’re not buying a service. You’re building a system.

The Open-Source Tooling Stack: What You Actually Need

Building a RAG system doesn’t mean writing code from scratch. There’s a whole ecosystem of open-source tools that do the heavy lifting.

- LangChain is the Swiss Army knife. It’s an open-source framework that connects LLMs with data sources, embedding models, and retrieval pipelines, so you can build modular, customizable RAG architectures. It lets you chain together your vector database, your embedding model, and your LLM with simple Python code. Need to pull data from Notion? From a SQL database? From a folder of PDFs? LangChain has connectors for all of it.

- vLLM handles the generation part. It’s a high-throughput open-source inference engine that uses PagedAttention to manage GPU memory efficiently, which raises throughput and lowers latency under load. It’s typically faster than a plain Hugging Face Transformers generation loop, which is why it shows up behind real-time chat apps that don’t lag.

- Chroma, Qdrant, and Weaviate store your documents as vectors. Chroma is an open-source vector database built for embedding storage and similarity search, with minimal setup for local or cloud deployment. Qdrant is a scalable open-source vector database with built-in filtering, geo-search, and efficient indexing. Weaviate is a vector search engine that supports hybrid search, combining vector and keyword queries, which suits more complex RAG use cases. You don’t need a full database team. These are designed for developers.

- Embedding models are the translators. They’re specialized neural networks that convert text into numerical vectors; examples include Sentence-BERT, OpenAI’s text-embedding-3-small (a hosted API, not open-source), and the open-source nomic-embed-text. They turn "What’s our refund policy?" into a list of a few hundred numbers (384 for small Sentence-BERT models, 768 for nomic-embed-text). The vector database then compares that list against the vectors it already stores. Popular open-source options include nomic-embed-text, a lightweight model from Nomic AI optimized for accuracy and low resource usage, and BGE, a family of embedding models from the Beijing Academy of Artificial Intelligence that scores strongly on retrieval benchmarks.

You don’t need all of them. But you need at least one vector database, one embedding model, and one way to connect them to your LLM. LangChain + Chroma + Llama 3 is a common, lightweight stack that runs on a single GPU or even a high-end laptop.
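To make the vector database's role concrete, here is a rough sketch of what such a store does at its core: keep (vector, text) pairs and return the closest ones by cosine similarity. `TinyVectorStore` is a hypothetical illustration, not the API of Chroma, Qdrant, or Weaviate, and the three-number vectors are hand-made stand-ins for real embeddings.

```python
import math


class TinyVectorStore:
    """Illustrative in-memory stand-in for a vector database (hypothetical API)."""

    def __init__(self) -> None:
        self._items: list[tuple[list[float], str]] = []

    def add(self, vector: list[float], text: str) -> None:
        """Store one embedding together with the text it came from."""
        self._items.append((vector, text))

    def query(self, vector: list[float], k: int = 3) -> list[tuple[float, str]]:
        """Return the k stored texts most similar to the query vector."""
        scored = [(self._cosine(vector, v), text) for v, text in self._items]
        return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]

    @staticmethod
    def _cosine(a: list[float], b: list[float]) -> float:
        dot = sum(x * y for x, y in zip(a, b))
        norm_a = math.sqrt(sum(x * x for x in a))
        norm_b = math.sqrt(sum(y * y for y in b))
        return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


store = TinyVectorStore()
# Hand-made 3-dimensional vectors; a real embedding model produces hundreds of dimensions.
store.add([0.9, 0.1, 0.0], "Refund policy: returns accepted within 30 days.")
store.add([0.0, 0.2, 0.9], "Enterprise pricing: billed per seat.")

# Pretend this is the embedding of "What's our refund policy?"
results = store.query([0.8, 0.2, 0.1], k=2)
best_score, best_text = results[0]
```

Real vector databases add persistence, approximate nearest-neighbor indexes, and metadata filtering on top of this idea, which is why you would not use a plain list in production.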

Best Practices: What Actually Works

It’s not enough to plug in tools. You have to build smart.

- Start small. Don’t index your whole company’s data on day one. Pick one document type, maybe customer support tickets or product specs. Test it. Measure accuracy. Then expand.

- Quality over quantity. A clean, well-formatted PDF with clear headings beats 100 messy Slack exports. Clean data = better retrieval. To clean up messy inputs, use a tool like Unstructured, an open-source library that parses complex documents (PDFs, emails, HTML) into structured text for RAG indexing.

- Chunking matters. Breaking documents into small pieces (512-1024 tokens) helps retrieval. Too big? The LLM can’t focus. Too small? You lose context. Test 256, 512, and 1024 token chunks. See what gives you the best answers.

- Use metadata. Tag your documents: "department=finance", "last_updated=2026-01-15", "source=internal_wiki". That lets your retriever filter results. No point pulling a 2023 policy if you have a 2026 update.

- Test with real questions. Don’t just use "What is RAG?" Use real user queries from your support logs. See what the system gets wrong. Then fix the data, not the model.
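The chunking and metadata advice above can be sketched as follows. This is a simplified example under stated assumptions: it counts whitespace-separated words rather than model tokens (a real pipeline would use the tokenizer of its embedding model), the `overlap` parameter is an addition not discussed above, and `filter_records` plus the sample policies are hypothetical.

```python
from datetime import date


def chunk_words(text: str, size: int = 512, overlap: int = 64) -> list[str]:
    """Split text into windows of `size` words; neighbors share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def filter_records(records, department=None, updated_after=None):
    """Keep only records whose metadata matches the given filters."""
    kept = []
    for record in records:
        meta = record["metadata"]
        if department is not None and meta["department"] != department:
            continue
        if updated_after is not None and meta["last_updated"] <= updated_after:
            continue
        kept.append(record)
    return kept


# Two versions of the same (invented) policy, tagged with metadata.
old_policy = "Refunds are accepted within 14 days. " * 3
new_policy = "Refunds are accepted within 30 days. " * 3

records = []
for text, updated in [(old_policy, date(2023, 3, 1)), (new_policy, date(2026, 1, 15))]:
    for chunk in chunk_words(text, size=8, overlap=2):
        records.append({
            "text": chunk,
            "metadata": {
                "department": "finance",
                "last_updated": updated,
                "source": "internal_wiki",
            },
        })

# Only search chunks from documents updated after January 1, 2026.
current = filter_records(records, department="finance", updated_after=date(2026, 1, 1))
```

Trying `size` values of 256, 512, and 1024 against the same set of test questions is a cheap way to find out which one retrieves best for your documents.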

Open-Domain vs Closed-Domain: Know Your Use Case

Not all RAG is the same.

Open-domain RAG pulls from the public web. Think customer-facing chatbots that answer questions like, "What’s the weather in Asheville?" or "When did SpaceX launch?" Here, you might use a public API like Google Search or a cached web index. Accuracy is nice, but not critical.

Closed-domain RAG is where the real value is. This is for internal systems: HR bots, IT help desks, legal document assistants. All data stays inside your firewall. You index your Confluence, your Notion, your Salesforce records. No public data, and far less privacy exposure than sending documents to a third party. This is where RAG becomes a competitive advantage.

Red Hat’s OpenShift AI and NVIDIA’s reference architectures are built for closed-domain. They assume your data is sensitive. They’re designed for air-gapped environments. That’s the future.

Performance and Cost: What to Expect

Running RAG isn’t free. But it’s cheaper than you think.

- Embedding models like nomic-embed-text use under 1GB of RAM. You can run them on a $10/month cloud server.

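- Chroma persists to local disk (using SQLite under the hood), and Qdrant ships with its own storage engine and runs as a single binary or container. No need for expensive databases.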

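- vLLM can cut inference latency substantially compared to standard LLM servers. Figures around 3x are commonly cited, but the gain depends on your model, batch size, and hardware. Either way, it means fewer GPUs needed.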

- A full stack (Llama 3 + LangChain + Chroma) can run on a single NVIDIA RTX 4090. No cloud bills. No API limits.

Compare that to GPT-4 Turbo at $0.03 per 1K output tokens. If your chatbot handles 10,000 queries a month at roughly 1,000 tokens each? That’s $300. With open-source? Maybe $15 a month in cloud costs. That’s a 95% drop.
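The arithmetic behind that comparison is worth writing down, because the $300 figure only holds if each query uses about 1,000 tokens. A small sketch, where the token count per query and the $15 self-hosting figure are assumptions you should replace with your own numbers:

```python
def api_monthly_cost(queries: int, tokens_per_query: int, price_per_1k_tokens: float) -> float:
    """Monthly API bill for a given query volume and per-token price."""
    return queries * tokens_per_query / 1000 * price_per_1k_tokens


# Assumed workload: 10,000 queries a month at about 1,000 tokens each.
api_cost = api_monthly_cost(queries=10_000, tokens_per_query=1_000, price_per_1k_tokens=0.03)

# Assumed self-hosting cost from the estimate above.
self_hosted_cost = 15.0

# Fraction of the API bill saved by self-hosting.
savings = (api_cost - self_hosted_cost) / api_cost

print(f"API: ${api_cost:.2f}, self-hosted: ${self_hosted_cost:.2f}, savings: {savings:.0%}")
```

Doubling the tokens per query doubles the API bill but leaves the self-hosted cost roughly flat, so longer augmented prompts push the comparison further toward self-hosting.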

What’s Next? The Future of RAG

RAG isn’t static. The next wave is agentic RAG.

Instead of just retrieving and answering, systems are starting to:

- Break one question into multiple sub-queries.

- Use memory to remember what worked in past retrievals.

- Auto-correct bad queries: "Tell me about our pricing" → "Show me the latest pricing for enterprise plans in North America, updated after Jan 1, 2026."

- Chain multiple retrievals: First get policy docs, then check support tickets, then compare with FAQ.

This isn’t science fiction. It’s happening in research labs and among early adopters. LangChain already supports multi-step workflows. The tools are here. It’s just not widespread yet.
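Two of the ideas above, splitting a question into sub-queries and chaining retrievals across several sources, can be sketched very roughly in plain Python. Everything here is a hypothetical illustration: a real agentic system would ask the LLM to do the decomposition, and retrieval would use embeddings rather than the keyword overlap used below.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased word set, used for a crude keyword-overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def decompose(question: str) -> list[str]:
    """Hypothetical rule-based splitter; an agent would delegate this to the LLM."""
    parts = re.split(r"\band\b|;", question.rstrip("?"))
    return [p.strip() for p in parts if p.strip()]


def best_match(query: str, docs: list[str], min_overlap: int = 2):
    """Return the document sharing the most words with the query, or None."""
    if not docs:
        return None
    q = tokens(query)
    score, doc = max(((len(q & tokens(d)), d) for d in docs), key=lambda pair: pair[0])
    return doc if score >= min_overlap else None


def chained_retrieve(question: str, sources: dict[str, list[str]]):
    """Run every sub-query against each source in order and collect the hits."""
    findings = []
    for sub_query in decompose(question):
        for name, docs in sources.items():
            hit = best_match(sub_query, docs)
            if hit is not None:
                findings.append((sub_query, name, hit))
    return findings


# Invented sources, checked in this order: policies, then tickets, then FAQ.
SOURCES = {
    "policy_docs": ["Refund window is 30 days for all plans."],
    "support_tickets": ["Customer asked whether enterprise plans are priced per seat."],
    "faq": ["Enterprise plans are priced per seat per month."],
}

question = "What is the refund window and how are enterprise plans priced?"
findings = chained_retrieve(question, SOURCES)
```

Each finding records which sub-query hit which source, so the final prompt can cite where every piece of context came from.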

Final Thought: RAG Is the Bridge

Open-source LLMs are powerful. But they’re also blind to your world. RAG is the bridge. It connects the raw intelligence of a model to the real, changing, messy data of your business.

You don’t need to be an AI expert. You don’t need to retrain models. You just need to index your documents, pick the right tools, and test with real questions. The rest? The open-source community has already built it for you.

Can I use RAG with any open-source LLM?

Yes. RAG works with any LLM that accepts text prompts. That includes Llama 3, Mistral 7B, Phi-3, and even smaller models like Gemma. The key is compatibility with embedding models and retrieval frameworks like LangChain. You don’t need to modify the LLM itself. You just feed it augmented prompts.

Do I need a GPU to run RAG?

Not always. For small-scale use, like a team of 10 people, you can run embedding models and lightweight LLMs (like Phi-3) on a CPU. But for production use with 100+ concurrent users, a GPU (even a single RTX 4090) is strongly recommended. vLLM’s PagedAttention makes GPU usage much more efficient, reducing the need for multiple high-end cards.

How do I know if my RAG system is working well?

Measure three things: accuracy (does the answer match your source docs?), relevance (is the retrieved info actually useful?), and latency (how fast does it respond?). Set up a simple test suite with 50 real user questions. Track how often the system hallucinates or pulls outdated info. If more than 5% of answers are wrong, go back to your data indexing.
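A test suite like that does not need special tooling. The sketch below is one possible shape for it, assuming your pipeline is exposed as a function that takes a question and returns an answer string; `fake_rag` and the test cases are hypothetical, and a substring check is only a crude proxy for accuracy (relevance still needs a human look at the retrieved snippets).

```python
import time


def evaluate(rag_answer, test_cases, max_error_rate=0.05):
    """Run each (question, expected_substring) pair and report errors and latency."""
    wrong = []
    latencies = []
    for question, expected in test_cases:
        start = time.perf_counter()
        answer = rag_answer(question)
        latencies.append(time.perf_counter() - start)
        if expected.lower() not in answer.lower():
            wrong.append(question)
    error_rate = len(wrong) / len(test_cases)
    return {
        "error_rate": error_rate,
        "avg_latency_s": sum(latencies) / len(latencies),
        "failed_questions": wrong,
        # More than 5% wrong means: go back to the data indexing.
        "passed": error_rate <= max_error_rate,
    }


def fake_rag(question: str) -> str:
    """Hypothetical stand-in for a real RAG pipeline."""
    canned = {
        "What is the refund window?": "Refunds are accepted within 30 days.",
        "How is the enterprise plan billed?": "It is billed annually.",
    }
    return canned.get(question, "I don't know.")


# In practice, use around 50 real questions from your support logs.
TEST_CASES = [
    ("What is the refund window?", "30 days"),
    ("How is the enterprise plan billed?", "per seat"),
]

report = evaluate(fake_rag, TEST_CASES)
```

Keeping the list of failed questions in the report makes it easy to trace each miss back to the document that should have answered it.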

Is RAG better than fine-tuning the LLM?

It depends. Fine-tuning changes the model’s weights, so it’s permanent and expensive. RAG changes the input, so it’s dynamic and cheap. If your data changes weekly (like pricing, policies, or product specs), RAG wins. If you need the model to understand jargon or tone (like legal writing), fine-tuning helps. Most teams use both: RAG for facts, fine-tuning for style.

What’s the biggest mistake people make with RAG?

They focus on the LLM instead of the data. A perfect LLM with junk documents gives you junk answers. The most common failure? Indexing PDFs without extracting text properly. Or not cleaning up duplicate, outdated, or poorly formatted files. Your RAG system is only as good as the data you feed it.

Can I use RAG without coding?

Not really. You’ll need to write at least a little Python to connect the tools. But there are higher-level frameworks that reduce the code needed, like LlamaIndex, an open-source data framework for connecting LLMs to external data sources with tools for indexing and querying, and Haystack, a Python-based open-source framework for building search and RAG pipelines from modular components. Still, you’ll need someone who can debug pipelines and interpret logs.

Veera Mavalwala

February 21, 2026 AT 22:19

Let me tell you something - RAG isn’t just a buzzword, it’s the only thing keeping enterprise AI from becoming a glorified fortune cookie machine. I’ve seen teams dump 20,000 PDFs into a vector DB without cleaning them, then wonder why their bot says ‘our pricing is $49/month’ when the latest doc says $149. The model isn’t broken. The data is a dumpster fire. And no, ‘chunking’ isn’t some fancy tech term - it’s just cutting your documents into bite-sized pieces so the AI doesn’t choke on a 50-page contract written in 1998 font. You want accuracy? Start with a single folder of cleaned support tickets. Not your entire SharePoint. Not your Slack archive. One. Folder.

And don’t even get me started on people using GPT-4 for closed-domain stuff. You’re handing your HR policies to a black box that trained on Reddit threads from 2021. Open-source LLMs? They live on your server. Your data stays locked behind your firewall. That’s not innovation - that’s basic hygiene.

LangChain? Sure, it’s the Swiss Army knife. But half the people using it don’t know what an embedding is. They just copy-paste a Colab notebook and call it a day. Then they panic when the system starts hallucinating about ‘Q3 revenue projections’ that don’t exist. The magic isn’t in the framework. It’s in the metadata. Tag your docs. Date them. Label them. If you can’t tell which version of the policy you’re feeding the AI, you deserve to get fired.

Ray Htoo

February 21, 2026 AT 23:20

This is the most lucid breakdown of RAG I’ve read in months. I’ve been tinkering with Llama 3 + Chroma on a Raspberry Pi 5 just to see if it’s possible - and it is. Not fast, not pretty, but it works. The real game-changer for me was realizing I don’t need to fine-tune anything. Just index our internal SOPs, slap on nomic-embed-text, and suddenly the AI knows our internal lingo without me having to retrain a 7B parameter model from scratch. It’s like giving a genius a flashlight in a dark room - suddenly they see what you see.

Also, the chunking advice? Spot on. We tried 2048-token chunks first. The AI kept mixing up product features from two different releases. Went down to 512 and boom - accuracy jumped from 62% to 94%. Sometimes the simplest fix is the one nobody talks about.

Kieran Danagher

February 23, 2026 AT 07:23

Someone’s clearly been reading the same blog posts I’ve been screaming at my team about for six months. Finally, someone says it out loud: the LLM is the least important part. The data is everything. I’ve seen companies spend $200k on GPUs and then feed the system scanned PDFs of handwritten meeting notes. You don’t need a Tesla to drive on a dirt road. You need a shovel.

And yes - vLLM is a godsend. We cut our latency from 4.2s to 1.1s just by switching from Hugging Face’s default server. No code changes. Just swap the inference engine. It’s like upgrading from dial-up to fiber without changing your ISP.

Shivam Mogha

February 24, 2026 AT 10:17

Sheila Alston

February 25, 2026 AT 12:41

How can you even trust open-source models? I mean, who’s auditing the weights? What if someone slipped in a backdoor during training? I read a paper last year that showed how a single poisoned sample could make a model give the wrong answer to any question about ‘government policy’ - and no one checks the training data. This whole ‘run it on your server’ thing sounds great until you realize you’re now responsible for the security of an AI that might be secretly anti-corporate. I’m not saying it’s evil - I’m saying you’re naive if you think it’s safe.

And don’t get me started on LangChain. It’s like letting a toddler drive a forklift. You think you’re in control, but one wrong import and suddenly your whole pipeline is trying to retrieve data from a dead GitHub repo from 2019. It’s chaos dressed up as innovation.

Natasha Madison

February 26, 2026 AT 06:07

Oh, so now we’re supposed to trust open-source models because they’re ‘on our server’? Please. Who wrote the code? A guy in Ukraine? A grad student in Bangalore? How do we know there isn’t a hidden trigger phrase that makes it leak data when someone says ‘I am the admin’? I’ve seen what happens when companies think ‘open-source = secure.’ They end up on the front page of TechCrunch with a headline like ‘Company Leaks All Employee Records via AI Bot.’

And don’t tell me about ‘metadata tagging.’ That’s just window dressing. If you’re indexing documents, you’re still storing them. And storage means attack surface. You think your IT team can secure a vector database? They can’t even keep the coffee machine from crashing the network. This isn’t progress. It’s a liability waiting to happen.

sampa Karjee

February 26, 2026 AT 22:51

Let’s be honest - this entire RAG movement is just a distraction from the fact that open-source LLMs still can’t do basic math. You think indexing your HR docs will make it understand tax codes? Please. I’ve tested Phi-3 on a simple calculation: ‘If an employee earns $75,000 and gets a 12% raise, what’s their new salary?’ It said $83,000. The correct answer is $84,000. A child could do this. A model trained on Reddit cannot. RAG doesn’t fix fundamental incompetence - it just hides it behind a fancy retrieval layer.

And LangChain? That’s not a framework. It’s a graveyard of abandoned GitHub repos. Half the plugins don’t work anymore. The documentation is outdated. The community is a ghost town. You’re not building a system - you’re assembling a Frankenstein monster from dead code. And then you wonder why it explodes during production.

OONAGH Ffrench

February 27, 2026 AT 05:24

poonam upadhyay

February 28, 2026 AT 05:35

Okay but have you even thought about how many people are just blindly copying this ‘LangChain + Chroma + Llama 3’ stack like it’s some sacred ritual? I’ve seen interns set this up and then leave the server exposed on port 8000 with no auth because ‘it’s just for testing.’ And now your entire internal wiki is indexed in a public vector DB because someone thought ‘oh, it’s open-source so it’s safe.’

And don’t even get me started on embedding models - nomic-embed-text? That’s fine if you’re indexing cat memes. But try using it on legal contracts written in legalese and suddenly your similarity search is returning ‘refund policy’ when you asked about ‘non-compete clauses.’ The model doesn’t understand context - it just finds numbers that are kinda close. You think that’s intelligence? That’s statistical luck.

And then there’s the ‘start small’ advice. Yeah, right. Your ‘small’ is always someone else’s ‘critical system.’ You index one folder - then you need two. Then you need the whole CRM. Then you need the ERP. Then you need the backups. Then you’re spending $12k/month on cloud GPUs because you didn’t plan ahead. RAG isn’t cheap. It’s a slow-burning financial hemorrhage disguised as innovation.

Patrick Sieber

March 1, 2026 AT 04:24

I’ve built three RAG systems in production. One for legal docs, one for engineering specs, one for customer onboarding. The biggest win? Not the tech. It’s the process. Before RAG, people were emailing PDFs around like it was 2008. Now? They just ask the bot. And it answers. Accurately. From the right version. No more ‘I think the policy says…’ or ‘I swear it was on the intranet last week.’

Yes, it needs maintenance. Yes, chunking matters. Yes, metadata is non-negotiable. But that’s not a flaw - that’s responsibility. And if you’re too lazy to label your documents, maybe you shouldn’t be in charge of knowledge management.

Also - running this on a single RTX 4090? It’s not just possible. It’s cheaper than a coffee subscription. I’ve seen teams spend more on GPT-4 API calls in one month than on their entire server rack for a year. The math isn’t even close.