Most people think the secret to a powerful AI is the architecture of the model. They focus on parameters, layers, and compute power. But here is the truth: a model is only as smart as the data it eats. If you feed a model a trillion tokens of internet scrapings filled with typos, hate speech, and outdated facts, you aren't building an intelligence; you're building a mirror of the internet's worst habits. This is where data curation comes in: the systematic process of collecting, cleaning, and organizing data to ensure it is fit for training generative AI models. It is the difference between a bot that hallucinates and one that provides reliable, expert-level insights.

Why "More Data" is a Dangerous Strategy

In the early days of Large Language Models, the trend was simply "more is better." The goal was to ingest the entire web. However, we've learned that volume without quality leads to bias amplification. When a model sees the same social prejudice or factual error a million times in a raw dataset, it doesn't just learn the pattern; it amplifies it, treating those biases as absolute truths. To stop this, we need to move from passive collection to active curation.

The risk is real. If your training corpus contains skewed demographics or historical inaccuracies, the model will reflect those gaps. For instance, if a medical AI is trained on data primarily from one region, its diagnostic suggestions for other populations will be unreliable. Effective curation ensures the dataset is comprehensive and balanced, preventing the AI from developing "blind spots" that could lead to harmful or unfair outcomes.

The Three Pillars of the Curation Workflow

Building a high-quality corpus isn't a single task; it's a pipeline. To get a dataset that actually improves model performance, you have to move through three distinct technical phases.

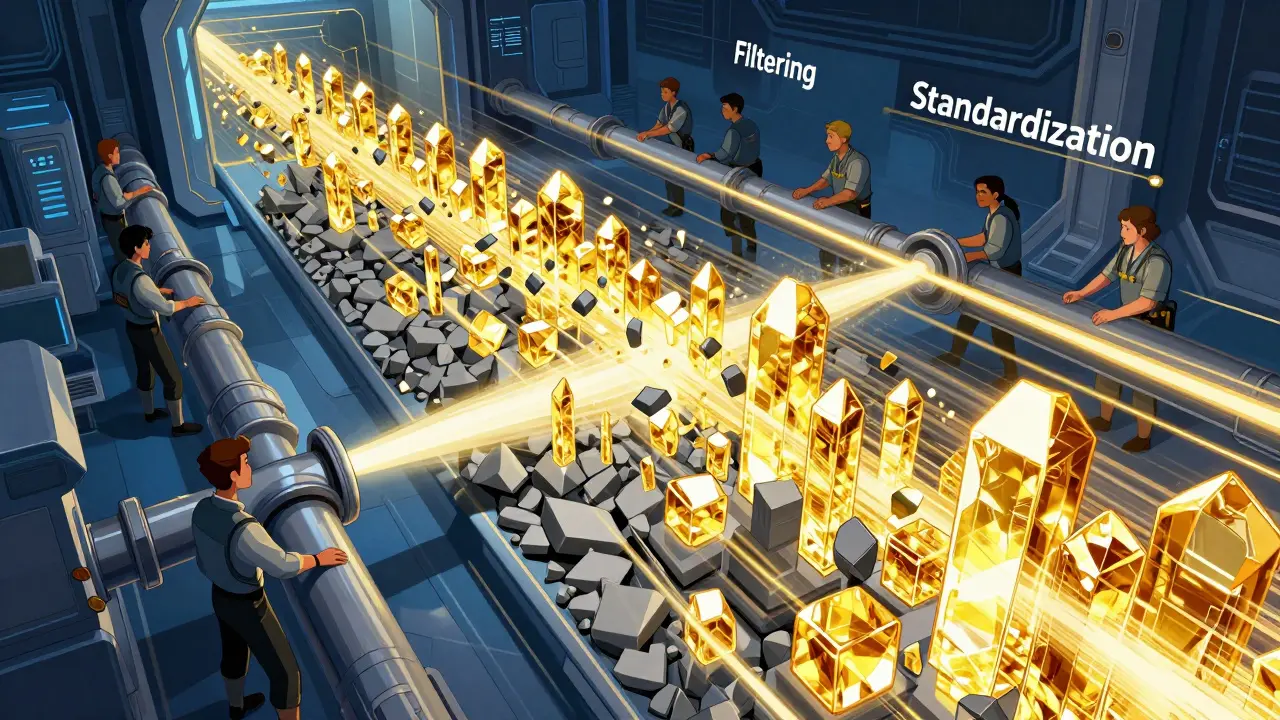

1. Standardization and Formatting

Raw data is messy. It comes with HTML tags, weird line breaks, and inconsistent encoding. The first step is to strip away the noise. This means normalizing spaces, removing boilerplate text from websites, and ensuring that a document from 2010 looks the same to the model as one from 2026. If you leave in the "Click here to subscribe" fragments from a scraped blog, the model might start thinking those phrases are part of natural human conversation.
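The normalization step described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a production cleaner: the `normalize_document` function and the `BOILERPLATE_PATTERNS` list are hypothetical names, and the regex-based tag stripping is an assumption that would likely be replaced by a real HTML parser on messy pages.

```python
import html
import re
import unicodedata

# Hypothetical boilerplate list; a real pipeline would maintain a much larger one.
BOILERPLATE_PATTERNS = [
    re.compile(r"click here to subscribe\.?", re.IGNORECASE),
]

TAG_RE = re.compile(r"<[^>]+>")
WHITESPACE_RE = re.compile(r"\s+")


def normalize_document(raw):
    """Strip tags, decode entities, drop boilerplate, and collapse whitespace."""
    text = TAG_RE.sub(" ", raw)                  # crude HTML tag removal
    text = html.unescape(text)                   # "&amp;" becomes "&"
    text = unicodedata.normalize("NFKC", text)   # unify Unicode variants
    for pattern in BOILERPLATE_PATTERNS:
        text = pattern.sub(" ", text)
    return WHITESPACE_RE.sub(" ", text).strip()


raw_page = "<p>Data&nbsp;curation &amp; cleaning.</p>\n\n<div>Click here to subscribe</div>"
print(normalize_document(raw_page))
```

Running every document through the same function, regardless of when or where it was scraped, is what gives the model a consistent view of the text.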

2. Filtering and Selection

This is where you decide what stays and what goes. You need to aggressively remove spam, low-quality "content farm" text, and duplicates. Duplicate data is particularly dangerous because it over-represents certain ideas, which is a direct path to bias amplification. You also want to filter out "noisy" data: sentences that are too short or grammatically broken for the model to learn any meaningful linguistic patterns from.
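As a rough sketch of this phase, the snippet below drops very short documents and exact duplicates. The `MIN_WORDS` threshold and the function names are assumptions chosen for illustration; near-duplicate detection needs a fuzzier method than the hash comparison shown here.

```python
import hashlib

MIN_WORDS = 5  # assumed threshold; tune it for your corpus


def fingerprint(text):
    """Hash a case- and whitespace-insensitive form of the text."""
    canonical = " ".join(text.lower().split())
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def filter_corpus(docs):
    """Drop too-short documents and exact duplicates, keeping first occurrences."""
    seen = set()
    kept = []
    for doc in docs:
        if len(doc.split()) < MIN_WORDS:
            continue  # too short to carry useful linguistic signal
        key = fingerprint(doc)
        if key in seen:
            continue  # already have an equivalent document
        seen.add(key)
        kept.append(doc)
    return kept


docs = [
    "The quick brown fox jumps over the lazy dog.",
    "the quick  brown fox jumps over the lazy dog.",
    "Buy now!",
    "Curation removes noise before training starts.",
]
print(filter_corpus(docs))
```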

3. Cleansing and Quality Improvement

Once you have the right data, you have to make it precise. This involves fixing typos and standardizing technical jargon. For example, in a corporate dataset, you might see "AI," "Artificial Intelligence," and "Machine Learning" used interchangeably. A curator decides whether to keep those variations for linguistic diversity or unify them for conceptual clarity. It also involves scrubbing PII (Personally Identifiable Information) to ensure the model doesn't accidentally memorize and leak someone's home address or phone number.

| Feature | Manual Curation | AI-Optimized Curation |
|---|---|---|
| Scalability | Very Low (Human-dependent) | Very High (Algorithm-driven) |
| Speed | Slow / Iterative | Real-time / High-velocity |
| Accuracy | High (Nuanced) | Variable (Pattern-based) |
| Cost | High per data point | Low per data point |

Modern Techniques for High-Fidelity Datasets

We can't rely on humans to read a trillion tokens. Instead, we use Natural Language Processing (NLP) to automate the heavy lifting. NLP tools can run sentiment or toxicity classifiers to flag harmful content, or use document summarization to identify redundant articles that should be pruned from the corpus.

Another powerful tool is Self-Supervised Learning (SSL). Contrastive methods such as SimCLR (originally developed for images; text pipelines use sentence-embedding models in the same role) let teams extract embeddings from raw data without needing manual labels. These embeddings act like a "fingerprint" for the data. If two pieces of text have nearly identical fingerprints, they are redundant. By calculating a similarity matrix, curators can automatically delete duplicates and keep only the most diverse examples, which can substantially reduce training time and costs.
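To make the similarity-matrix idea concrete, here is a small sketch that takes embeddings as given and greedily keeps only items that are not too close to anything already kept. The toy two-dimensional vectors, the `0.95` threshold, and the greedy selection rule are all assumptions for illustration; real embeddings would come from a trained encoder and have hundreds of dimensions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def similarity_matrix(embeddings):
    """Pairwise cosine similarities as a list of lists."""
    return [[cosine(a, b) for b in embeddings] for a in embeddings]


def select_diverse(embeddings, threshold=0.95):
    """Greedily keep indices whose similarity to every kept item is below threshold."""
    sim = similarity_matrix(embeddings)
    kept = []
    for i in range(len(embeddings)):
        if all(sim[i][j] < threshold for j in kept):
            kept.append(i)
    return kept


# Toy embeddings: items 0 and 1 are near-duplicates, item 2 is distinct.
embeddings = [[1.0, 0.0], [0.99, 0.01], [0.0, 1.0]]
print(select_diverse(embeddings))
```

The full matrix is quadratic in the number of documents, so at corpus scale this comparison is usually approximated with nearest-neighbor indexes or clustering rather than computed exhaustively.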

When real-world data is scarce or too biased, we turn to Synthetic Data Generation. Using tools like NVIDIA NeMo Curator, developers can use an existing LLM to create diverse variants of data based on specific prompt templates. These synthetic records are then passed through a reward model that scores them for quality. If the synthetic data is high-quality and diverse, it fills the gaps in the real-world dataset, effectively "balancing" the corpus to reduce bias.
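The generate-then-score loop can be sketched independently of any particular toolkit. The code below does not use the NeMo Curator API; `generate` and `score` are hypothetical callables standing in for an LLM and a reward model, and the templates and `min_score` cutoff are assumptions.

```python
# Hypothetical prompt templates; real ones would target the gaps in your corpus.
PROMPT_TEMPLATES = [
    "Rewrite for a beginner: {seed}",
    "Rewrite for an expert: {seed}",
]


def generate_and_filter(seeds, generate, score, min_score=0.7):
    """Create one candidate per (seed, template) and keep those the scorer accepts.

    generate: callable mapping a prompt string to generated text (stand-in for an LLM).
    score: callable mapping text to a quality score in [0, 1] (stand-in for a reward model).
    """
    accepted = []
    seen = set()
    for seed in seeds:
        for template in PROMPT_TEMPLATES:
            candidate = generate(template.format(seed=seed))
            if candidate in seen:
                continue  # skip exact repeats to protect diversity
            if score(candidate) >= min_score:
                seen.add(candidate)
                accepted.append(candidate)
    return accepted


# Stub callables so the sketch runs without a model.
def fake_llm(prompt):
    return prompt.upper()


def fake_reward(text):
    return 1.0 if "EXPERT" in text else 0.0


print(generate_and_filter(["what is deduplication"], fake_llm, fake_reward))
```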

Fighting Bias Amplification in the Pipeline

Bias doesn't just enter the system during collection; it can be amplified during the curation process itself. If your automated filter is too aggressive, it might accidentally delete minority perspectives because they look like "outliers" to an algorithm. To fight this, the industry is moving toward a hybrid operation: machines do the initial massive screening, and humans provide the final confirmation for critical edge cases.
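One simple way to picture the hybrid split is a pair of thresholds on a filter's score: confident cases are handled automatically, and everything in between goes to a person. The `route` function and both thresholds are hypothetical values chosen for illustration.

```python
def route(toxicity_score, drop_above=0.9, keep_below=0.2):
    """Decide what happens to a document given a filter score in [0, 1]."""
    if toxicity_score >= drop_above:
        return "drop"          # the machine is confident the text is harmful
    if toxicity_score <= keep_below:
        return "keep"          # the machine is confident the text is fine
    return "human_review"      # ambiguous edge case: send to a curator


for score in (0.05, 0.5, 0.97):
    print(score, route(score))
```

Widening the middle band sends more documents to reviewers, which costs more but lowers the chance that an unusual yet valuable perspective is silently removed.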

Active learning is another safeguard. Instead of a static dataset, the system identifies "uncertain" cases during the model's inference phase. These flagged examples are then sent back to human experts for curation and added to the training set. This creates a feedback loop where the model literally tells the curators, "I don't understand this part of the world," and the curators provide the specific data needed to fix the gap.
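A common way to identify uncertain cases is to rank predictions by entropy and send the highest-entropy examples to reviewers. The sketch below assumes the model exposes class probabilities per example; entropy is only one possible uncertainty measure, and the function names are illustrative.

```python
import math


def entropy(probs):
    """Shannon entropy of a probability distribution (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_uncertain(predictions, k):
    """Return the k example ids whose predicted distributions are most uncertain.

    predictions: dict mapping example id to a list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda ex: entropy(predictions[ex]), reverse=True)
    return ranked[:k]


predictions = {
    "a": [0.99, 0.01],  # confident
    "b": [0.50, 0.50],  # maximally uncertain
    "c": [0.70, 0.30],  # somewhat uncertain
}
print(select_uncertain(predictions, 2))
```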

Integrating Curation into the AI Lifecycle

Curation isn't a one-time event; it's a lifecycle. It starts with identifying the source (whether it's sensor outputs in computer vision or web crawls for LLMs) and ends with storing the data in a warehouse with clear lineage documentation. Knowing exactly where a piece of data came from and how it was transformed is vital for auditability and legal compliance.
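Lineage documentation can be as simple as a record that notes the source and hashes the content before and after every transformation. The `LineageRecord` structure and the `apply_step` helper below are hypothetical; a production system would persist these records alongside the data in the warehouse.

```python
import hashlib
from dataclasses import dataclass, field


def sha256_hex(text):
    """Stable content hash used to identify a specific version of a document."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class LineageRecord:
    source: str                      # where the document came from
    content_hash: str                # hash of the current version
    steps: list = field(default_factory=list)


def apply_step(text, record, name, transform):
    """Run a transformation and log what it was applied to and what it produced."""
    output = transform(text)
    record.steps.append({
        "step": name,
        "input_hash": sha256_hex(text),
        "output_hash": sha256_hex(output),
    })
    record.content_hash = sha256_hex(output)
    return output


raw = "  Hello  "
record = LineageRecord(source="example-crawl", content_hash=sha256_hex(raw))
clean = apply_step(raw, record, "strip_whitespace", str.strip)
print(clean, len(record.steps), record.steps[0]["step"])
```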

For those working with sensitive data, Federated Learning is a game-changer. It allows multiple parties to collaborate on a project without actually sharing the raw data. The curation happens locally at the edge, and only the learned model weights are shared. This preserves privacy while still allowing the model to learn from a diverse, global dataset.
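To show what sharing only weights can look like, here is a sketch of federated averaging, one common aggregation scheme and an assumption on our part rather than the only option. Each client trains locally and sends a weight vector; the server combines them in proportion to each client's dataset size. Weights are plain lists of floats here for simplicity.

```python
def federated_average(client_weights, client_sizes):
    """Average per-client weight vectors, weighted by local dataset size.

    client_weights: list of equal-length lists of floats, one per client.
    client_sizes: number of local training examples held by each client.
    """
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two clients: the second holds three times as much data, so it counts three times as much.
print(federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3]))
```

The raw documents never leave the clients; only the numbers in `client_weights` cross the network.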

Does data curation actually reduce training time?

Yes, significantly. By removing redundant data and low-quality noise through embedding-based selection and deduplication, you reduce the total number of tokens the model needs to process. Higher-quality data also leads to faster convergence, meaning the model reaches its peak performance in fewer training epochs.

Can synthetic data actually introduce more bias?

It can if you aren't careful. If the model generating the synthetic data is already biased, it will produce biased synthetic records. The key is using a "reward model" and prompt templates that explicitly demand diversity and accuracy, followed by human verification of a sample of the generated data.

What is the difference between data cleaning and data curation?

Cleaning is a subset of curation. Cleaning focuses on fixing errors (typos, missing values, formatting). Curation is a broader strategic process that includes deciding what data to collect, how to organize it, how to label it, and how to manage its lifecycle to meet a specific goal, such as reducing bias in an LLM.

How do I handle PII during the curation process?

Use automated PII detection tools that leverage named entity recognition (NER) to identify and redact emails, phone numbers, and addresses. The best practice is to replace this sensitive info with generic tokens (e.g., [EMAIL_ADDRESS]) so the model learns the structure of the data without memorizing the private info.
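The token-replacement idea can be illustrated with regular expressions. This sketch is a deliberately simple stand-in for the NER-based detection described above: it only handles emails and phone-like digit runs, the patterns are assumptions that will miss many formats and may over-match long numbers, and `[PHONE_NUMBER]` is a placeholder name we chose to mirror `[EMAIL_ADDRESS]`.

```python
import re

# Simplified patterns for illustration; real PII detection needs NER and locale rules.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text):
    """Replace emails and phone-like numbers with generic placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL_ADDRESS]", text)
    return PHONE_RE.sub("[PHONE_NUMBER]", text)


print(redact_pii("Contact jane.doe@example.com or +1 (555) 010-9999."))
```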

Why is human-in-the-loop still necessary?

Algorithms are great at patterns but terrible at nuance. A machine might flag a controversial but factual historical account as "toxic" because of the words used. Human experts provide the context necessary to distinguish between harmful content and complex, valuable information, ensuring the model doesn't become overly sanitized and bland.