Adding a Large Language Model to your enterprise app isn't like adding a new API or a database. You're essentially plugging a stochastic, unpredictable engine into your business logic. If you don't map out how that engine can be manipulated, you're leaving the door wide open for attackers to bypass your security controls entirely. Threat modeling is no longer a "nice-to-have" for AI projects; it's the only way to stop a prompt injection from turning into a full-scale data breach.

| Focus Area | Primary Risk | Key Mitigation |
|---|---|---|
| Input Layer | Prompt Injection | Strict input validation & guardrails |
| Data Layer | Data Poisoning / Leakage | Role-Based Access Control (RBAC) |
| Output Layer | Insecure Output Handling | Output sanitization & human-in-the-loop |
| Model Layer | Model Theft / Inversion | API rate limiting & monitoring |

Why Traditional Threat Modeling Fails AI

Most security teams are used to the STRIDE model, which looks for Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, and Elevation of Privilege. Those categories still matter, but they don't cover the "weird" ways LLMs fail. For example, a traditional firewall can't stop a user from politely asking a chatbot to ignore its system instructions and reveal the company's secret API keys. This is the core of the prompt injection problem.

The unpredictability of LLMs creates a massive attack surface. Unlike a hard-coded function, an LLM's output changes based on the nuance of the input. If your app takes that output and feeds it directly into a database query or a shell command, you've created an injection path, up to and including remote code execution, that static analysis tools are unlikely to flag, because the dangerous string only exists at runtime. This is why we need a specialized approach that blends traditional architecture review with AI-specific risk frameworks.
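To make the database case concrete, here is a minimal sketch using Python's standard `sqlite3` module. The `orders` table, the `find_orders_for_customer` helper, and the idea that the model extracts a customer name are all assumptions made for illustration. The point is only that model output is bound as a query parameter and never spliced into the SQL string.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL)"
    )
    conn.executemany(
        "INSERT INTO orders (customer, total) VALUES (?, ?)",
        [("alice", 10.0), ("bob", 25.5)],
    )
    conn.commit()
    return conn


def find_orders_for_customer(
    conn: sqlite3.Connection, llm_extracted_name: str
) -> list[tuple]:
    """Look up orders using a value that came out of the model.

    The value is passed as a bound parameter, so the database treats it
    strictly as data, whatever characters it contains.
    """
    cur = conn.execute(
        "SELECT id, customer, total FROM orders WHERE customer = ?",
        (llm_extracted_name,),
    )
    return cur.fetchall()
```

With this shape, a model response such as `alice' OR '1'='1` is compared literally against the `customer` column and matches nothing. The same principle applies to shell commands: pass arguments as a list rather than building a command string from model output.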

Mapping the LLM Attack Surface

To secure an enterprise integration, you first have to identify every point where the model interacts with your system. In a typical Retrieval-Augmented Generation (RAG) setup, the risks are spread across three main zones:

- The User Interface: This is where prompt injection happens. Attackers use "jailbreaking" techniques to trick the model into bypassing safety filters.

- The Knowledge Base: If an attacker can upload a malicious document to the vector database, the LLM will "retrieve" that poison and present it as fact to other users. This is known as data poisoning.

- The Integration Layer: This is the "glue" code. If the LLM has the power to call tools (like sending an email or deleting a file), an attacker can use the model as a proxy to perform unauthorized actions.
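For the integration layer in particular, one common pattern is an explicit allowlist between the model's requested tool call and the code that executes it. The sketch below is hypothetical throughout: the `get_weather` tool, the `{"name": ..., "arguments": ...}` call shape, and the `ToolCallRejected` exception are assumptions, not any specific framework's API.

```python
from typing import Any, Callable


def get_weather(city: str) -> str:
    """Hypothetical read-only tool used only for this example."""
    return f"Weather lookup requested for {city}"


# Only tools registered here can ever be invoked from model output.
# Each entry maps a tool name to (function, exact set of argument names).
ALLOWED_TOOLS: dict[str, tuple[Callable[..., Any], set[str]]] = {
    "get_weather": (get_weather, {"city"}),
}


class ToolCallRejected(Exception):
    """Raised when the model asks for something outside the allowlist."""


def dispatch_tool_call(call: dict[str, Any]) -> Any:
    """Validate a model-proposed tool call before executing it."""
    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise ToolCallRejected(f"tool not allowlisted: {name!r}")
    func, expected_args = ALLOWED_TOOLS[name]
    if not isinstance(args, dict) or set(args) != expected_args:
        raise ToolCallRejected(f"unexpected arguments for {name!r}")
    if not all(isinstance(value, str) for value in args.values()):
        raise ToolCallRejected("arguments must be strings")
    return func(**args)
```

A request for an unregistered tool such as `delete_file` fails closed with `ToolCallRejected`, no matter how the prompt that produced it was phrased.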

If you're building for a highly regulated sector, like banking, the stakes are higher. A research project called ThreatModeling-LLM showed that using specialized frameworks to map these flows can improve the accuracy of identifying the necessary security controls, specifically by aligning them with the NIST SP 800-53 security and privacy controls used for federal information systems.

Modernizing the Process with AI-Driven Modeling

Threat modeling has historically been a slow, manual process involving whiteboards and a few exhausted security architects. But we're seeing a shift where AI is actually used to secure AI. Tools like AWS Threat Designer use multimodal models (like Claude) to analyze architecture diagrams and automatically suggest potential threats.

This "shift-left" approach means developers can run a threat model during the design phase rather than waiting for a security audit two weeks before launch. By feeding the AI your system diagrams and data flow descriptions, the tool can flag missing components (such as a missing authentication layer between the LLM and the internal database) long before a single line of code is written. This isn't about replacing human judgment, but about automating the boring part: listing the 50 most common ways a system can break.

The Enterprise LLM Security Checklist

When you're reviewing your integration, don't just ask "is it secure?" Ask these specific questions to uncover the hidden gaps in your architecture:

- Does the LLM have "God Mode"? If the model can execute code or call APIs, does it have a restricted set of permissions, or is it running with administrative privileges?

- How are we handling the output? Is the LLM's response treated as untrusted user input? If it generates HTML or JavaScript, are you sanitizing it to prevent Cross-Site Scripting (XSS)?

- Is there a "Human-in-the-Loop" for high-risk actions? If the LLM suggests a fund transfer or a password reset, does a human have to click "approve" before it happens?

- Are we monitoring for "Anomalous Prompts"? Are you using a tool like Lasso to detect patterns of prompt injection in real-time?

- Is the data segmented? In a RAG system, can User A's prompt retrieve documents that only User B should be able to see?
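The output-handling question in the checklist above can be illustrated with a few lines of standard-library Python. This is a sketch under the assumption that the reply is rendered as plain text inside an HTML page; `render_llm_reply` and the `bot-reply` class name are made up for the example.

```python
import html


def render_llm_reply(llm_text: str) -> str:
    """Wrap a model reply in application-controlled markup.

    The model's text is escaped first, so any tags or quotes it contains
    are displayed as text instead of being interpreted by the browser.
    """
    return f'<div class="bot-reply">{html.escape(llm_text)}</div>'
```

If the application genuinely needs the model to produce rich HTML, escaping is not enough and an allowlist-based HTML sanitizer is the usual next step. That is outside the scope of this sketch.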

Building a Resilient Defense Strategy

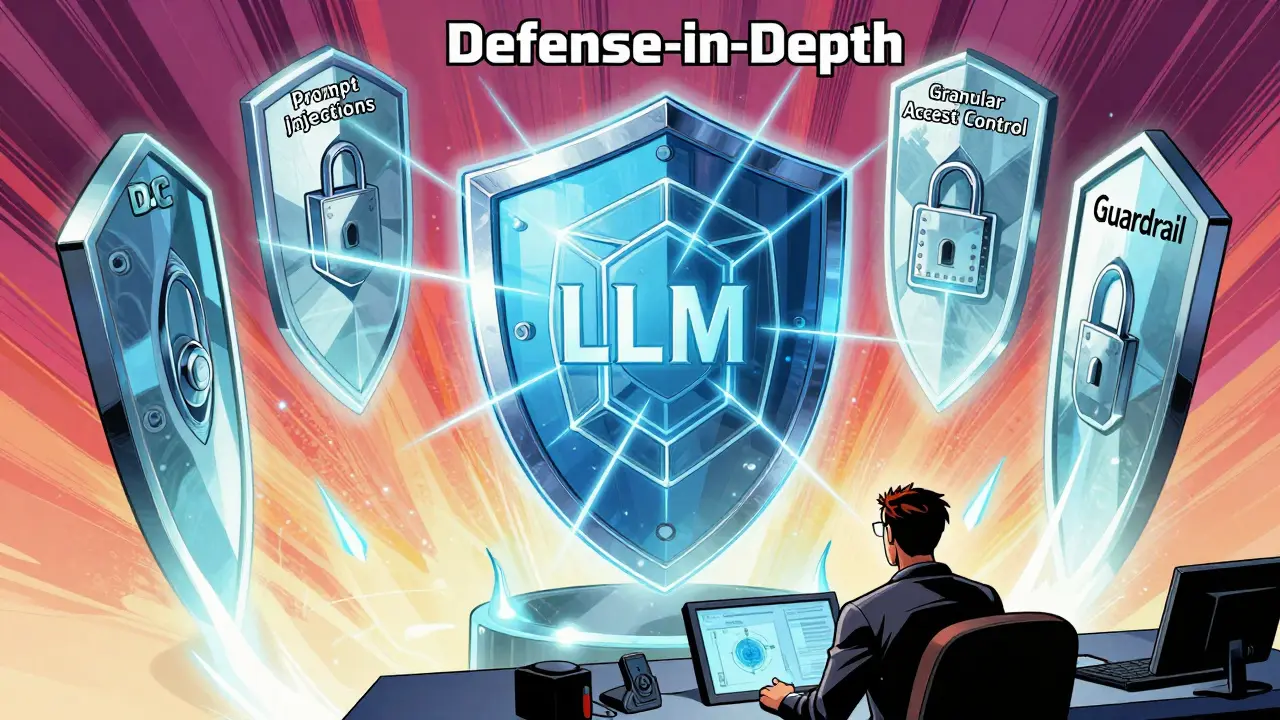

The best way to handle LLM risks is a "defense-in-depth" strategy. You can't rely on the model's internal safety filters because they can be bypassed. Instead, wrap the model in multiple layers of security.

First, implement a Guardrail Layer. This is a separate, smaller model or a set of regex rules that inspects the prompt before it hits the LLM and the response before it hits the user. If the guardrail detects "ignore all previous instructions," it kills the request immediately.
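A regex-based version of this guardrail might look roughly like the sketch below. The patterns, the `sk-` secret format, and the `guarded_call` wrapper are illustrative assumptions. A real deployment would need far broader coverage, since simple patterns are easy to evade with rephrasing, and would likely pair them with a classifier model.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Illustrative patterns only; attackers can rephrase around these.
INJECTION_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior)\s+instructions", re.IGNORECASE),
    re.compile(r"reveal\s+(your\s+)?system\s+prompt", re.IGNORECASE),
]

# Hypothetical secret format: "sk-" followed by 20 or more alphanumerics.
SECRET_PATTERN = re.compile(r"\bsk-[A-Za-z0-9]{20,}\b")


@dataclass
class Verdict:
    allowed: bool
    reason: str = ""


def check_prompt(prompt: str) -> Verdict:
    """Inspect the prompt before it reaches the LLM."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(prompt):
            return Verdict(False, f"matched injection pattern: {pattern.pattern}")
    return Verdict(True)


def check_response(response: str) -> Verdict:
    """Inspect the response before it reaches the user."""
    if SECRET_PATTERN.search(response):
        return Verdict(False, "response appears to contain a secret")
    return Verdict(True)


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    """Run the model only if both the inbound and outbound checks pass."""
    if not check_prompt(prompt).allowed:
        return "Request blocked by guardrail."
    response = model(prompt)
    if not check_response(response).allowed:
        return "Response withheld by guardrail."
    return response
```

Here `model` is any callable that maps a prompt string to a response string, which keeps the guardrail independent of the provider SDK in use.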

Second, apply Granular Access Control. The LLM should not have its own credentials. Instead, it should act on behalf of the user. If the user doesn't have access to the "Q3 Financials" folder in your document store, the RAG system shouldn't be able to fetch those documents for the model, regardless of how the user phrases the prompt.
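One way to picture this is a retriever that filters by the caller's permissions before it ranks anything. The sketch below uses a toy in-memory index and keyword overlap in place of a real vector search. The `Document`, `User`, and `retrieve_for_user` names are hypothetical, as is the group-based permission model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this document


@dataclass(frozen=True)
class User:
    user_id: str
    groups: frozenset[str]


def retrieve_for_user(
    user: User, query: str, index: list[Document], top_k: int = 3
) -> list[Document]:
    """Return the best-matching documents the user is allowed to read."""
    # Authorization runs before ranking, so unreadable documents can never
    # end up in the model's context, however the prompt is phrased.
    visible = [doc for doc in index if doc.allowed_groups & user.groups]

    # Toy relevance score: number of query words that appear in the document.
    terms = set(query.lower().split())
    scored = [(len(terms & set(doc.text.lower().split())), doc) for doc in visible]
    ranked = sorted(
        (pair for pair in scored if pair[0] > 0),
        key=lambda pair: pair[0],
        reverse=True,
    )
    return [doc for _, doc in ranked[:top_k]]
```

In a production vector store the same idea is usually expressed as a metadata filter applied as part of the similarity query, but the ordering matters in either case: filter on the user's identity first, then rank.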

Finally, follow the OWASP Top 10 for LLM Applications. This industry-standard list covers the most critical vulnerabilities, from insecure output handling to excessive agency, providing a roadmap for what your security audits should actually be looking for.

What is the difference between prompt injection and traditional SQL injection?

SQL injection targets a structured database query by manipulating syntax to trick the database. Prompt injection targets the "reasoning" of the LLM, using natural language to override the model's system instructions. SQL injection is a syntactic attack that parameterized queries can reliably block; prompt injection is a semantic manipulation with no equivalent hard boundary between instructions and data.

Can I just use the LLM provider's built-in safety filters?

No. Provider filters are designed for general safety (e.g., preventing hate speech), not for enterprise security. They cannot protect your specific business logic, prevent data leakage of your proprietary files, or stop complex jailbreak attempts tailored to your application.

How does RAG affect my threat model?

RAG introduces a new attack vector: the data source. If an attacker can inject a malicious document into your vector database, the LLM will treat that information as a trusted source of truth, potentially leading to "indirect prompt injection" where the model is hijacked by the data it reads.

What is "Excessive Agency" in the context of LLMs?

Excessive Agency occurs when an LLM is given too many permissions to call external tools or APIs. If a model can delete files or change settings without a human approval step, a successful prompt injection can lead to catastrophic system damage.
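A human approval step can be sketched as a small gate that executes low-risk actions immediately and parks high-risk ones until someone signs off. Everything here is an assumption made for illustration: the `ApprovalGate` class, the action names in `HIGH_RISK_ACTIONS`, and the ticket-based flow.

```python
import itertools
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical set of actions that must never run without a human sign-off.
HIGH_RISK_ACTIONS = {"transfer_funds", "reset_password", "delete_file"}


@dataclass
class ApprovalGate:
    executor: Callable[[str, dict], str]  # performs the real action
    pending: dict[int, tuple[str, dict]] = field(default_factory=dict)
    audit_log: list[str] = field(default_factory=list)
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1))

    def request(self, action: str, params: dict) -> str:
        """Called with an action the model proposed."""
        if action not in HIGH_RISK_ACTIONS:
            return self.executor(action, params)
        # High-risk: store the request and wait for a human decision.
        ticket = next(self._ids)
        self.pending[ticket] = (action, params)
        return f"pending approval (ticket {ticket})"

    def approve(self, ticket: int, approver: str) -> str:
        """Called from a human-facing UI, never from model output."""
        action, params = self.pending.pop(ticket)
        self.audit_log.append(f"{approver} approved {action} (ticket {ticket})")
        return self.executor(action, params)
```

The important property is that `approve` is reachable only from a channel the model cannot write to, so a successful prompt injection can at most queue a request for review.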

Do I need a different threat model for fine-tuned models vs. RAG?

Yes. Fine-tuned models are susceptible to "training data poisoning," where the model's core behavior is altered during training. RAG systems are more susceptible to "contextual poisoning," where the retrieved documents contain the malicious payload. The two call for different monitoring and validation strategies: vetting training data before fine-tuning in the first case, and validating documents at ingestion and retrieval time in the second.