Most companies are currently treating AI like a Wild West experiment. They pump millions into LLMs, then panic when a chatbot hallucinates a fake legal precedent or leaks sensitive customer data. The reality is that there is a massive gap between spending and actual production outcomes. If you don't have a way to steer the ship, you aren't innovating; you're just gambling with your brand's reputation. Implementing a generative AI governance strategy isn't about slowing down; it's about building a brake system that actually allows you to drive faster.

| Focus Area | Key Goal | Typical Outcome |
|---|---|---|
| Councils | Cross-functional oversight | Higher compliance, slower speed |
| Policies | Standardized rules | Consistency across teams |
| Accountability | Direct ownership | 33% faster deployment |

Moving from "Governance Theater" to Real Control

There is a dangerous trend called "governance theater." This is when a company creates a fancy slide deck and a monthly meeting but doesn't actually change how the AI is built or monitored. As Dr. Marcus Wong from the TechPolicy Institute pointed out, this creates an illusion of safety while the real risks, such as bias and security holes, remain untouched. To avoid this, you need a model that actually connects to your technical workflow.

Effective governance relies on Responsible AI: the practice of designing, deploying, and evolving AI systems that are ethical, transparent, and accountable. This isn't just a moral choice; it's a business one. According to PwC's 2025 survey, organizations with mature governance see a 23% higher ROI on their AI investments because they spend less time fixing disasters and more time scaling what works.

The Three Primary Governance Models

Depending on your company size and risk appetite, you'll likely land in one of these three camps. Each has a very different impact on your velocity.

The Council-Based Approach

This is the most common starting point. You build a committee with people from legal, data science, and business units. It's great for catching regulatory red flags, but it's a notorious bottleneck. In fact, 62% of companies report that these reviews add up to three weeks to their deployment timelines. It's a safety net, but it can feel like a chokehold for developers.

The Policy-Driven Framework

Here, you establish a set of "laws" for the AI: what data can be used, how privacy is handled, and what the acceptable accuracy threshold is. While this provides consistency, the risk is rigidity. Generative AI evolves so fast that a policy written in January is often obsolete by March. If your rules are too static, your team will either stop innovating or start bypassing the rules entirely.
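One way to keep such rules from going stale is to express them as versioned data with a review date, so an outdated policy is flagged instead of silently enforced. The following is a minimal sketch in Python, not a reference implementation. The class `AIPolicy`, its fields (`allowed_data_classes`, `min_accuracy`, `review_by`), and the function `check_deployment` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AIPolicy:
    """Hypothetical policy record; field names are illustrative only."""
    allowed_data_classes: frozenset  # e.g. {"public", "internal"}
    min_accuracy: float              # acceptable accuracy threshold, 0..1
    review_by: date                  # after this date the policy counts as stale


def check_deployment(policy, data_classes, accuracy, today):
    """Return a list of human-readable violations (empty list means pass)."""
    violations = []
    if today > policy.review_by:
        violations.append("policy is past its review date")
    disallowed = set(data_classes) - policy.allowed_data_classes
    if disallowed:
        violations.append("disallowed data classes: " + ", ".join(sorted(disallowed)))
    if accuracy < policy.min_accuracy:
        violations.append(
            f"accuracy {accuracy:.2f} below threshold {policy.min_accuracy:.2f}"
        )
    return violations
```

Treating a stale policy as a violation in its own right forces a review of the rule itself, which speaks to the rigidity risk described above.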

The Accountability-Focused Model

This is where the most advanced organizations are heading. Instead of a committee approving a project, a specific owner is assigned accountability for the outcome. Governance is baked directly into the design phase. Because there's no longer a "waiting room" for approval, these organizations see 33% faster deployment cycles. It shifts the focus from "Did we get permission?" to "Is this system performing safely in real-time?"
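As a rough illustration of "baked into the design phase," the check below could run in a deployment pipeline and stop any system that lacks a named owner or a design-time risk review. It is a sketch under assumptions: `AISystem`, its fields, and `deployment_blockers` are hypothetical names, not part of any framework mentioned in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    owner: Optional[str] = None   # the single accountable person (hypothetical field)
    risk_reviewed: bool = False   # set during the design phase, not by a committee


def deployment_blockers(systems):
    """Map each system name to the reasons it should not ship yet."""
    blockers = {}
    for system in systems:
        reasons = []
        if not system.owner:
            reasons.append("no accountable owner assigned")
        if not system.risk_reviewed:
            reasons.append("design-time risk review missing")
        if reasons:
            blockers[system.name] = reasons
    return blockers
```

Systems that already have an owner and a completed review pass straight through, which is where the speed advantage over a committee queue would come from.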

The Technical Pillars of a Robust Framework

You can't govern what you can't measure. A real framework needs to be built on a technical foundation, often the NIST AI Risk Management Framework, a voluntary guideline designed to manage the risks of AI systems to protect individuals, organizations, and society. About 68% of organizations use this as their primary reference. To make it work, you need five specific components:

- Transparency and Explainability: You need to know why a model gave a specific answer. In healthcare, for example, 87% of organizations require execution graphs to validate AI decisions.

- Security and Risk Management: This involves red teaming, the process of intentionally attacking an AI system to find vulnerabilities before malicious actors do. Financial institutions are obsessed with this, with 92% making it mandatory.

- Ethical Bias Mitigation: Actively hunting for bias doesn't just feel good; it works. Mature governance has led to an average 37% reduction in bias incidents.

- Continuous Monitoring: AI isn't "set and forget." You need real-time observability to catch model drift as it happens.

- Policy Compliance: Ensuring the system aligns with laws like the EU AI Act, the first comprehensive legal framework for AI in the world, which imposes strict requirements on high-risk AI systems.
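The continuous monitoring pillar above can be made concrete with a drift score. The sketch below uses the Population Stability Index (PSI), a common drift statistic that this article does not itself prescribe. The bin count, the smoothing value `eps`, and the 0.2 alert threshold are assumptions (0.2 is a widely used rule of thumb, not a standard), and the function names are hypothetical.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric signal."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_alert(baseline, current, threshold=0.2):
    """True when the current sample has drifted past the assumed threshold."""
    return psi(baseline, current) > threshold
```

In practice the monitored signal might be a model confidence score or an output length, sampled at deployment time for the baseline and then on a rolling window.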

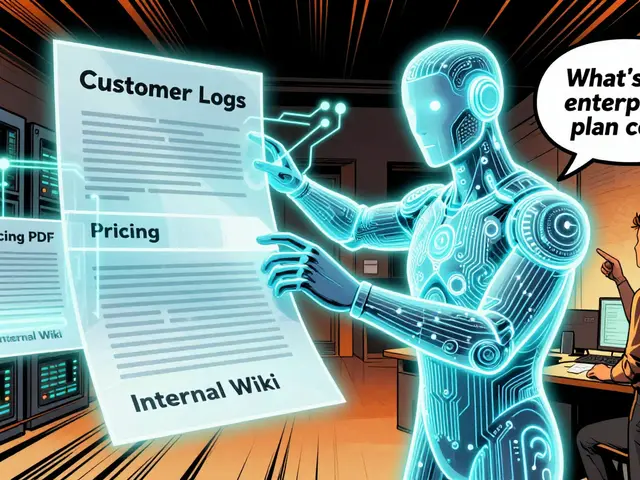

Solving the "Bring Your Own AI" Problem

One of the biggest headaches for any AI leader today is Shadow AI. Microsoft found that 78% of employees bring their own AI tools to work without telling IT. If you simply ban these tools, you don't stop the behavior; you just hide it. Research shows that banning AI can actually increase shadow usage from 22% to as much as 67%.

The solution isn't a ban; it's a sandbox. By providing secure, company-sanctioned environments where employees can experiment, some organizations have boosted compliance from a measly 31% to a robust 89%. Give them a safe place to play, and they'll stop using their personal accounts for company data.

Implementing Governance: A Step-by-Step Guide

If you're starting from scratch, don't try to boil the ocean. Follow this phased approach to avoid wasting thousands of engineering hours.

- The Readiness Review (10-15 Days): Treat this like a cloud migration assessment. Audit your current tools, identify who is using what, and map out your data flows.

- Goal Setting to Avoid "Lock-in": Define what you want the AI to achieve long-term. Organizations that skip this step face 3.2x higher costs when they eventually have to modify their systems because they built into a dead-end architecture.

- Technical Setup (6-8 Weeks): Implement your monitoring tools and observability layers. This is where you integrate ISO/IEC 42001, an international standard that specifies requirements for an AI management system.

- Training and Upskilling (3-4 Weeks): Your stakeholders need to understand risk, not just prompts. Effective programs usually require 25-35 hours of instruction.

The Future: From Static Rules to Agentic Guardrails

We are moving away from simple chatbots and toward Agentic AI: AI systems that can autonomously plan and execute complex tasks to achieve a goal, rather than just generating text. This changes everything. A chatbot that hallucinates a fact is a nuisance; an autonomous agent that hallucinates a financial transaction is a catastrophe.

By the end of 2025, we expect nearly half of large enterprises to move toward "dynamic guardrails." These are AI-driven controls that adjust their strictness based on the real-time risk of the task. For example, an agent might have full autonomy to summarize a meeting but require human-in-the-loop approval before sending an email to a client. Governance is shifting from a "no" machine to a growth accelerator.
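A dynamic guardrail of this kind can be sketched as a risk score mapped to one of three decisions. Everything in the example is an assumption made for illustration: the action names, the base scores in `BASE_RISK`, the context adjustments, and the two thresholds. A production system would derive these from its own risk assessment.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_human_approval"
    BLOCK = "block"


# Hypothetical base risk per action type (0 = harmless, 1 = severe).
BASE_RISK = {
    "summarize_meeting": 0.1,
    "send_client_email": 0.6,
    "execute_payment": 0.9,
}


def risk_score(action, touches_external_party=False, amount=0.0):
    """Combine a base score with simple context signals; capped at 1.0."""
    score = BASE_RISK.get(action, 1.0)  # unknown actions are treated as maximum risk
    if touches_external_party:
        score += 0.1
    if amount > 10_000:
        score += 0.2
    return min(score, 1.0)


def guardrail(action, auto_allow_below=0.5, block_at=0.95, **context):
    """Return the decision for an action given its context-adjusted risk."""
    score = risk_score(action, **context)
    if score >= block_at:
        return Decision.BLOCK
    if score >= auto_allow_below:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW
```

With these assumed numbers, `guardrail("summarize_meeting")` is allowed outright, while `guardrail("send_client_email", touches_external_party=True)` is routed to a human, matching the meeting-summary versus client-email example above.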

What is the biggest risk of using a council-based governance model?

The primary risk is the creation of operational bottlenecks. Because council-based models require cross-functional approval from legal, compliance, and technical teams, they often add significant delays to deployment, sometimes 14 to 21 days per release, which can stifle innovation in fast-moving markets.

How does an accountability-focused model differ from a policy-driven one?

A policy-driven model relies on a set of pre-defined rules that everyone must follow, which can become rigid and outdated. An accountability-focused model embeds governance into the design process and assigns clear ownership of the outcome to a specific person, allowing for faster pivots and a 33% increase in deployment speed.

Why is "Shadow AI" such a problem for governance?

Shadow AI occurs when employees use personal AI tools for work tasks without company oversight. This creates massive security gaps and data privacy leaks. Because 78% of employees have done this, companies can't track what data is leaving the building or ensure the outputs are compliant with industry regulations.

What is the NIST AI Risk Management Framework?

The NIST AI RMF is a voluntary framework used by roughly 68% of organizations to manage AI risks. It provides a structured way to govern, map, measure, and manage the risks associated with AI systems, ensuring they are reliable, safe, and fair.

How does governance improve the ROI of AI projects?

Mature governance reduces the cost of failure. By catching bias, security flaws, and regulatory issues early, companies avoid expensive lawsuits and redesigns. This leads to an average 23% higher ROI as initiatives move from experimental prototypes to scalable business assets more efficiently.

Sandi Johnson

April 22, 2026 AT 14:42

Oh yeah, because nothing says "fast-paced innovation" like a cross-functional committee meeting every Tuesday to decide if a prompt is too spicy. Truly a masterclass in efficiency here.

Ronnie Kaye

April 22, 2026 AT 17:59

Totally agree with the sandbox idea! It's honestly hilarious that we think banning stuff works in the 21st century. Just give the people the tools and watch the magic happen, or at least watch them stop using their personal Gmail for company secrets!

Michael Gradwell

April 23, 2026 AT 13:11

most of these companies are just pretending to care about ethics while they chase the bag. the real governance is just whoever has the loudest voice in the room. typical corporate nonsense

Priyank Panchal

April 24, 2026 AT 16:42

The mention of

Flannery Smail

April 24, 2026 AT 19:31

I don't know, the accountability model sounds like a great way to find one person to fire when the AI inevitably deletes the production database. Why change a system that's already perfectly broken?

Rakesh Kumar

April 25, 2026 AT 05:41

Wait, this is absolutely mind-blowing! The idea that we can actually steer this beast instead of just hoping for the best is just incredible! I am genuinely vibrating with excitement over these dynamic guardrails! Imagine a world where the AI knows when it's being too risky and just asks for help! It's like a digital safety net for the entire human race! This is the revolution we've been waiting for, and I can't believe we're actually seeing the blueprint for it right here! Everything changes now!

Bill Castanier

April 25, 2026 AT 07:35

Good points. Sandboxes work.

Emmanuel Sadi

April 25, 2026 AT 21:02

Imagine thinking that a 3-4 week training program is enough to fix the systemic incompetence of an entire workforce. Cute. The ROI stats are probably just massaged by consultants who get paid to make the disaster look like a strategy. Truly impressive how we keep pretending a few slides and a NIST framework will stop a determined employee from leaking data into a public LLM.

Tony Smith

April 27, 2026 AT 16:50

It is truly a marvel of modern corporate architecture to witness the seamless integration of bureaucratic stagnation and technological advancement. I am utterly delighted by the suggestion that we might simply assign a single soul to be the sacrificial lamb for AI failures. How wonderfully progressive of us to streamline the process of finding a scapegoat while maintaining a veneer of formal oversight. One must simply admire the audacity of suggesting that a 'sandbox' will curb the innate human desire to bypass every single security protocol ever devised by man. Truly, a beacon of hope for all of us navigating this digital wilderness with such refined grace.