Refactoring a single file is easy. Refactoring twelve files that talk to each other? That’s where most developers break things. You change one function name, and suddenly your payment module crashes because it relied on a utility you didn’t realize was shared. This is the nightmare of legacy codebases.

Prompt chaining is a technique that breaks complex coding tasks into sequential, interconnected prompts. When applied to multi-file refactors, it stops AI from guessing context across unrelated files. Instead of asking an LLM to "fix this whole project," you guide it through a structured conversation: analyze dependencies, plan changes, execute step-by-step, and verify.

This isn't just about saving time. According to Leanware's 2025 study, prompt chaining reduces error rates in multi-file refactors from 68% (with single prompts) down to 22%. It also cuts completion time by 37% compared to manual work. If you’re managing a repository with more than five interdependent files, skipping this approach is risky.

The Core Problem: Why Single Prompts Fail

Large Language Models (LLMs) have context windows, but they don’t have memory in the way humans do. When you paste ten files into a chat interface and ask for a refactor, the model treats them as a bag of text. It might miss subtle dependencies between File A and File C because File B distracted its attention.

Siemens’ Digital Industries division found that single-prompt refactoring succeeds only 32% of the time when changes span more than three files. The issue isn’t intelligence; it’s focus. Without structure, the AI hallucinates connections or ignores critical constraints like backward compatibility.

Prompt chaining solves this by forcing the AI to acknowledge relationships before changing code. It turns a chaotic dump of requirements into a disciplined workflow.

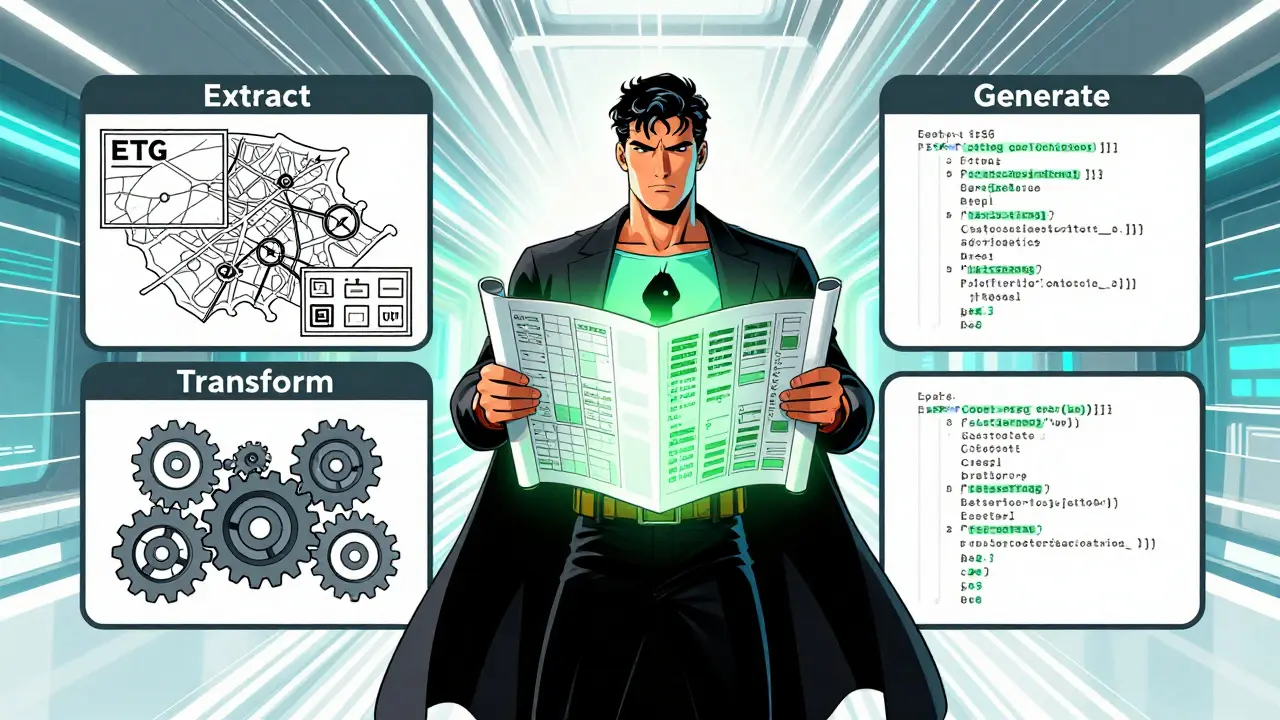

The ETG Pattern: Extract, Transform, Generate

Effective prompt chaining follows the ETG pattern (Extract, Transform, Generate) documented in the 'Interactive Book of Prompting'. Here’s how it works in practice:

- Extract: Ask the AI to map dependencies. For example: "Analyze these 12 files to identify all MVC pattern implementations, component relationships, and dependency chains." Do not ask for code yet. Just get a map.

- Transform: Create a plan with constraints. Prompt: "Create a refactoring plan that replaces all hardcoded secrets with environment variables while maintaining backward compatibility across these interconnected files."

- Generate: Produce actual code changes with verification steps. Limit each generation step to 3-5 files that share direct dependencies.
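The three phases above can be sketched as a small function. This is a minimal illustration, not a LangChain or Autogen API: `llm` is a hypothetical callable that takes a prompt string and returns the model's text reply, and the prompt wording is only an example.

```python
from typing import Callable, Dict

# Hypothetical type: any function that sends a prompt to a model and returns text.
LLM = Callable[[str], str]


def run_etg_chain(llm: LLM, files: Dict[str, str], goal: str) -> Dict[str, str]:
    """Run Extract -> Transform -> Generate over one small cluster of files."""
    listing = "\n\n".join(f"### {name}\n{source}" for name, source in files.items())

    # Extract: ask only for a dependency map, no code.
    dependency_map = llm(
        "Analyze these files and list the dependencies between them. "
        "Do not write any code.\n\n" + listing
    )

    # Transform: turn the map and the goal into a constrained plan.
    plan = llm(
        f"Using this dependency map:\n{dependency_map}\n\n"
        f"Create a refactoring plan for this goal: {goal}. "
        "Maintain backward compatibility and do not break public APIs."
    )

    # Generate: one call per file, each carrying the map and plan forward.
    rewritten: Dict[str, str] = {}
    for name, source in files.items():
        rewritten[name] = llm(
            f"Dependency map:\n{dependency_map}\n\nPlan:\n{plan}\n\n"
            f"Apply the plan to {name} only and return the full new file.\n\n{source}"
        )
    return rewritten
```

Each later prompt embeds the output of the earlier ones, which is what makes this a chain rather than three unrelated requests. In practice `files` would hold one cluster of 3-5 directly dependent files, and each returned file would be reviewed before the next cluster starts.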

DataCamp recommends keeping context windows tight: between 4,096 and 8,192 tokens per chain segment. Overloading the context leads to dropped details. Stick to small clusters of related files.
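One way to respect a per-segment budget is to pack files greedily until a limit is reached. The sketch below is illustrative only: `estimate_tokens` uses a rough four-characters-per-token guess, which is an assumption rather than a real tokenizer, and both function names are hypothetical.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    """Rough estimate: about 4 characters per token (an assumption, not a tokenizer)."""
    return max(1, len(text) // 4)


def batch_by_budget(
    files: Dict[str, str], max_tokens: int = 8192, max_files: int = 5
) -> List[List[str]]:
    """Greedily pack file names into segments that stay under both limits."""
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for name, source in files.items():
        cost = estimate_tokens(source)
        # Close the current segment if adding this file would exceed a limit.
        if current and (used + cost > max_tokens or len(current) >= max_files):
            batches.append(current)
            current, used = [], 0
        current.append(name)
        used += cost
    if current:
        batches.append(current)
    return batches
```

The packing follows input order and knows nothing about dependencies, so `files` should already be ordered so that related files sit next to each other. A single file larger than the budget still gets its own segment and would need to be split by hand.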

Choosing Your Framework: LangChain vs. Autogen

You don’t need to build prompt chains from scratch. Frameworks handle the heavy lifting of state management and context switching. As of late 2025, two tools dominate this space:

| Feature | LangChain | Autogen |
|---|---|---|
| Cross-file Reference Tracking (TypeScript) | 92% | 85% |
| Accuracy in Python Ecosystems | 81% | 89% |
| Documentation Quality | 4.6/5.0 | 3.8/5.0 |
| Community Support | Strong (Discord) | Moderate |

If you work primarily in TypeScript or JavaScript, LangChain is the safer bet. Its recent 'FileGraph' technology (v0.1.16, January 2026) automatically maps dependencies with 94% accuracy. For Python projects, Autogen offers slightly better precision.

Note that both frameworks require 15-25% more computational resources than single-prompt approaches. If you’re running locally on limited hardware, factor this into your decision.

Step-by-Step Implementation Guide

Getting started takes 2-3 weeks if you’re familiar with LLM prompting. Follow these four critical steps:

- Create a Dependency Map: Use tools like CodeQL to visualize which files call which functions. Don’t rely on the AI to discover this alone. Feed it a pre-built graph.

- Design Chain Templates: Write reusable prompts for each phase (Extract, Transform, Generate). Include specific constraints like "do not break public APIs" or "maintain existing test coverage."

- Implement Verification Steps: Between every chain segment, run unit tests. Dr. Sarah Chen from Microsoft warns that skipping verification increases failure probability by 300%. Make testing mandatory.

- Integrate with Version Control: Each step should output a Git diff. Review the diff before committing. Never let the AI commit directly. Dr. Alexei Petrov calls version control integration "non-negotiable" for safety.
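For the first step, a pre-built graph does not have to come from a heavyweight tool. As a lightweight stand-in for CodeQL on a Python-only repository, the sketch below uses the standard library `ast` module to record which local modules import which. The function name and the shape of `sources` are assumptions made for illustration.

```python
import ast
from typing import Dict, Set


def build_import_graph(sources: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each module to the other modules in `sources` that it imports.

    `sources` maps a module name (e.g. "billing") to its source text.
    Imports of anything outside `sources` are ignored.
    """
    graph: Dict[str, Set[str]] = {name: set() for name in sources}
    for name, text in sources.items():
        for node in ast.walk(ast.parse(text)):
            if isinstance(node, ast.Import):
                targets = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                targets = [node.module]
            else:
                continue
            for target in targets:
                root = target.split(".")[0]  # "pkg.sub" counts as "pkg"
                if root in sources and root != name:
                    graph[name].add(root)
    return graph
```

The resulting dictionary can be serialized and pasted into the Extract prompt so the model starts from known edges instead of guessing them. This only sees static `import` statements; dynamic imports and bare relative imports such as `from . import x` are not captured.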

A real-world example: A team used LangChain to refactor 47 React files from class components to functional components. They saved three weeks of work by having the chain output diffs for each file pair before proceeding. The key was incremental progress, not big-bang changes.

Pitfalls to Avoid

Prompt chaining isn’t magic. It has limits. Watch out for these common traps:

- Circular Dependencies: Chains struggle with loops involving more than seven files. In these cases, generate temporary stubs to break the cycle.

- Legacy Code Without Docs: Success rates drop to 41% in undocumented COBOL or older Java systems. Add documentation layers first if possible.

- Third-Party Library Blind Spots: AI often misses external dependencies. One developer reported a broken payment module because the chain renamed a utility function used by a third-party library. Always audit imports manually.

- Superficial Changes: Dr. Margaret Lin from Stanford warns that 28% of chained refactorings required significant manual correction within six months due to missing deeper architectural issues. Use AI for syntax and structure, not business logic redesign.
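The circular-dependency trap is easier to handle when the cycle is found before the chain runs. Here is a minimal sketch, assuming the dependency map is a plain dictionary from file name to the set of files it depends on; `find_cycle` is a hypothetical helper that uses a standard depth-first search.

```python
from typing import Dict, List, Set


def find_cycle(graph: Dict[str, Set[str]]) -> List[str]:
    """Return one dependency cycle as a list of nodes, or [] if none exists."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    color = {node: WHITE for node in graph}
    stack: List[str] = []

    def visit(node: str) -> List[str]:
        color[node] = GRAY
        stack.append(node)
        for dep in sorted(graph.get(node, ())):
            state = color.get(dep, WHITE)
            if state == GRAY:
                # dep is already on the current path, so we closed a loop.
                return stack[stack.index(dep):] + [dep]
            if state == WHITE:
                found = visit(dep)
                if found:
                    return found
        stack.pop()
        color[node] = BLACK
        return []

    for node in sorted(graph):
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return []
```

If the returned list is non-empty, its length tells you how many files the loop involves, and any edge in it is a candidate for a temporary stub.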

Best Practices for Safe Refactoring

To maximize success, adopt "test-driven chaining." This means each refactoring step generates and verifies unit tests before moving forward. GitHub’s Awesome Prompt Engineering repository shows this pattern in 73% of successful case studies.
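A test gate between steps can be sketched in a few lines. The names here are hypothetical: each step is a label plus a function that applies one refactoring change, and `tests_pass` is any callable that reports whether the test suite is green.

```python
from typing import Callable, List, Tuple

# (label, function that applies one refactoring step)
Step = Tuple[str, Callable[[], None]]


def run_gated_chain(steps: List[Step], tests_pass: Callable[[], bool]) -> List[str]:
    """Apply steps in order; stop at the first step after which tests fail.

    Returns the labels of the steps that were applied and verified.
    """
    verified: List[str] = []
    for label, apply_step in steps:
        apply_step()
        if not tests_pass():
            break  # leave the failing step for human review; do not continue
        verified.append(label)
    return verified
```

In a real repository, `tests_pass` could wrap something like `subprocess.run(["pytest", "-q"]).returncode == 0`, assuming the project uses pytest. The function deliberately does not roll back the failing step, so a reviewer can inspect exactly what broke.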

Also, limit scope. Don’t try to refactor an entire module in one chain. Break it into logical units: authentication, data handling, UI components. Treat each unit as a separate chain instance.

Finally, involve human review at every stage. The EU’s 2025 AI Development Guidelines require human verification for multi-file refactorings exceeding ten interconnected files in critical infrastructure. Even outside regulated industries, human oversight prevents costly errors.

What is prompt chaining in code refactoring?

Prompt chaining is a method where multiple prompts are linked sequentially to handle complex tasks. In refactoring, it breaks down multi-file changes into smaller, manageable steps: analyzing dependencies, planning transformations, generating code, and verifying results. This prevents context loss and reduces errors.

Which framework is best for multi-file refactors?

For TypeScript and JavaScript projects, LangChain is currently the top choice due to its high accuracy in tracking cross-file references (92%). For Python ecosystems, Autogen performs slightly better (89% accuracy). Both offer robust support for structured workflows.

Why do single prompts fail for large refactors?

Single prompts overwhelm the LLM’s context window and lack structural guidance. Siemens’ Digital Industries division found that single-prompt refactoring succeeds only 32% of the time when changes span more than three files. The AI may miss dependencies or introduce inconsistencies because it processes files as isolated text blocks rather than connected systems.

How does version control integrate with prompt chaining?

Each step in the chain should produce a Git diff that can be reviewed before committing. This allows developers to catch errors early and revert changes if needed. Direct commits from AI are discouraged due to the risk of unintended side effects.
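When the chain returns whole files rather than patches, a reviewable diff can be produced locally. This sketch uses Python's standard `difflib` to emit a unified diff in the same general format Git displays; `review_diff` is a hypothetical helper name, and the `a/` and `b/` prefixes simply mimic Git's convention.

```python
import difflib


def review_diff(path: str, before: str, after: str) -> str:
    """Return a unified diff of one file for human review."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    return "".join(lines)
```

An empty string means the step changed nothing in that file. Anything else goes to a human, who decides whether to write the new content to disk and commit it.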

What are the risks of automated refactoring?

Risks include superficial changes that ignore deeper architectural issues, missed third-party dependencies, and increased technical debt. Dr. Margaret Lin from Stanford warns that 28% of chained refactorings required significant manual correction within six months. Human verification remains essential.