Large language models aren’t magic. They don’t "think" like humans. They don’t have memories, feelings, or intentions. But they can write essays, answer questions, and even crack jokes - often better than most people expect. So how do they do it? Let’s cut through the hype and explain exactly what these models are, how they work, and why they’re suddenly everywhere.

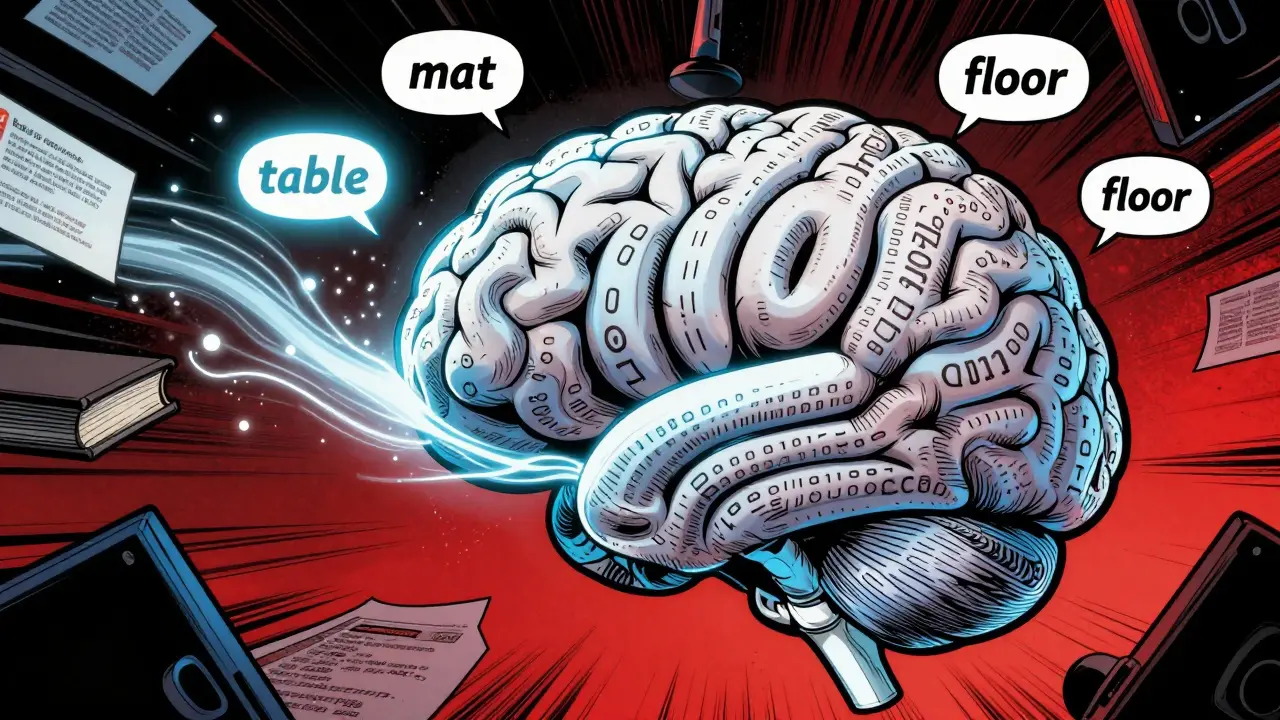

They Predict Words. That’s It.

At their core, large language models (LLMs) are supercharged word predictors. Imagine you’re reading a sentence: "The cat sat on the..." What’s the next word? Most people would guess "mat." An LLM does the same thing - but it’s seen billions of sentences like this, and it’s calculated the odds for every possible next word across every possible context. It doesn’t "know" the cat is on a mat. It just knows that in 97% of similar cases, "mat" came next. That’s it.This isn’t a dictionary lookup. It’s pattern recognition at scale. Every time you type a message, ask a question, or even write a grocery list, the model is silently running this prediction game - one word at a time. It guesses the most likely next token (a word or part of a word), then uses that guess as the new starting point to predict the next one. It keeps going until it stops.

How They Learn: Training on the Entire Internet (Almost)

LLMs don’t start with any knowledge. They begin as blank slates. Then, they’re fed massive amounts of text - books, Wikipedia, Reddit threads, news articles, code repositories, forum posts. Think of it as reading every public piece of writing ever made, over and over.During training, the model is shown a sentence like: "Paris is the capital of France." Then it’s asked: "Given the first four words, what’s the next?" It guesses. Maybe it says "Germany." Wrong. The system calculates the error, adjusts its internal settings, and tries again - billions of times. This process, called backpropagation, slowly tweaks the model’s internal weights until it gets better at predicting what comes next.

These adjustments are stored as parameters - numbers that control how the model connects ideas. Modern LLMs have billions or even trillions of them. For comparison, GPT-1 in 2018 had 117 million parameters. GPT-3, released just a few years later, had 175 billion. That’s not just more data - it’s more capacity to hold subtle patterns: how sarcasm works, how legal documents are structured, how poetry rhymes, even how people apologize.

The Transformer: The Engine Behind the Magic

What makes modern LLMs so powerful isn’t just size - it’s architecture. Almost all LLMs today use something called a transformer. Before transformers, AI models processed text one word at a time, like reading a book from left to right. That made it hard to understand relationships between distant parts of a sentence.Transformers changed that. Instead of reading word by word, they look at the whole sentence at once. They ask: "How does each word relate to every other word?" For example, in the sentence "The dog chased the ball because it was excited," the model figures out that "it" refers to "dog," not "ball." It does this using something called self-attention.

Self-attention works like a spotlight. For each word, it assigns a weight to every other word in the sentence - some get high attention ("dog" and "it"), others get low ("the" and "was"). These weights are learned during training. Think of them as internal notes the model writes to itself: "This word matters more than that one." The more layers the model has, the deeper these notes go. Early layers handle grammar. Later layers handle logic, tone, and even implied meaning.

How They "Remember" What They Read

You might wonder: if LLMs don’t store facts like a database, how do they answer questions about history, science, or pop culture? The answer lies in their hidden states - internal representations that change as text flows through the model.Imagine a story: "John met Lisa at the café. She ordered coffee. He remembered her favorite drink." An LLM doesn’t store "Lisa likes coffee" as a fact. Instead, as it reads each word, it updates a set of 12,288 numbers (in GPT-3) that represent the current context. These numbers are like a mental scratchpad. Earlier layers might note: "Lisa is a person." Later layers might link "she" to "Lisa" and "favorite drink" to "coffee." By the end, the model has built a rich internal map - not a database, but a dynamic, evolving understanding.

Researchers have tested this by probing models layer by layer. In one famous experiment, they fed a model a prompt: "The capital of Poland is..." The first 15 layers guessed random words. Then, at layer 16, it started guessing "Poland." At layer 20, it settled on "Warsaw" - and stayed there. This shows that early layers handle surface patterns, while deeper layers build complex, abstract connections.

Context Windows: How Much They Can "See" at Once

Early LLMs could only handle a few hundred words at a time. That meant they couldn’t summarize a 10-page report or remember what you said five messages ago.Today’s models have context windows measured in hundreds of thousands of tokens. Some can process entire novels, long code files, or multi-hour transcripts in one go. This isn’t just a technical upgrade - it changes what’s possible. You can now paste a 50,000-word research paper into a chat and ask, "Summarize the key findings," and the model will do it - because it can see the whole thing.

But there’s a catch: longer context windows require more memory and computing power. That’s why not all LLMs have them. Smaller models, designed for phones or low-power devices, often have context windows under 4,000 tokens. Larger ones, like those used in enterprise tools, can go beyond 100,000.

What Happens After Training? Fine-Tuning and Human Feedback

Training on the internet gives LLMs broad knowledge - but not always useful or safe responses. A model might generate accurate information, but in a rude tone. Or it might hallucinate facts that sound plausible but are wrong.To fix this, companies use two extra steps: fine-tuning and reinforcement learning from human feedback (RLHF). Fine-tuning means training the model on a smaller, curated dataset - like customer service chats or medical Q&A pairs. This helps it adapt to specific tasks.

RLHF takes it further. Humans rank different responses to the same prompt: "Which answer is more helpful?" "Which one is safer?" The model learns from these rankings. It doesn’t memorize answers - it learns to prefer responses that feel more human, accurate, and ethical. This is why modern LLMs don’t say "I don’t know" as often - they’ve been trained to try harder.

Small vs. Large Models: Why Size Matters

Not all LLMs are created equal. Some are huge - running on server farms with hundreds of GPUs. Others are tiny, designed to run on your phone or smart speaker.Large models (7B+ parameters) excel at complex reasoning, creative writing, and multi-step problem solving. They’re used in chatbots, research assistants, and content generators.

Small models (under 1B parameters) are faster, cheaper, and use less power. They’re great for simple tasks: answering FAQs, translating short phrases, or powering voice assistants. They’re not as smart, but they’re smart enough for everyday use.

The trade-off is simple: bigger models = more capability, but higher cost. Smaller models = less capability, but easier to deploy. The right choice depends on what you need - not what’s flashy.

Can They Access Real-Time Information?

LLMs don’t have live internet access by default. They’re trained on data frozen in time - usually up to 2023 or 2024. That means they can’t tell you today’s stock price or the latest sports score.But there’s a workaround: Retrieval-Augmented Generation, or RAG. With RAG, an LLM connects to a live database - like weather services, company documents, or product catalogs. When you ask a question, the system first searches the database, pulls in the latest info, and feeds it into the model’s context window. Then the model generates a response using that fresh data.

This is how tools like Microsoft Copilot or Notion AI give you accurate, up-to-date answers. The model itself didn’t learn the new info - it just used it when needed.

What LLMs Can and Can’t Do

They’re powerful - but not perfect. Here’s what they’re good at:- Writing clear, well-structured text

- Summarizing long documents

- Answering factual questions (if trained on the data)

- Translating languages

- Generating code snippets

- Explaining complex topics in simple terms

Here’s what they’re bad at:

- Knowing what’s true right now (unless connected to live data)

- Understanding emotions or intentions

- Doing math reliably (they guess patterns, not calculations)

- Being creative in the human sense (they remix, they don’t invent)

- Having ethics or morals - they reflect what they were trained on

Think of them as incredibly fast, highly trained parrots - they repeat patterns they’ve seen, but they don’t understand why.

| Component | What It Does | Example |

|---|---|---|

| Parameters | Internal weights that store learned patterns | GPT-3: 175 billion parameters |

| Transformer | Architecture that processes all words at once | Used in GPT, Llama, Claude |

| Self-Attention | Figures out how words relate to each other | Links "she" to "Lisa" in a sentence |

| Context Window | How much text the model can see at once | 32,768 tokens in newer models |

| Tokenization | Breaks text into chunks the model can process | "Unbelievable" → "un", "believe", "able" |

| RLHF | Uses human feedback to improve safety and usefulness | Helps models avoid harmful responses |

Final Thought: They’re Tools, Not Minds

Large language models are one of the most powerful tools we’ve built in decades. But they’re not conscious. They don’t understand. They don’t believe. They just predict.That’s why they’re so useful - and so dangerous. They can write a perfect essay, but they can also write a convincing lie. They can help a student learn, or they can give bad advice that sounds authoritative. The real power isn’t in the model. It’s in how you use it.

Are large language models the same as AI?

No. AI is the broad field of machines doing smart things - like recognizing faces, driving cars, or playing chess. LLMs are a specific type of AI designed for language. Think of AI as the whole toolbox, and LLMs as one very powerful hammer in it.

Do LLMs have memory?

Not like humans. They don’t store memories between conversations. Each new prompt starts fresh. Some apps simulate memory by feeding past messages back into the model - but the model itself doesn’t remember. It’s like reading a new book every time, even if you’ve read it before.

Can LLMs make mistakes?

Yes - and often. They’re not databases. They guess. If the training data has a mistake, they’ll repeat it. If the prompt is unclear, they’ll make up an answer that sounds right. That’s called hallucination. Always fact-check critical info.

Why do some LLMs cost money while others are free?

It’s about scale. Free models are usually smaller, slower, or have limits on usage. Paid models are larger, faster, and can handle more complex tasks. Running a 70-billion-parameter model costs thousands of dollars per day in electricity and hardware. Someone has to pay for it.

Can I run an LLM on my own computer?

Yes - if you have a powerful enough system. Smaller models (under 7 billion parameters) can run on high-end PCs or laptops with a good GPU. But models like GPT-4 or Claude 3 need server-grade hardware. For most people, using them online is easier and cheaper.

Next Steps

If you’re curious about how LLMs are changing real-world work, look into how they’re used in customer support, legal research, or software development. Many professionals now use them as co-pilots - not replacements. The key is learning how to ask better questions. The model is only as good as the prompt you give it.Start experimenting. Ask it to explain something you already know. See how it responds. Then tweak your question. You’ll learn faster than reading any guide.

Nathaniel Petrovick

March 15, 2026 AT 20:01This is actually one of the clearest explanations I’ve read. I’ve been trying to explain LLMs to my coworkers for months and I always end up saying "it’s like a supercharged autocomplete" but this breaks it down way better. The part about self-attention and how it weighs relationships between words? That clicked for me. I used to think they were just memorizing stuff, but now I see it’s more like pattern weaving. Also, the parrot analogy? Spot on. They’re not thinking-they’re riffing on a million songs they’ve heard.

Honey Jonson

March 16, 2026 AT 16:18omg yes this is so true i always thought they were kinda psychic but nope its just math and luck and a ton of data. i use them all the time for drafting emails and they always get the tone right even when i cant explain what i want. its like having a super chill intern who never gets tired but also forgets half the time what you asked for lol

Sally McElroy

March 17, 2026 AT 08:12Let me be clear: this is dangerously misleading. You call them "predictors," but that implies a neutral, mechanical process. It’s not. These models are trained on the entire cesspool of the internet-racist rants, conspiracy theories, clickbait, toxic fandoms, and hate forums. They don’t "guess" words. They absorb bias and replicate it with terrifying precision. And now you’re telling people to "just use them"? No. We need regulation. We need ethics boards. We need to stop pretending this is just "technology" when it’s cultural contamination wrapped in a shiny API.

Destiny Brumbaugh

March 18, 2026 AT 04:08US got the best LLMs because we built them on free speech and innovation not some European nanny state rules. China’s trying to copy us but their models are all censored and robotic. This tech is gonna change the world and we’re leading it. Also I’ve seen these models write better than my college professors and they got PhDs from Harvard. So yeah I’m using it for my homework and I don’t care if you think that’s wrong. Get over it.

Sara Escanciano

March 19, 2026 AT 20:44There’s a reason these models hallucinate. They’re trained on garbage. Wikipedia isn’t perfect. Reddit isn’t a source. News sites are biased. And now we’re letting this thing write medical advice, legal summaries, and school essays? It’s not a tool-it’s a liability. People are trusting this like it’s a priest. It’s not. It’s a statistical echo chamber with a keyboard. If you’re using this for anything important, you’re already failing. And no, "fact-checking" isn’t a fix. You can’t fact-check a lie if you don’t know it’s a lie in the first place.

Elmer Burgos

March 20, 2026 AT 23:12Love how this breaks it down without jargon. I’m a teacher and I’ve started using LLMs to help explain tough topics to my students. They love it. But I always tell them: "This isn’t your brain. It’s a mirror. What you put in, it reflects. What you don’t check, it might lie." I show them how to tweak prompts, compare answers, and spot when it’s just making stuff up. It’s not magic. It’s practice. And honestly? It’s teaching them how to think better-not worse. We just need to teach them how to use it right.