Enterprises aren’t just using one LLM anymore. They’re juggling three kinds: commercial APIs like GPT-5 and Claude 4, open-source models like Llama 4 and Mistral 8x22B, and custom-built models fine-tuned for their own data. And if they’re not managing this mix intentionally, they’re losing money, risking compliance, or delivering poor results. The companies that win in 2026 don’t pick the flashiest model; they build a portfolio. This isn’t about tech hype. It’s about making smart, measurable choices across cost, control, accuracy, and risk.

Why a Single LLM Doesn’t Work Anymore

In 2023, many companies bet everything on one API model. GPT-4 was good enough for chatbots, summaries, and basic Q&A. But as use cases grew, from financial underwriting to medical triage to proprietary R&D, those same models started to crack. Data privacy violations spiked. Costs ballooned. Accuracy dropped when models were asked to understand industry jargon or internal processes. By 2025, 63% of companies with a single-model strategy had failed to hit their ROI targets within 18 months, according to DeepLearning.AI’s analysis.

The truth is simple: one size doesn’t fit all. A customer service bot doesn’t need the same level of control as a model reviewing FDA submissions. A content generator can run on a public API. A risk assessment engine needs to live behind your firewall, trained on your confidential data. That’s why top enterprises now treat LLMs like a stock portfolio, diversifying across types to balance risk, return, and resilience.

The Three Tiers of an LLM Portfolio

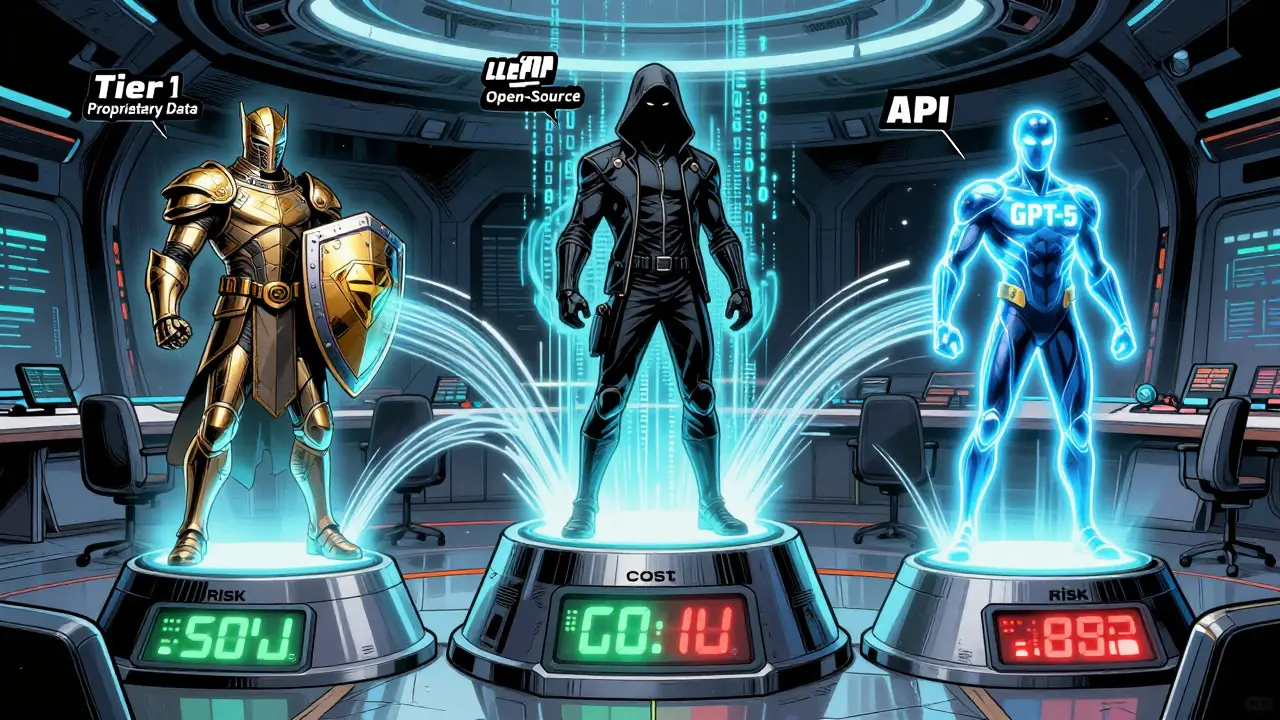

Successful organizations categorize their models into three clear tiers. Each tier has a purpose, a cost structure, and a set of trade-offs.

- Tier 1: Custom Models - Built from scratch or heavily fine-tuned on your proprietary data. Used for mission-critical, high-risk tasks like clinical decision support, legal contract review, or patent analysis. These models deliver 18.7% higher accuracy on domain-specific tasks than generic APIs, according to Stanford’s HAI 2026 report. But they cost $417,000 on average to build and take 6-9 months to deploy. 82% of enterprises use them for proprietary research, but they’re rare outside regulated industries.

- Tier 2: Open-Source Models - Models like Llama 4 70B, Falcon 2, and Command R+ that you host yourself. They give you full control over data and updates. 94% of enterprises using them can enforce strict data governance. They cut operational costs by 23.4% over 12 months compared to APIs. But they demand engineering muscle: 40% more maintenance effort than API models. They dominate in healthcare (68% adoption) and financial underwriting (54% adoption).

- Tier 3: API-Based Models - GPT-5, Gemini 3, Claude 4. These are plug-and-play. Easy to integrate, fast to deploy, and great for general tasks. They’re used in 73% of customer service chatbots. But they come with big downsides: 78% of companies report compliance issues with sensitive data, and you can’t customize more than 37% of business logic. They’re the cheapest to start, but the most expensive to scale.

Costs, Performance, and Hidden Trade-Offs

Let’s break down the numbers. A company running a customer service bot with GPT-5 at $0.015 per 1,000 tokens might spend $42,000 a month. Switch to a hybrid: 60% Llama 4 13B hosted on Azure, 40% GPT-5 for edge cases. Result? Costs drop to $27,000. Accuracy improves by 9.3 points on domain-specific questions. That’s not luck. That’s portfolio management.

But here’s what no one talks about: hidden costs. Open-source models look cheaper on paper. But factor in the engineering time to fine-tune, monitor, patch, and secure them, and the real cost climbs. A Llama 4 70B deployment on enterprise infrastructure costs $28,500/month. The GPT-5 API equivalent? $18,200. That $10,300 gap is accounted for by your ML engineers’ salaries. If you don’t have them, you’re just delaying a bigger problem.

API models? They’re easy until you get audited. The EU AI Act and US Executive Order 14110 now require you to document every model you use. If your chatbot uses an API and handles personal health data? You’re non-compliant unless you’ve mapped every prompt, every data source, every output. Most companies didn’t plan for this.
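The cost figures above can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in the text. The one added assumption, flagged in the comments, is that the 60/40 split is measured by token volume and that the API share is billed at the same $0.015 rate.

```python
# Figures quoted in the article.
API_PRICE_PER_1K_TOKENS = 0.015   # USD, GPT-5 rate in the example
API_ONLY_MONTHLY_COST = 42_000    # USD, API-only customer service bot
HYBRID_MONTHLY_COST = 27_000      # USD, 60% Llama 4 13B + 40% GPT-5
SELF_HOSTED_70B_MONTHLY = 28_500  # USD, Llama 4 70B on enterprise infrastructure
API_EQUIVALENT_MONTHLY = 18_200   # USD, GPT-5 API equivalent

# Token volume implied by the API-only bill.
monthly_tokens = API_ONLY_MONTHLY_COST / API_PRICE_PER_1K_TOKENS * 1_000

# Savings from moving to the hybrid setup.
monthly_savings = API_ONLY_MONTHLY_COST - HYBRID_MONTHLY_COST
savings_pct = monthly_savings / API_ONLY_MONTHLY_COST * 100

# ASSUMPTION (not stated in the article): the 40% API share is 40% of tokens
# at the same per-token price, so the rest of the hybrid bill is self-hosting.
api_share_cost = 0.40 * API_ONLY_MONTHLY_COST
self_hosted_share_cost = HYBRID_MONTHLY_COST - api_share_cost

# Hidden-cost gap between self-hosting and the API equivalent.
self_hosting_gap = SELF_HOSTED_70B_MONTHLY - API_EQUIVALENT_MONTHLY

print(f"Implied volume: {monthly_tokens:,.0f} tokens/month")
print(f"Hybrid savings: ${monthly_savings:,} ({savings_pct:.1f}%)")
print(f"API share of hybrid bill: ${api_share_cost:,.0f}")
print(f"Self-hosted share of hybrid bill: ${self_hosted_share_cost:,.0f}")
print(f"Self-hosting gap: ${self_hosting_gap:,}/month")
```

Swapping in your own bill and split shows quickly whether a hybrid setup is likely to pay for the engineering time it needs.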

When to Use Each Model Type

Don’t guess. Match the model to the task.

- Use API models for: General content generation, multilingual support, simple Q&A, and low-risk customer interactions. If the output doesn’t impact compliance, safety, or revenue, an API is fine. GPT-5 scores 82.1% on MMLU-Pro benchmarks, a good fit for broad knowledge.

- Use open-source models for: Healthcare triage, financial risk scoring, internal knowledge bases, and any task where data privacy is non-negotiable. If you’re processing patient records or loan applications, you need full control. Mistral 8x22B and Falcon 2 are leading here.

- Use custom models for: Proprietary research, legal document analysis, regulated manufacturing compliance, and anything that’s unique to your business. A pharma company fine-tuning a model on 20 years of clinical trial notes? That’s a custom model job. Accuracy gains here are real: 12.3% above baseline on industry benchmarks.
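The matching rules above can be written down as a small decision function so the choice is explicit rather than ad hoc. This is a minimal sketch: `Task`, `Tier`, and `choose_tier` are hypothetical names, and reducing the decision to two yes/no questions is a simplification of the guidance in the list.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    CUSTOM = "custom"
    OPEN_SOURCE = "open-source"
    API = "api"


@dataclass(frozen=True)
class Task:
    name: str
    handles_sensitive_data: bool  # e.g. patient records, loan applications
    business_specific: bool       # e.g. proprietary research, legal analysis


def choose_tier(task: Task) -> Tier:
    """Pick a tier using the article's rules of thumb (simplified)."""
    if task.business_specific:
        # Work that is unique to the business goes to a custom model.
        return Tier.CUSTOM
    if task.handles_sensitive_data:
        # Privacy is non-negotiable, so keep the model self-hosted.
        return Tier.OPEN_SOURCE
    # Low-risk, general tasks can run on a commercial API.
    return Tier.API


if __name__ == "__main__":
    examples = [
        Task("marketing copy", handles_sensitive_data=False, business_specific=False),
        Task("patient triage", handles_sensitive_data=True, business_specific=False),
        Task("clinical trial notes", handles_sensitive_data=True, business_specific=True),
    ]
    for task in examples:
        print(f"{task.name}: {choose_tier(task).value}")
```

Checking `business_specific` first means a task that is both sensitive and proprietary lands in the custom tier, which matches the pharma example in the list.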

How to Build a Real LLM Portfolio

You don’t need a team of 50. But you do need a process. Here’s how the top 22% of enterprises (those with “advanced” portfolio management, per Forrester) do it:

- Assess high-value use cases - Focus on tasks that take up 15% or more of departmental time. Customer service, invoice processing, internal knowledge lookup. Don’t try to automate everything.

- Pilot with clean data - Collect 300-500 real examples of inputs and ideal outputs. Poor data kills even the best model. Use “data clean rooms” to merge consented sources without exposing raw PII.

- Evaluate beyond accuracy - Track latency (target under 2.3 seconds), cost per 1,000 tokens ($0.0085 for Tier 3, $0.018 for Tier 1), and compliance risk (keep it under 15/100). Use custom evaluators, not just benchmarks.

- Integrate with RAG - 92% of successful deployments use Retrieval-Augmented Generation. It keeps models grounded in your latest documents, reducing hallucinations and improving accuracy without retraining.

- Deploy with governance - Create a model registry. Log every model version, data source, and performance metric. Use tools like LangSmith or Maxim AI’s Portfolio Optimizer to track drift and cost over time.

- Review and retire - Models age. A model that worked in Q1 might be obsolete by Q3. Set up quarterly reviews. Retire underperformers. Don’t let your portfolio become a graveyard of dead models.
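The "evaluate beyond accuracy" and "review and retire" steps can be combined into a simple quarterly gate. The sketch below takes the latency target (under 2.3 seconds) and compliance-risk ceiling (under 15/100) from the list above. The `min_accuracy` default of 0.85, the model names, and the function names are illustrative assumptions, not figures from the article.

```python
from dataclasses import dataclass

MAX_LATENCY_SECONDS = 2.3   # target from the article
MAX_COMPLIANCE_RISK = 15    # out of 100, ceiling from the article


@dataclass
class EvalResult:
    model: str
    accuracy: float            # fraction correct on your pilot examples
    latency_seconds: float
    cost_per_1k_tokens: float  # tracked for reporting, not gated here
    compliance_risk: int       # 0-100 score from your own evaluator


def failed_checks(result: EvalResult, min_accuracy: float) -> list[str]:
    """Return the names of the checks this model fails."""
    failures = []
    if result.accuracy < min_accuracy:
        failures.append("accuracy")
    if result.latency_seconds >= MAX_LATENCY_SECONDS:
        failures.append("latency")
    if result.compliance_risk >= MAX_COMPLIANCE_RISK:
        failures.append("compliance")
    return failures


def quarterly_review(results, min_accuracy=0.85):
    """Split models into those to keep and those to retire, with reasons.

    min_accuracy=0.85 is a hypothetical threshold; set your own.
    """
    keep, retire = [], {}
    for result in results:
        failures = failed_checks(result, min_accuracy)
        if failures:
            retire[result.model] = failures
        else:
            keep.append(result.model)
    return keep, retire


if __name__ == "__main__":
    results = [
        EvalResult("support-bot-v3", 0.91, 1.8, 0.0085, 9),
        EvalResult("triage-v1", 0.88, 3.1, 0.018, 22),
    ]
    keep, retire = quarterly_review(results)
    print("keep:", keep)
    print("retire:", retire)
```

Recording the reason for each retirement, and not just the decision, gives the model registry something useful to log.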

Tools and Skills You Actually Need

You can’t build this with just prompt engineers. You need:

- ML engineers - 4+ years experience. They handle model hosting, fine-tuning, and infrastructure.

- Prompt engineers - Not just writers. They design inputs that trigger the right model in the right context.

- Domain specialists - A compliance officer for healthcare, a financial analyst for underwriting. They define what “good” looks like.

The Risks Nobody Talks About

Yes, portfolio management saves money. But it also creates new problems.

- Model fragmentation - 79% of CIOs say they’re struggling with too many models, too many versions, no clear ownership. Solution: Create a Center of Excellence. Centralize knowledge, templates, and best practices across teams.

- Evaluation complexity - 63% of technical leads say measuring performance across model types is messy. Standardize your metrics. Use the same benchmarks (MMLU-Pro, HumanEval) for all models. Otherwise, you’re comparing apples to oranges.

- Technical debt from open-source - Emily Bender’s warning is real. 38% of enterprises had to rebuild open-source deployments because they skipped proper validation. Don’t assume “open-source = safe.” Test it like you’d test a custom model.

What’s Next in 2026

The field is moving fast. By Q3 2026, we’ll see standardized model interchange formats, so you can swap a Llama 4 model for a Falcon 2 without rewriting your entire pipeline. AI-powered assistants will auto-suggest model swaps based on cost, latency, and accuracy. Security frameworks will finally unify policies across API, open-source, and custom models. Gartner says LLM portfolio management is leaving the “Peak of Inflated Expectations” and heading toward the “Plateau of Productivity” by late 2026. That means it’s no longer optional. If you’re still using one model for everything, you’re not being innovative. You’re being risky.

Frequently Asked Questions

What’s the biggest mistake companies make with LLM portfolios?

Starting with one model and scaling it to everything. Companies assume GPT-5 or Llama 4 can handle customer service, underwriting, and legal analysis. That leads to compliance violations, cost overruns, and poor accuracy. The fix is to match the model to the use case, not the other way around.

Can I use open-source models without a big engineering team?

Not reliably. Open-source models need hosting, monitoring, patching, and fine-tuning. If you don’t have ML engineers, you’ll end up with broken deployments, outdated versions, and security gaps. Start with APIs for low-risk tasks. Use open-source only when you have the team to support it.

How do I know if a custom model is worth the cost?

Ask: Does this task generate high value or carry high risk? If it’s legal, medical, financial, or proprietary R&D, and it’s done repeatedly, then yes. A custom model that improves accuracy by 12.3% on contract review can save millions in litigation. Track ROI over 12 months, not just development cost.

Is there a tool that automatically picks the best model for each task?

Yes. Tools like LangChain’s Model Router 2.0 and Maxim AI’s Portfolio Optimizer now do this. They route prompts based on cost, latency, accuracy, and compliance rules you set. But they need good data and clear policies to work. You still have to define the rules.

How do I comply with the EU AI Act and US Executive Order 14110?

Document everything. Keep a registry of every model you use, what data it accesses, its accuracy score, and how often it’s evaluated. Use tools with built-in audit trails. If you’re using an API for sensitive data, you need to prove you’ve masked PII. Most companies fail here because they didn’t plan for documentation from day one.
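A registry like the one described can start as a plain data structure exported to JSON for auditors. The sketch below is one possible shape, not a format required by any regulation. The field names, the 90-day review interval (chosen to mirror quarterly reviews), and the example entry are all assumptions.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date, timedelta


@dataclass
class RegistryEntry:
    model: str
    version: str
    tier: str                       # "api", "open-source", or "custom"
    data_sources: list[str]         # what data the model accesses
    accuracy: float                 # latest evaluation score
    last_evaluated: date
    review_interval_days: int = 90  # assumed quarterly cadence
    pii_masked: bool = False        # whether prompts are masked before leaving your network

    def is_overdue(self, today: date) -> bool:
        """True if the model has gone longer than its interval without evaluation."""
        return today - self.last_evaluated > timedelta(days=self.review_interval_days)


def to_audit_json(entries) -> str:
    """Serialize the registry; dates are written as ISO strings."""
    return json.dumps([asdict(entry) for entry in entries], default=str, indent=2)


if __name__ == "__main__":
    # Hypothetical entry for illustration only.
    entry = RegistryEntry(
        model="support-bot",
        version="1.2.0",
        tier="api",
        data_sources=["help-center articles"],
        accuracy=0.91,
        last_evaluated=date(2026, 1, 15),
        pii_masked=True,
    )
    print(to_audit_json([entry]))
    print("overdue:", entry.is_overdue(date(2026, 6, 1)))
```

Starting with a flat record like this makes it easier to move to a dedicated tool later, since the fields you already track become the import schema.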