Have you ever asked a large language model to format data or solve a complex logic puzzle, only to get a response that looks nothing like what you needed? You aren't alone. Zero-shot prompting, which means asking the model to do something without any prior examples, is great for casual chat, but it often falls short when precision matters. This is where Few-Shot Prompting comes in. It is a technique that provides 2-8 input-output examples before the actual task to demonstrate desired behavior patterns for large language models. By showing the model exactly what you want, you can boost accuracy by 15-40% compared to zero-shot methods, all without the cost of fine-tuning.

Why Few-Shot Prompting Works Better Than Zero-Shot

To understand why this strategy works, you need to look at how models like GPT-4 and Claude are built. These models are fundamentally pattern learners. They have ingested vast amounts of text, but they don't inherently know your specific business rules or formatting quirks. When you use zero-shot prompting, you are relying on the model's general training data, which might not align with your niche needs.

Few-shot prompting leverages In-Context Learning. Instead of modifying the model's parameters (which requires expensive compute resources), you temporarily adapt its behavior within the context window. You provide examples, and the model recognizes the pattern in those examples. It then applies similar reasoning to generate responses for new inputs. Think of it like teaching a new employee: instead of just handing them a manual (zero-shot), you show them three completed reports and say, "Do it like this." The result is significantly higher consistency and relevance.

Selecting the Right Examples: Quality Over Quantity

The biggest mistake people make with few-shot prompting is throwing too many examples at the model. More isn't always better. In fact, recent research has identified a phenomenon called the Few-Shot Dilemma, where excessive examples lead to diminished performance. This is known as over-prompting. If you clutter the context window with irrelevant or redundant examples, the model gets confused about which pattern to follow.

So, how do you choose the right ones? Start with representative examples from your knowledge base. Your examples should cover different scenarios and edge cases. For instance, if you are building a customer support bot, include examples of angry customers, happy customers, and technical queries. Avoid biased or misleading examples that could confuse the model's pattern recognition. A good rule of thumb is to start with 3-5 diverse examples. If performance dips, reduce the number. If it improves, keep adding until you hit diminishing returns.
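The "add until returns diminish" rule can be sketched as a small search loop. This is only an illustration: `pick_example_count`, the `min_gain` threshold, and the scores in `fake_scores` are hypothetical placeholders, and in practice `score_for_k` would run your prompt with `k` examples against a held-out test set.

```python
from typing import Callable, Sequence


def pick_example_count(
    score_for_k: Callable[[int], float],
    candidates: Sequence[int] = (2, 3, 4, 5, 6, 7, 8),
    min_gain: float = 0.01,
) -> int:
    """Return the last example count that still gave a meaningful gain."""
    best_k = candidates[0]
    best_score = score_for_k(best_k)
    for k in candidates[1:]:
        score = score_for_k(k)
        if score - best_score < min_gain:
            break  # diminishing returns or a dip: stop adding examples
        best_k, best_score = k, score
    return best_k


# Made-up accuracy numbers, used only to exercise the loop.
fake_scores = {2: 0.70, 3: 0.78, 4: 0.83, 5: 0.835, 6: 0.80, 7: 0.79, 8: 0.75}
print(pick_example_count(fake_scores.get))  # prints 4
```

With these placeholder numbers the loop stops at four examples, because the fifth adds less than the assumed one-point threshold.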

Ordering also matters. Arrange your examples from simple to complex. This helps the model understand basic patterns first before tackling harder variations. This progression mimics how humans learn and helps the model generalize better rather than just memorizing the last example it saw.
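One rough way to apply this ordering automatically is to sort examples by a complexity proxy. The sketch below assumes that input word count is a reasonable stand-in for difficulty, which will not hold for every task; the helper name and sample data are illustrative.

```python
def order_simple_to_complex(examples):
    """Sort (input, output) pairs by a rough complexity proxy: input word count."""
    return sorted(examples, key=lambda pair: len(pair[0].split()))


examples = [
    ("My order arrived damaged and the replacement is late, what now?", "complaint"),
    ("Thanks!", "praise"),
    ("How do I reset my password?", "question"),
]
ordered = order_simple_to_complex(examples)
for text, label in ordered:
    print(f"{label}: {text}")
```

If you have a better difficulty signal, such as the number of reasoning steps or a manual rating, swap it in as the sort key.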

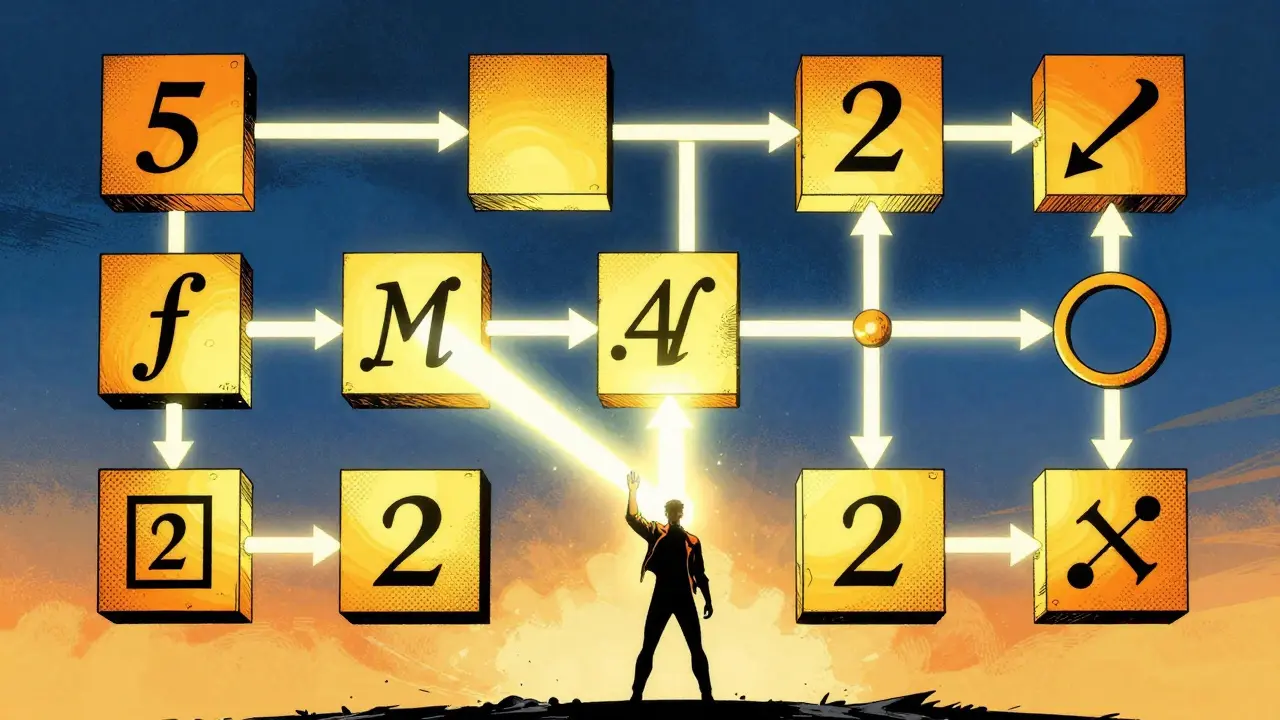

Combining Few-Shot with Chain-of-Thought

If your task involves complex reasoning, such as math problems or logical deduction, standard few-shot prompting might still fall short. This is where you should combine it with Chain-of-Thought Prompting. Instead of just showing the input and the final answer, show the explicit reasoning steps in between.

For example, if you are asking the model to calculate a discount, don't just show "Price: $100, Discount: 10%, Final: $90." Show "Price: $100, Discount: 10%, Calculation: 100 * 0.1 = 10, Final: 100 - 10 = $90." By demonstrating the logical progression, you guide the model to think through the problem step-by-step. This combination excels at tasks where the path to the answer is as important as the answer itself.
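As a minimal sketch of that discount example, the helper below formats each demonstration with its intermediate calculation and leaves the final query open at the "Calculation:" step so the model continues the same pattern. The function names and the extra price and discount pairs are illustrative assumptions.

```python
def discount_example(price: float, discount_pct: float) -> str:
    """Format one worked example that shows the intermediate calculation."""
    amount_off = price * discount_pct / 100
    final = price - amount_off
    return (
        f"Price: ${price:.0f}, Discount: {discount_pct:.0f}%\n"
        f"Calculation: {price:.0f} * {discount_pct / 100:.2f} = {amount_off:.2f}\n"
        f"Final: {price:.0f} - {amount_off:.2f} = ${final:.2f}"
    )


def build_cot_prompt(worked, new_price, new_discount_pct):
    """Join the worked examples, then end on an open reasoning step."""
    shots = "\n\n".join(discount_example(p, d) for p, d in worked)
    query = f"Price: ${new_price:.0f}, Discount: {new_discount_pct:.0f}%\nCalculation:"
    return f"{shots}\n\n{query}"


prompt = build_cot_prompt([(100, 10), (80, 25), (250, 15)], 60, 20)
print(prompt)
```

Ending the prompt on "Calculation:" rather than "Final:" nudges the model to write out its steps before committing to an answer.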

| Strategy | Best Use Case | Data Requirement | Cost |
|---|---|---|---|
| Zero-Shot | Simple tasks aligned with training data | None | Lowest |
| Few-Shot | Specific formatting, moderate complexity | 2-8 examples | Low |
| Fine-Tuning | High-volume single tasks, max accuracy | Hundreds to thousands of labeled examples | High |
| RAG | Dynamic information, large knowledge bases | External database | Medium |

Avoiding the Over-Prompting Trap

You might wonder why adding more examples hurts performance. A likely explanation lies in attention mechanisms. Large language models allocate attention across the whole prompt, so when you add too many examples, especially noisy or less relevant ones, the model may latch onto the wrong patterns. Researchers have found that selection methods like TF-IDF (Term Frequency-Inverse Document Frequency) outperform random sampling. TF-IDF gives more weight to terms that are frequent in one text but rare across the rest of the pool, so ranking candidate examples by their TF-IDF similarity to the query surfaces the ones that share its distinctive vocabulary.
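To make the selection step concrete, here is a small pure-Python sketch that ranks a pool of candidate examples by TF-IDF cosine similarity to the incoming query. The function names, the smoothed IDF formula, and the sample pool are assumptions made for illustration; in a real project you would more likely reach for an existing implementation such as scikit-learn's `TfidfVectorizer`.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def tfidf_vectors(docs):
    """Return one {term: weight} dict per document, using a smoothed IDF."""
    token_lists = [tokenize(d) for d in docs]
    doc_freq = Counter(term for tokens in token_lists for term in set(tokens))
    n = len(docs)
    vectors = []
    for tokens in token_lists:
        counts = Counter(tokens)
        vectors.append({
            t: (c / len(tokens)) * (math.log((1 + n) / (1 + doc_freq[t])) + 1)
            for t, c in counts.items()
        })
    return vectors


def cosine(a, b):
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_examples(query, pool, k=3):
    """Pick the k (input, output) pairs whose inputs are most similar to the query."""
    vectors = tfidf_vectors([inp for inp, _ in pool] + [query])
    query_vec = vectors[-1]
    scored = sorted(
        zip(pool, vectors[:-1]),
        key=lambda pv: cosine(query_vec, pv[1]),
        reverse=True,
    )
    return [pair for pair, _ in scored[:k]]


pool = [
    ("The app crashes when I upload a photo", "bug"),
    ("Please add dark mode to the app", "feature_request"),
    ("I was charged twice for my subscription", "billing"),
    ("Upload fails with a timeout error", "bug"),
]
picked = select_examples("Photo upload crashes with an error", pool, k=2)
for text, label in picked:
    print(f"{label}: {text}")
```

For this query, only the two upload-related examples share any vocabulary with it, so they are the ones selected and the unrelated billing and feature-request examples stay out of the prompt.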

Another approach is stratification. Ensure your examples represent small classes adequately. If you are classifying functional vs. non-functional requirements, make sure your few-shot examples balance both types. This prevents the model from biasing toward the majority class. Testing across different models is crucial here. GPT-4o, DeepSeek-V3, and LLaMA-3.1 may peak at different numbers of examples. Empirical testing with your specific use case is the only way to find the sweet spot.
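A simple way to stratify is to cap how many examples each class contributes and then interleave the classes. The sketch below assumes a pool of `(text, label)` pairs; the helper name, the `per_class` cap, and the sample requirements are illustrative.

```python
from collections import defaultdict
from itertools import zip_longest


def stratified_examples(pool, per_class=2):
    """Take up to per_class examples from each label, then interleave the labels."""
    by_label = defaultdict(list)
    for text, label in pool:
        if len(by_label[label]) < per_class:
            by_label[label].append((text, label))
    interleaved = []
    for group in zip_longest(*by_label.values()):
        interleaved.extend(pair for pair in group if pair is not None)
    return interleaved


# The pool is skewed toward functional requirements on purpose.
pool = [
    ("The system shall let users reset their password.", "functional"),
    ("The system shall export reports as CSV.", "functional"),
    ("The system shall send an email receipt after checkout.", "functional"),
    ("Pages shall load in under two seconds.", "non-functional"),
    ("The system shall log every failed login.", "functional"),
    ("The service shall be available 99.9% of the time.", "non-functional"),
]
balanced = stratified_examples(pool, per_class=2)
for text, label in balanced:
    print(f"{label}: {text}")
```

Even though four of the six candidates are functional requirements, the resulting prompt carries two of each class, alternating so that neither label dominates the end of the example list.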

When to Choose Few-Shot Over Fine-Tuning

Few-shot prompting bridges the gap between the limitations of zero-shot methods and the high cost of fine-tuned models. You should choose few-shot prompting when you have limited annotated data, need rapid iteration, or require task-specific formatting. It avoids the need for extensive training infrastructure. However, if you have hundreds of labeled examples and need maximum accuracy for a high-volume task, fine-tuning might be more efficient in the long run. Similarly, if your task requires up-to-date external information, Retrieval-Augmented Generation (RAG) is the better choice. Few-shot is ideal for leveraging existing model capabilities without parameter modification.

Practical Steps to Implement Few-Shot Prompting

Ready to try it? Here is a simple workflow to get started:

- Define the Task: Clearly state what you want the model to do.

- Gather Examples: Collect 3-5 high-quality input-output pairs from your domain.

- Structure the Prompt: Place the examples before the actual query. Use clear separators like "---" or "Example:" to distinguish them.

- Add Instructions: Include a brief instruction after the examples, such as "Now, perform the same task for the following input:"

- Test and Iterate: Run the prompt on a range of new inputs. Check for consistency and accuracy.

- Refine: Adjust the examples based on errors. Remove confusing examples and add ones that address edge cases.
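The structuring steps above can be sketched as a small prompt builder. This is one possible layout, not a required format: the function name, the "Input:"/"Output:" labels, and the sample task are assumptions, and sending the prompt to a model is left out because it depends on your provider's API.

```python
def build_few_shot_prompt(task, examples, query, separator="---"):
    """Assemble task description, separated examples, closing instruction, and query."""
    blocks = [task]
    for i, (inp, out) in enumerate(examples, start=1):
        blocks.append(f"Example {i}:\nInput: {inp}\nOutput: {out}")
    blocks.append(
        "Now, perform the same task for the following input:\n"
        f"Input: {query}\nOutput:"
    )
    return f"\n{separator}\n".join(blocks)


task = "Classify each support message as complaint, praise, or question."
examples = [
    ("Thanks, the new update is great!", "praise"),
    ("How do I reset my password?", "question"),
    ("My order arrived damaged and nobody has replied.", "complaint"),
]
prompt = build_few_shot_prompt(task, examples, "Where can I download my invoice?")
print(prompt)
```

Keeping the builder as a plain function makes the test-and-refine steps cheap: swap examples in and out of the list, rebuild the prompt, and rerun your checks.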

By following these steps, you can harness the power of in-context learning to achieve professional-grade results from off-the-shelf models. Remember, the goal is not to overwhelm the model with data, but to guide it with clarity.

How many examples should I use in few-shot prompting?

Start with 3-5 diverse examples. Research shows that performance often peaks with a small number of well-chosen examples. Adding too many can lead to over-prompting and reduced accuracy. Test incrementally to find the optimal number for your specific task and model.

What is the difference between few-shot prompting and fine-tuning?

Few-shot prompting uses examples within the prompt to guide the model's immediate response without changing its underlying parameters. Fine-tuning involves retraining the model on a dataset to permanently adjust its weights. Few-shot is faster and cheaper, while fine-tuning offers higher accuracy for specialized, high-volume tasks.

Can I use few-shot prompting with any LLM?

Yes, most modern large language models like GPT-4, Claude, and LLaMA support in-context learning via few-shot prompting. However, the optimal number of examples and the model's sensitivity to over-prompting may vary between architectures.

What is the "Few-Shot Dilemma"?

The Few-Shot Dilemma refers to the phenomenon where adding too many examples to a prompt causes model performance to decline. This happens because excessive or noisy examples can confuse the model's attention mechanisms, leading it to focus on irrelevant patterns.

Should I order my examples in a specific way?

Yes, ordering matters. Arranging examples from simple to complex helps the model understand basic patterns before handling edge cases. This structure improves generalization and reduces the likelihood of the model getting stuck on overly complex initial examples.