Have you ever asked an AI to write a short summary and received a three-page essay instead? Or requested a list of five items and got a paragraph of text? It’s frustrating. You’re not talking to a broken machine; you’re talking to a base model that hasn’t been taught how to listen.

This is where Instruction Tuning comes in. It is the process of teaching pre-trained Large Language Models (LLMs) to follow explicit directions rather than just predicting the next word. Think of it as the difference between a raw dictionary and a helpful librarian. The dictionary has all the words, but the librarian knows how to answer your specific questions. In 2026, instruction tuning isn't just a nice-to-have feature; it's the standard for building usable, reliable AI assistants.

The Core Problem: Why Base Models Fail at Tasks

Most people assume that because an LLM can write poetry or code, it can do anything you ask. That’s a common misconception. Base models are trained on massive amounts of internet text using "next-token prediction." Their goal is simply to continue the pattern they see. They don’t inherently understand commands like "Summarize this" or "List these steps."

When you give a base model a complex prompt, it often ignores constraints. It might hallucinate facts, ignore formatting rules, or drift off-topic. This happens because there is a significant semantic gap between user intent and model response. Research from Stanford University indicates that without instruction tuning, this gap can cause 40-60% of interactions to feel unintuitive or unhelpful for non-technical users.

Instruction tuning bridges this gap. It transforms the model from a passive text generator into an active problem solver. By training on pairs of instructions and correct outputs, the model learns to map natural language commands to specific actions. This doesn’t change what the model *knows*; it changes how it *behaves*.

How Instruction Tuning Works: The Three Phases

You don’t need a supercomputer to understand the mechanics, but you do need to respect the process. Instruction tuning follows a structured workflow with three distinct phases:

- Data Collection: This is the foundation. You curate high-quality instruction-output pairs. Each entry consists of a clear natural language instruction (e.g., "Extract the date from this email") and its corresponding accurate output. Quality matters more than quantity here. A dataset of 1,000 carefully curated examples often outperforms a noisy dataset of 50,000.

- Model Fine-Tuning: The pre-trained LLM undergoes supervised learning on these datasets. The model adjusts its internal parameters to better align instructions with appropriate responses. This is where the "listening" skill is built.

- Evaluation: You test the model against validation sets to assess its instruction-following capabilities. If it fails to follow specific constraints, you iterate. This phase ensures the model generalizes well to new, unseen instructions.
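To make the first and third phases concrete, here is a minimal sketch of how instruction-output pairs might be turned into training examples and split for evaluation. The prompt template, the `InstructionPair` class, and the helper names are illustrative assumptions, not a format the article or any particular library prescribes.

```python
from dataclasses import dataclass

# Hypothetical prompt template (an assumption for illustration);
# real projects use whatever format their base model expects.
PROMPT_TEMPLATE = "### Instruction:\n{instruction}\n\n### Response:\n"


@dataclass
class InstructionPair:
    instruction: str
    output: str


def to_training_example(pair: InstructionPair) -> dict:
    """Turn one pair into the prompt text plus the target completion."""
    prompt = PROMPT_TEMPLATE.format(instruction=pair.instruction.strip())
    return {"prompt": prompt, "completion": pair.output.strip()}


def train_validation_split(examples: list, validation_fraction: float = 0.2):
    """Hold out the tail of the list for the evaluation phase."""
    holdout = max(1, round(len(examples) * validation_fraction))
    cut = len(examples) - holdout
    return examples[:cut], examples[cut:]


pairs = [
    InstructionPair("Extract the date from this email: 'See you on 3 May.'", "3 May"),
    InstructionPair("Summarize: 'The meeting moved to Friday.'", "Meeting is now Friday."),
    InstructionPair("List two primary colors.", "- Red\n- Blue"),
    InstructionPair("Translate 'hello' to French.", "Bonjour"),
    InstructionPair("Rewrite politely: 'Send it now.'", "Could you send it soon, please?"),
]

examples = [to_training_example(p) for p in pairs]
train, validation = train_validation_split(examples)
print(len(train), "training examples,", len(validation), "validation example(s)")
```

In a real pipeline the split would usually be shuffled and stratified by task type so the validation set contains instructions unlike those seen in training.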

A critical insight here is that instruction tuning emphasizes task generalization. Unlike traditional fine-tuning, which specializes a model for one narrow domain (like legal document review), instruction tuning teaches the model to handle diverse, potentially novel tasks. This makes it adaptable to open-ended prompts, which is exactly what users expect from a chatbot or assistant.

Efficiency Breakthroughs: Making Tuning Accessible

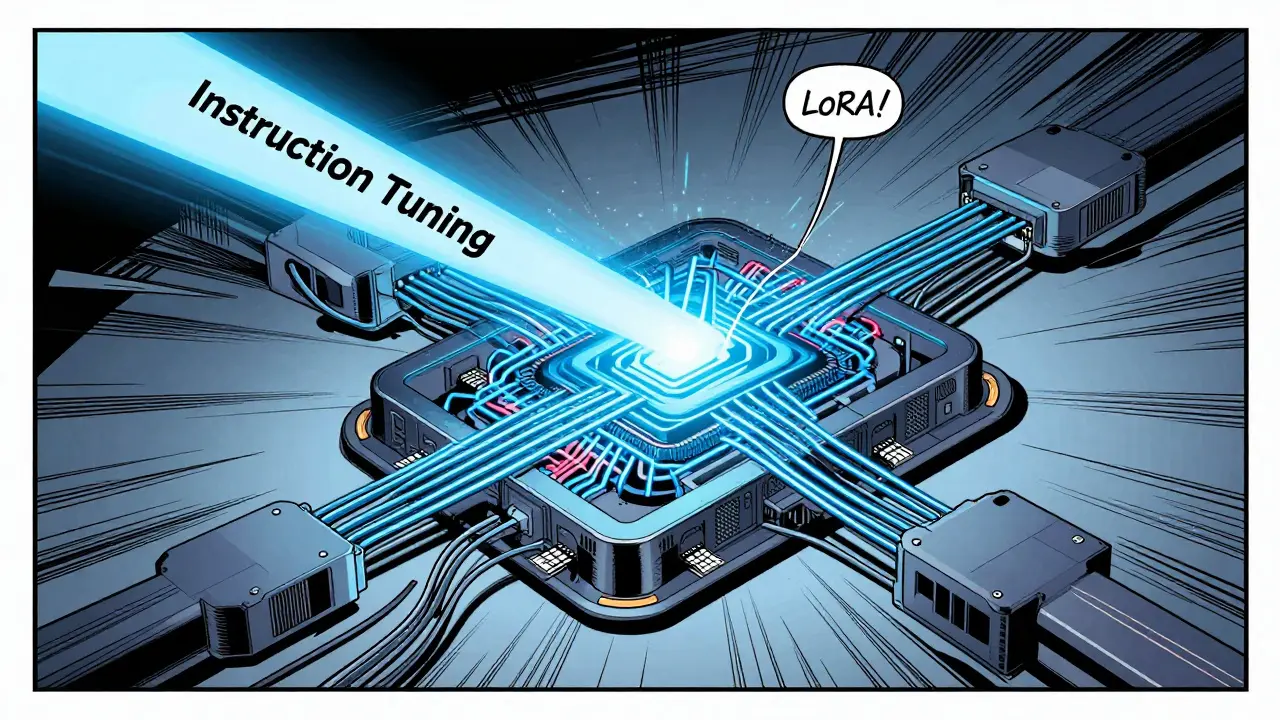

In the early days of LLMs, fine-tuning required massive clusters of GPUs and weeks of compute time. That barrier is gone. Today, efficiency strategies have democratized instruction tuning, making it accessible to smaller teams and even individual developers.

The biggest game-changer is Low-Rank Adaptation (LoRA). Instead of updating every parameter in a model, which requires 80+ GB of GPU memory, LoRA freezes the base model and trains small adapter modules. These adapters add only 0.1-1% additional parameters while maintaining nearly identical performance. With LoRA, you can perform instruction tuning on a single high-end consumer GPU with just 24-32 GB of memory.
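A quick back-of-the-envelope sketch shows where the small parameter overhead comes from. It assumes a single square weight matrix of width 4096 and an adapter rank of 8 (both illustrative values, not figures from any specific model), and uses the standard LoRA formulation in which the frozen weight `W` is augmented by a scaled low-rank product `B @ A`.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector, in plain Python."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """Compute W x + (alpha / rank) * B (A x); W stays frozen, only A and B train."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


def lora_parameter_count(d_in, d_out, rank):
    """A is rank x d_in and B is d_out x rank."""
    return rank * d_in + d_out * rank


# Assumed sizes for illustration only.
d_model, rank = 4096, 8
full = d_model * d_model
adapter = lora_parameter_count(d_model, d_model, rank)
fraction = adapter / full
print(f"adapter adds {adapter:,} parameters ({fraction:.2%} of {full:,})")

# Tiny numeric check: with B initialised to zero, the adapter changes nothing.
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
A = [[0.5, 0.5, 0.5]]
x = [1.0, 2.0, 3.0]
untrained = lora_forward(W, A, [[0.0], [0.0]], x, alpha=2, rank=1)
trained = lora_forward(W, A, [[1.0], [0.0]], x, alpha=2, rank=1)
```

Because `B` starts at zero, training begins from exactly the base model's behavior, and only the two small matrices need gradients and optimizer state.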

Other efficiency techniques include:

- Data Filtering: Algorithms select the highest-quality examples from larger datasets, reducing noise and training time.

- Response Rewriting: Techniques like Self-Distillation Fine-Tuning (SDFT) rewrite training responses to better align with the model’s pre-trained distribution, reducing catastrophic forgetting by approximately 37%.

- Automated Data Generation: LLMs generate additional instruction-output pairs from human-written seed examples, cutting dataset creation costs by over 60%.
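As a rough illustration of the data filtering idea, the sketch below applies a few simple heuristics: drop empty instructions, drop very short outputs, and remove near-duplicate instructions. These rules and the `min_output_words` threshold are assumptions chosen for the example; published filtering methods typically use learned quality scores rather than hand-written rules.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so near-identical instructions match."""
    return " ".join(text.lower().split())


def filter_pairs(pairs: list, min_output_words: int = 3) -> list:
    """Keep pairs with a non-empty instruction, a long-enough output, and no duplicate."""
    seen = set()
    kept = []
    for pair in pairs:
        instruction = pair.get("instruction", "").strip()
        output = pair.get("output", "").strip()
        if not instruction or len(output.split()) < min_output_words:
            continue  # missing instruction or output too short to be useful
        key = normalize(instruction)
        if key in seen:
            continue  # duplicate instruction, keep the first occurrence only
        seen.add(key)
        kept.append({"instruction": instruction, "output": output})
    return kept


raw = [
    {"instruction": "Summarize the memo.", "output": "The memo announces a new travel policy."},
    {"instruction": "summarize  the MEMO.", "output": "It covers travel policy changes."},
    {"instruction": "Explain LoRA.", "output": "ok"},
    {"instruction": "", "output": "Some output text here."},
    {"instruction": "List two primary colors.", "output": "- Red\n- Blue"},
]

clean = filter_pairs(raw)
print(f"kept {len(clean)} of {len(raw)} pairs")
```

A word-count threshold like this would wrongly discard tasks whose correct answer is a single word, so a real filter would need per-task rules or a scoring model.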

These advancements mean you no longer need a PhD in distributed computing to build a custom AI assistant. The learning curve for implementing instruction tuning is now moderate, typically taking 2-3 weeks for engineers familiar with standard ML practices.

Instruction Tuning vs. Multi-Task Fine-Tuning

If you’re deciding how to optimize your model, you need to distinguish between instruction tuning and multi-task fine-tuning. They sound similar, but their outcomes are vastly different.

| Feature | Instruction Tuning | Multi-Task Fine-Tuning |
|---|---|---|
| Primary Goal | Generalization across diverse tasks | Specialization in predefined tasks |
| Input Format | Natural language instructions | Task-specific datasets (e.g., labels, categories) |
| Flexibility | High (handles novel prompts) | Low (fails on unfamiliar instructions) |
| Accuracy Trade-off | 85-90% across 50+ tasks | 95%+ on 5-10 specialized tasks |
| Use Case | Chatbots, assistants, creative tools | Sentiment analysis, NER, classification |

Instruction-tuned models excel at adaptability. They prioritize understanding user intent in open-ended scenarios. Multi-task fine-tuned models, on the other hand, are specialists. They achieve higher accuracy on specific, narrow tasks but lack the flexibility to handle unexpected requests. For most consumer-facing applications, instruction tuning is the superior choice. In 2025, 78% of enterprise LLM deployments incorporated instruction tuning, compared to just 32% using pure multi-task approaches.

Real-World Impact: Benefits and Limitations

Why does this matter for your business or project? The benefits are measurable. Instruction-tuned models show a 35-50% improvement in adhering to formatting requirements. Users report 41% fewer instances of models ignoring explicit constraints like "respond in bullet points." Hallucination rates drop by approximately 28% on average, with factual question-answering seeing improvements up to 45%.
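If you want to track formatting adherence on your own model, a simple automated check is one place to start. The sketch below measures how often responses obey a "respond in bullet points" constraint. The regular expression, function names, and sample responses are all illustrative assumptions, not the methodology behind the figures above.

```python
import re

# Matches lines starting with -, *, or a bullet character, or a number like "1." or "1)".
BULLET = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+\S")


def follows_bullet_constraint(response: str, expected_items=None) -> bool:
    """True if every non-empty line is a bullet, optionally with an exact item count."""
    lines = [line for line in response.splitlines() if line.strip()]
    bullets = [line for line in lines if BULLET.match(line)]
    if not lines or len(bullets) != len(lines):
        return False
    return expected_items is None or len(bullets) == expected_items


def adherence_rate(responses: list, expected_items=None) -> float:
    """Fraction of responses that satisfy the bullet-point constraint."""
    if not responses:
        return 0.0
    passed = sum(follows_bullet_constraint(r, expected_items) for r in responses)
    return passed / len(responses)


# Hypothetical model outputs for the prompt "List three colors as bullet points."
responses = [
    "- Red\n- Green\n- Blue",
    "Red, green, and blue are common colors.",
    "1. Red\n2. Green\n3. Blue",
    "- Red\n- Green",
]

rate = adherence_rate(responses, expected_items=3)
print(f"format adherence: {rate:.0%}")
```

Running the same check on a base model and its tuned counterpart over a fixed prompt set gives a like-for-like comparison, though it only captures one narrow kind of constraint.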

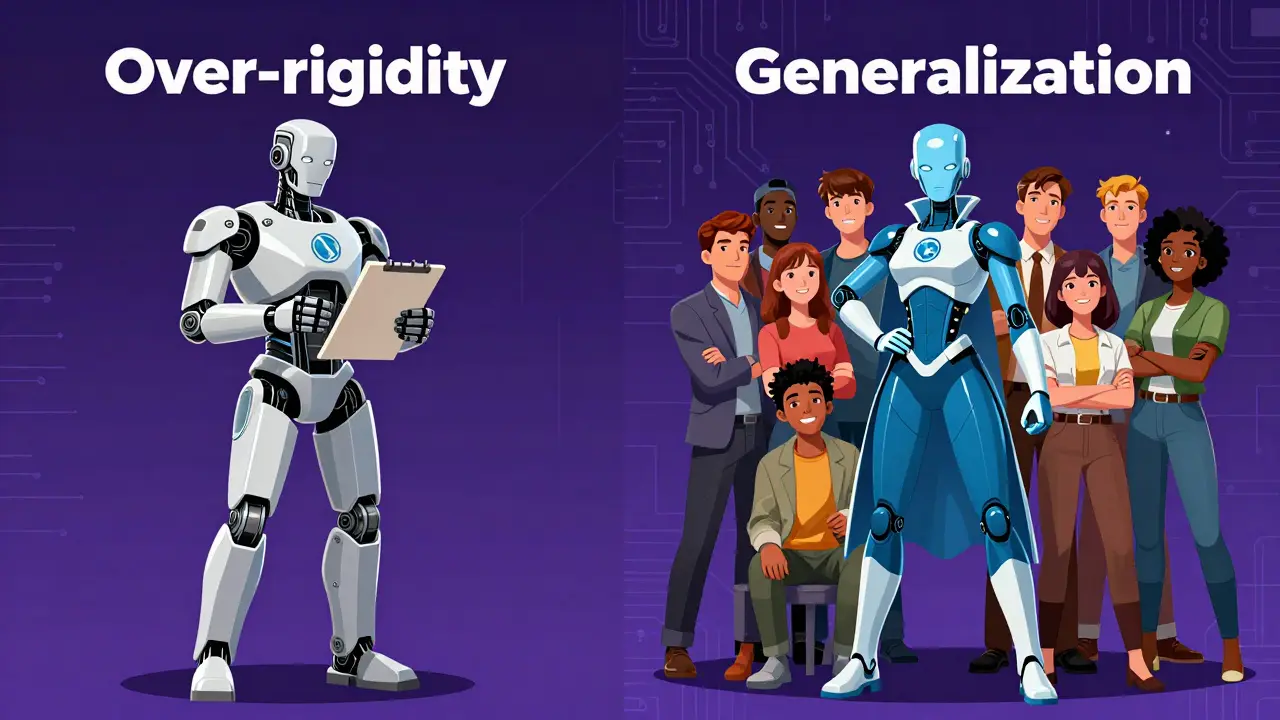

However, there are trade-offs. Professor Michael Collins of MIT notes that instruction tuning can lead to over-generalization. Models may apply instruction-following patterns too rigidly, stifling creativity when deviation is preferable. Additionally, instruction-tuned models typically require 15-25% more inference time than base models due to the cognitive steps involved in interpreting instructions.

User feedback reflects this balance. Enterprise applications see 32% higher satisfaction scores with instruction-tuned models. Yet, 18% of negative feedback centers on "over-rigidity," where models prioritize literal instruction following over helpfulness. For example, if you ask a model to "list the pros and cons" but also say "be concise," an over-tuned model might cut off important details to meet the length constraint, sacrificing utility for compliance.

Implementation Strategy: Getting Started in 2026

If you’re ready to implement instruction tuning, start with data quality. Aim for 5,000-50,000 high-quality instruction-output pairs. Use recent data filtering techniques to ensure your dataset matches real-world usage patterns. Avoid bias by diversifying your instruction types: include summarization, extraction, translation, and creative writing.

Choose your framework wisely. Hugging Face’s Transformers library remains the industry standard, offering robust documentation and community support. For efficient training, integrate LoRA adapters early in your pipeline. Monitor for catastrophic forgetting during evaluation; if the model loses general capabilities, apply SDFT techniques to reinforce pre-trained knowledge.

Finally, plan for iteration. Instruction tuning is not a one-time fix. As user needs evolve, so should your model. Incorporate automated data generation loops to continuously refine your instruction set. By 2027, dynamic instruction tuning, which adapts to individual user preferences in real time, will become standard. Start building the infrastructure for that future today.

What is the minimum dataset size needed for effective instruction tuning?

While traditional approaches suggest 5,000-50,000 examples, recent data filtering techniques show that carefully curated sets of just 1,000-2,000 high-quality instruction-output pairs can outperform larger, noisy datasets. Quality and diversity are more critical than sheer volume.

Can I perform instruction tuning on a single consumer GPU?

Yes, thanks to Low-Rank Adaptation (LoRA). LoRA reduces GPU memory requirements from 80+ GB to just 24-32 GB, making instruction tuning feasible on single high-end consumer GPUs. This allows developers to experiment without expensive cloud infrastructure.

Does instruction tuning reduce hallucinations in LLMs?

Yes. Studies indicate that instruction-tuned models reduce hallucination rates by approximately 28% on average across benchmark tests. Improvements are particularly significant in factual question-answering scenarios, where hallucination reduction can reach up to 45%.

What is the main risk of over-using instruction tuning?

The primary risk is over-rigidity. Models may prioritize following instructions literally at the expense of providing helpful information. This can lead to a loss of creativity or nuance, especially in contexts where flexible interpretation is preferred over strict adherence to constraints.

How does instruction tuning differ from standard fine-tuning?

Standard fine-tuning optimizes a model for specific, predefined tasks using labeled data. Instruction tuning teaches the model to follow natural language instructions across diverse, potentially novel tasks. It prioritizes generalization and user intent alignment over task-specific specialization.