When your company handles sensitive customer data (bank records, medical histories, internal emails, or proprietary code), sending that data to a third-party AI service like ChatGPT isn’t just risky. In many cases it’s against the law. Regulations like GDPR and CCPA don’t just ask you to be careful; they require you to control where personal data goes and how it is processed. That’s where open-source large language models (LLMs) become more than a technical choice: they become a compliance necessity.

Why Data Privacy Demands On-Premise AI

Closed-source LLMs like GPT-4 or Claude run on servers owned by companies you’ve never met. You type in a question, your data travels over the internet, gets processed, and comes back. Sounds simple, until you realize that every query you send could be logged, stored, or even used to train future models, and you have no way to verify what happens inside those black boxes. Open-source LLMs flip that model entirely. You download the model (code, weights, everything) and run it on your own servers. No data leaves your network. That’s the core advantage. If you’re in finance, healthcare, or government, this isn’t optional. It’s the only way to meet legal requirements for data sovereignty and confidentiality.

When Open-Source LLMs Are the Right Choice

You don’t need an open-source LLM for every task. But you absolutely need one when you’re:

- Processing personally identifiable information (PII) like names, addresses, or Social Security numbers

- Analyzing financial reports, internal audits, or client contracts

- Working with intellectual property: patents, product designs, source code

- Handling data governed by strict regulations like GLBA, HIPAA, or GDPR

- Building internal tools where employees need to ask questions without exposing sensitive inputs

Performance Trade-Offs? Yes. But They’re Shrinking Fast

Let’s be honest: open-source models aren’t always as capable as the latest GPT-4. In complex reasoning tasks, like interpreting legal jargon or solving multi-step financial problems, they can lag by 15 to 20%. That’s a real gap. But here’s what most people miss: it is closing fast. In 2023, Llama2 scored 82% accuracy on COBOL code analysis when measured against human experts. By late 2024, Llama3 hit 89%, not far off GPT-4’s 93%. And the trend isn’t slowing. Each new release (Mistral 7B, Qwen, Phi-3) narrowed the performance gap by another 5 to 7% quarter over quarter. For most internal business tasks (drafting emails, summarizing reports, tagging documents, answering HR questions), the performance difference doesn’t matter. What matters is that your data stays put.

Key Open-Source Models for Privacy-Centric Use Cases

Not all open-source LLMs are built the same. Here are the top three used by enterprises focused on data privacy in 2026:

- Llama3 (Meta, released April 2024): Improved filtering reduces PII leakage by 37% compared to Llama2. Ideal for internal documentation and compliance tasks.

- Mistral 7B (Mistral AI, released September 2023): Lightweight, runs on a single GPU with 16GB VRAM. Perfect for small teams or edge deployments.

- Qwen (Alibaba, 2024 update): Strong multilingual support and built-in data masking. Popular in global enterprises with mixed-language compliance needs.

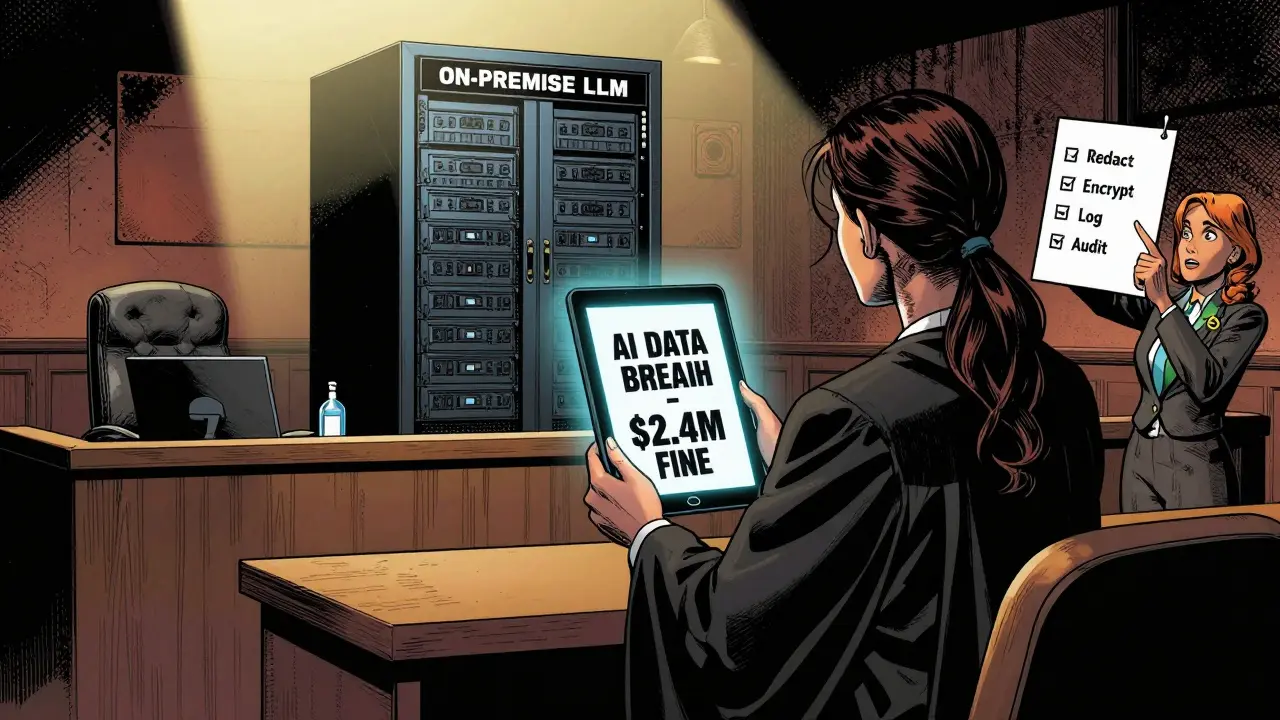

How to Deploy Securely: 4 Critical Steps

Deploying an open-source LLM isn’t just downloading a file. It’s a security project. Here’s what works:

- Redact before input: Use tools like the Hugging Face Privacy API or custom token filters to strip PII from every prompt. 31% of early deployments failed because they skipped this step.

- Run in a secure environment: Use confidential computing (Intel SGX, AMD SEV) to keep data encrypted even while the model processes it. This is standard in U.S. federal agencies.

- Monitor outputs: AI models can hallucinate or repeat sensitive data they’ve seen. Set up anomaly detection to flag responses that echo internal documents or credentials.

- Log everything: GDPR requires traceability. Every prompt, every response, every model version-log it. 73% of enterprises now add AI logs to their compliance audit trails.
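To make the first step concrete, here is a minimal rule-based redactor in Python. It is a sketch under stated assumptions, not a production filter: the three regular expressions (U.S.-style SSNs, email addresses, and phone numbers) and the `redact` function are hypothetical names chosen for this example, and pattern matching alone misses free-text PII such as personal names.

```python
import re

# Hypothetical patterns for a few common U.S. PII formats. Real deployments
# need far broader coverage (names, addresses, account numbers) and usually
# combine rules like these with a trained entity recognizer.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each PII match with a typed placeholder such as [SSN].

    Returns the redacted prompt and the number of replacements per type,
    which can be written to the audit log alongside the prompt.
    """
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        # subn returns (new_string, number_of_substitutions)
        prompt, n = pattern.subn(f"[{label}]", prompt)
        counts[label] = n
    return prompt, counts


if __name__ == "__main__":
    text = "Customer Jane (jane.doe@example.com, SSN 123-45-6789) called 555-867-5309."
    clean, stats = redact(text)
    print(clean)
    print(stats)
```

Running it on the sample text yields `Customer Jane ([EMAIL], SSN [SSN]) called [PHONE].` Typed placeholders, rather than deleting matches outright, keep the prompt readable for the model. Note that the name "Jane" survives, which is exactly the gap a named-entity model is meant to close.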
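For the output-monitoring step, one simple way to flag responses that echo internal documents is to compare overlapping word sequences (n-grams, or "shingles") between the model's output and a set of known-sensitive texts, alongside a few credential signatures. The sketch below assumes you can supply those texts as plain strings; `flag_response`, the 8-word window, and the 20% threshold are illustrative choices rather than tuned values, and the credential patterns cover only an AWS-style access key ID and a PEM private-key header.

```python
import re

# Illustrative credential signatures; extend with your own secret formats.
CREDENTIAL_PATTERNS = [
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),                # AWS-style access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


def shingles(text: str, n: int) -> set[tuple[str, ...]]:
    """Return every run of n consecutive lowercased words in text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def flag_response(response: str, sensitive_docs: list[str],
                  n: int = 8, threshold: float = 0.2) -> list[str]:
    """Return reasons to hold a response for review; an empty list means pass."""
    reasons = []
    if any(p.search(response) for p in CREDENTIAL_PATTERNS):
        reasons.append("credential pattern")
    resp = shingles(response, n)
    if resp:  # responses shorter than n words have no shingles to compare
        for i, doc in enumerate(sensitive_docs):
            # Fraction of the response's n-grams that also appear in the doc.
            overlap = len(resp & shingles(doc, n)) / len(resp)
            if overlap >= threshold:
                reasons.append(f"echoes sensitive doc {i} ({overlap:.0%} overlap)")
    return reasons


if __name__ == "__main__":
    docs = ["The merger with Acme Corp will be announced on March third to all staff"]
    print(flag_response("Sure: the merger with Acme Corp will be announced on March third.", docs))
    print(flag_response("The weather is nice today.", docs))
```

Anything other than an empty list can be routed to a human reviewer before the response reaches the user. Comparing against every document on every response scales linearly with the corpus; a larger deployment would precompute and index the shingles.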
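For the logging step, a minimal pattern is an append-only JSON Lines file in which each record carries the SHA-256 hash of the previous line, so a deleted or edited entry breaks the chain. This is a sketch assuming a single writer and a local file; `append_audit_record`, `verify_chain`, and the field names are hypothetical, and the example logs the redacted prompt rather than the raw input so the log itself does not become a new store of PII.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path

GENESIS = "0" * 64  # prev_hash value for the first record in an empty log


def append_audit_record(log_path: Path, prompt: str, response: str,
                        model_version: str) -> dict:
    """Append one JSON line per interaction, chained to the previous line."""
    prev_hash = GENESIS
    if log_path.exists():
        lines = log_path.read_text(encoding="utf-8").splitlines()
        if lines:
            prev_hash = hashlib.sha256(lines[-1].encode("utf-8")).hexdigest()
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt": prompt,  # pass the redacted prompt here, not the raw input
        "response": response,
        "prev_hash": prev_hash,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def verify_chain(log_path: Path) -> bool:
    """Return True if every record's prev_hash matches the line before it."""
    prev_hash = GENESIS
    for line in log_path.read_text(encoding="utf-8").splitlines():
        if json.loads(line)["prev_hash"] != prev_hash:
            return False
        prev_hash = hashlib.sha256(line.encode("utf-8")).hexdigest()
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        log = Path(tmp) / "llm_audit.jsonl"
        append_audit_record(log, "Summarize ticket for [EMAIL]", "Summary: ...", "mistral-7b-instruct")
        append_audit_record(log, "Draft a reply", "Dear customer, ...", "mistral-7b-instruct")
        print(verify_chain(log))
```

Re-reading the whole file on every append keeps the example short; a real service would cache the last hash and ship records to write-once storage.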

When Not to Use Open-Source LLMs

There are times when a closed-source model still makes sense:

- Public-facing chatbots where users aren’t sharing sensitive data

- Marketing content generation (blog posts, social media captions)

- Research tasks where speed and creativity matter more than control

- Teams without the budget or staff to manage infrastructure

Regulations Are Pushing Adoption

It’s not just about security. It’s about compliance. In 2023, 42% of U.S. financial institutions started evaluating open-source LLMs specifically because of GDPR and CCPA requirements. By Q3 2023, 28% had moved into production. That’s not a passing trend; it’s a structural shift. Governments are leading the charge. The U.S. Digital Government Hub reported a 65% increase in open-source LLM adoption across federal agencies between January and September 2023. Why? Because they can’t outsource citizen data. Period. Even the EU’s AI Act, whose main obligations apply from August 2026, will require transparency about training data and data retention policies. Open-source models give you that. Closed-source models? You’re trusting someone else’s audit report.

What You Need to Get Started

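You don’t need a team of AI PhDs. But you do need:

- A server with at least 16GB of RAM for Mistral 7B, or about 80GB of VRAM for Llama3-70B (with 8-bit or lower quantization; at 16-bit precision the weights alone take roughly 140GB)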

- Basic Linux and Docker skills

- Roughly 2 to 3 weeks to deploy smaller models, and 8 to 12 weeks for larger ones

- A data privacy officer or compliance lead to sign off
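The memory figures above follow from a simple rule of thumb: the weights alone need roughly (number of parameters) times (bits per parameter) divided by 8 bytes. The helper below is a hypothetical function written for illustration; it uses nominal parameter counts of 7 billion and 70 billion and ignores the KV cache, activations, and runtime overhead, so treat its output as a floor rather than a sizing guarantee.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed just to hold the model weights, in gigabytes (10**9 bytes).

    This is a lower bound: the KV cache, activations, and framework overhead
    come on top and grow with batch size and context length.
    """
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # Nominal sizes; exact parameter counts differ slightly per release.
    for name, params in [("7B model", 7e9), ("70B model", 70e9)]:
        for bits in (16, 8, 4):
            print(f"{name} @ {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
```

At 16-bit precision a 7B model needs about 14 GB just for weights and a 70B model about 140 GB, which is why fitting Llama3-70B into 80GB of VRAM implies 8-bit (about 70 GB) or 4-bit (about 35 GB) quantization.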

The Future Is On-Premise

Gartner predicts open-source and closed-source LLMs will be functionally equal for standard business tasks by late 2025. That’s not a guess. It’s based on the pace of improvement. Every month, new open-source models get faster, smarter, and more secure. The real question isn’t whether open-source LLMs are good enough. It’s whether you can afford not to use them. If your data matters, if your customers trust you with it, then running AI on someone else’s server isn’t convenience. It’s negligence.

Are open-source LLMs really more private than ChatGPT?

Yes, because you control where the model runs. With ChatGPT, your data goes to OpenAI’s servers and may be used to improve their models. With open-source LLMs like Llama3 or Mistral, you run everything inside your own network. No data leaves your firewall. That’s the only way to guarantee privacy under GDPR, CCPA, or HIPAA.
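One way to back up "no data leaves your firewall" in your own client code is a guard that refuses to send prompts to any endpoint outside private or loopback address space. The sketch below is an illustration under assumptions, not a complete control: `is_internal_endpoint` is a hypothetical helper, it only checks where the configured URL points, and a private address does not by itself prove the server is one you operate, nor does it block other outbound traffic.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_internal_endpoint(url: str) -> bool:
    """Return True only if every address the URL's host maps to is private or loopback.

    Literal IPs are checked directly; hostnames are resolved with the system
    resolver, and a name that fails to resolve is treated as not internal.
    """
    host = urlsplit(url).hostname
    if host is None:
        return False
    try:
        addresses = [ipaddress.ip_address(host)]
    except ValueError:  # not a literal IP, so resolve the hostname
        try:
            infos = socket.getaddrinfo(host, None)
        except socket.gaierror:
            return False
        addresses = [ipaddress.ip_address(info[4][0]) for info in infos]
    return bool(addresses) and all(a.is_private or a.is_loopback for a in addresses)


if __name__ == "__main__":
    llm_url = "http://10.20.0.5:8000/v1/completions"  # hypothetical internal server
    if not is_internal_endpoint(llm_url):
        raise RuntimeError("Refusing to send prompts outside the internal network")
    print("endpoint is internal")
```

Failing closed (unresolvable names count as external) is the deliberate design choice here: a misconfigured URL should stop requests, not silently route them elsewhere.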

Can I run an open-source LLM on my laptop?

You can run smaller models like Mistral 7B on a laptop with 16GB of RAM and a decent GPU. But anything larger, like Llama3-70B, needs enterprise hardware with 80GB+ of VRAM. Most businesses run these models on dedicated GPU servers, either in their own data centers or in private cloud environments they control, so the data still never reaches a third-party AI provider.

Do I need AI experts to deploy these models?

You don’t need PhDs, but you do need someone who understands Linux, Docker, and basic ML deployment. Many teams use Hugging Face’s tools and pre-built containers to simplify setup. The bigger challenge is often compliance: making sure your data filtering and logging meet legal standards.

What’s the biggest mistake companies make with open-source LLMs?

Skipping data redaction. Many teams assume the model will simply ignore sensitive information, but it won’t. If you feed it a customer’s Social Security number, that number can end up in prompt logs, conversation history, or future fine-tuning data, and the model can repeat it in its outputs. Always use automated filters to strip PII before input. That’s step one in every successful deployment.

Is open-source LLM adoption growing?

Yes, rapidly. In 2023, 42% of financial institutions in the U.S. were evaluating open-source LLMs for data privacy. By late 2024, nearly a third had moved to production. Government agencies saw a 65% increase in adoption in just nine months. The trend is clear: control over data is becoming non-negotiable.

Ian Maggs

January 22, 2026 AT 02:33

It’s fascinating, isn’t it? The notion that we’ve outsourced not just computation, but cognitive sovereignty; that we’ve traded the illusion of convenience for the illusion of safety… and now, with open-source models, we’re being forced (by law, by ethics, by sheer necessity) to reclaim agency over our own thoughts, our own data, our own digital souls…

Jen Becker

January 23, 2026 AT 07:45

Yeah but who’s gonna actually do the work?

John Fox

January 23, 2026 AT 22:42

My team tried Llama3 last month. Redacted everything. Logged everything. Still got flagged by compliance. Just saying.

Chuck Doland

January 25, 2026 AT 19:42

While the technical merits of on-premise deployment are indisputable, the institutional inertia surrounding legacy cloud-based AI systems remains formidable. Organizations that have invested heavily in vendor lock-in (both financially and psychologically) often exhibit profound resistance to paradigmatic shifts, even when those shifts are legally mandated. The transition to open-source LLMs necessitates not merely infrastructure reconfiguration, but epistemological realignment: from trust in opaque corporate entities to trust in auditable, transparent, and locally governed systems. This is not a software upgrade; it is a cultural evolution.

Tony Smith

January 26, 2026 AT 14:47

Let’s be real: Jen’s comment is the only honest one here. Nobody wants to do the work. But here’s the thing: if you’re handling HIPAA or GDPR data and you’re still using ChatGPT? You’re not lazy. You’re legally negligent. And if your compliance officer finds out? You won’t be fired. You’ll be sued. So yes, it’s a pain. Yes, it takes time. But compared to a $20M fine? This is the easy part. Start small. Summarize meeting notes. Prove it works. Then scale. And for the love of all that’s holy, use the Hugging Face Privacy API. Don’t be the guy who says ‘I didn’t know’.