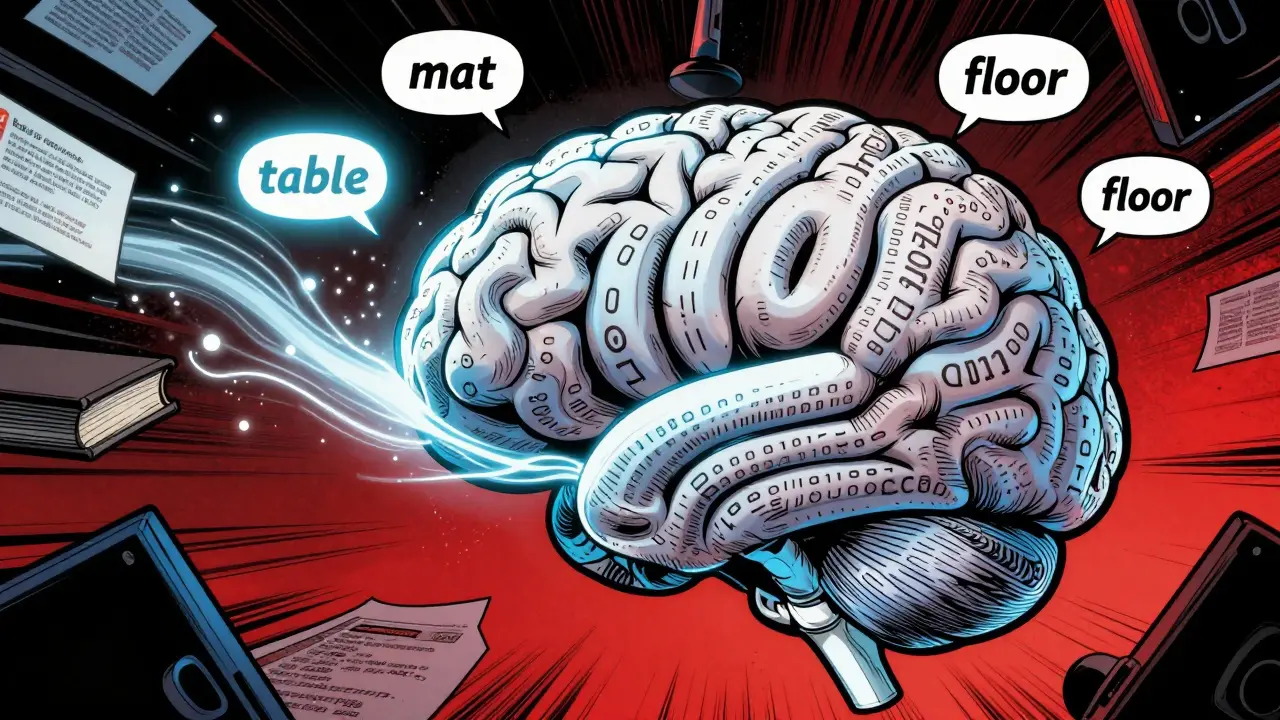

Chain-of-Thought Prompting for Better Reasoning in Large Language Models

Chain-of-thought prompting improves the reasoning of large language models by prompting them to write out intermediate steps before giving a final answer. It can raise accuracy on math, logic, and other multi-step tasks, and it requires no retraining or fine-tuning of the model. Learn how it works, where it shines, and where it fails.
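To make "explain their steps" concrete, here is a minimal sketch of how a chain-of-thought prompt differs from a direct one. It only builds prompt strings and parses a reply; it does not call any model. The `Q:`/`A:` layout, the "The answer is N." convention, the exemplar, and every function name below are illustrative assumptions, not a fixed standard or any particular library's API.

```python
import re

# Illustrative worked exemplar (an assumption for this sketch): the answer is
# preceded by the intermediate reasoning steps the model should imitate.
EXEMPLARS = [
    (
        "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
        "Each can has 3 tennis balls. How many tennis balls does he have now?",
        "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
        "5 + 6 = 11. The answer is 11.",
    ),
]


def build_standard_prompt(question: str) -> str:
    """Direct prompt: asks for the answer with no reasoning demonstrated."""
    return f"Q: {question}\nA:"


def build_cot_prompt(question: str, exemplars=EXEMPLARS) -> str:
    """Few-shot chain-of-thought prompt: each exemplar shows its reasoning first."""
    parts = [f"Q: {q}\nA: {a}" for q, a in exemplars]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


def build_zero_shot_cot_prompt(question: str) -> str:
    """Zero-shot variant: a trigger phrase asks the model to reason step by step."""
    return f"Q: {question}\nA: Let's think step by step."


def extract_answer(completion: str):
    """Return the last number following 'The answer is', or None if absent.

    Assumes the model copied the exemplar's 'The answer is N.' convention.
    """
    matches = re.findall(r"The answer is\s*(-?\d+(?:\.\d+)?)", completion)
    return matches[-1] if matches else None


if __name__ == "__main__":
    question = (
        "A cafeteria had 23 apples. It used 20 and bought 6 more. "
        "How many apples are left?"
    )
    print(build_cot_prompt(question))
    # A hypothetical model reply that follows the exemplar's format:
    print(extract_answer("It had 23 - 20 = 3, then 3 + 6 = 9. The answer is 9."))  # → 9
```

The few-shot version teaches the format by example, while the zero-shot version relies on a single instruction; in both cases the final answer is read from the end of the generated reasoning rather than from the first token the model produces.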