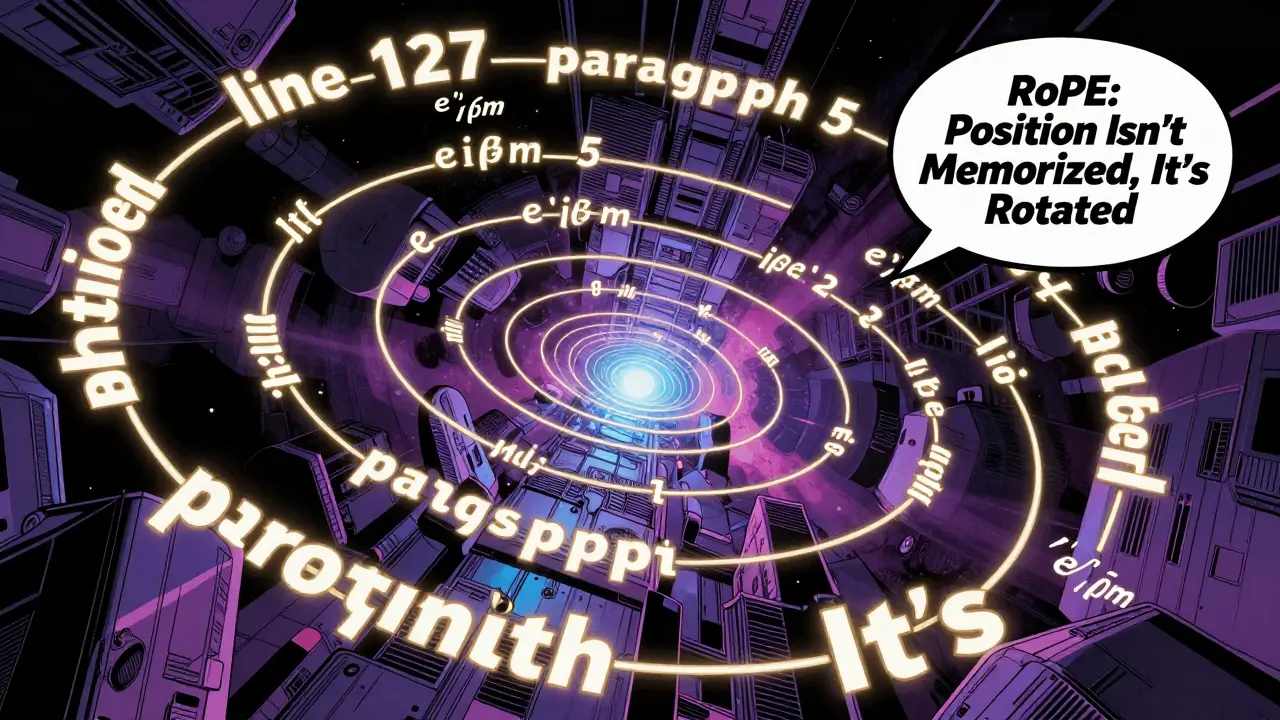

What Makes a Language Model 'Large': Beyond Parameter Counts and Into Capabilities

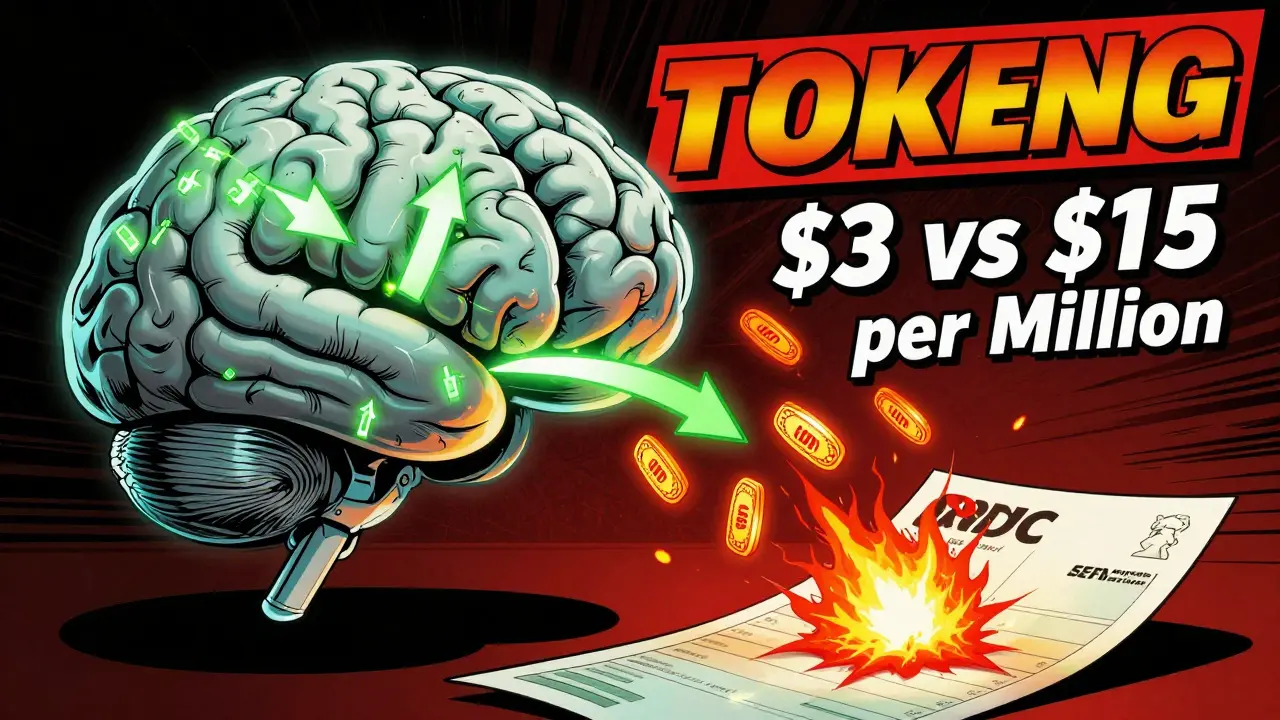

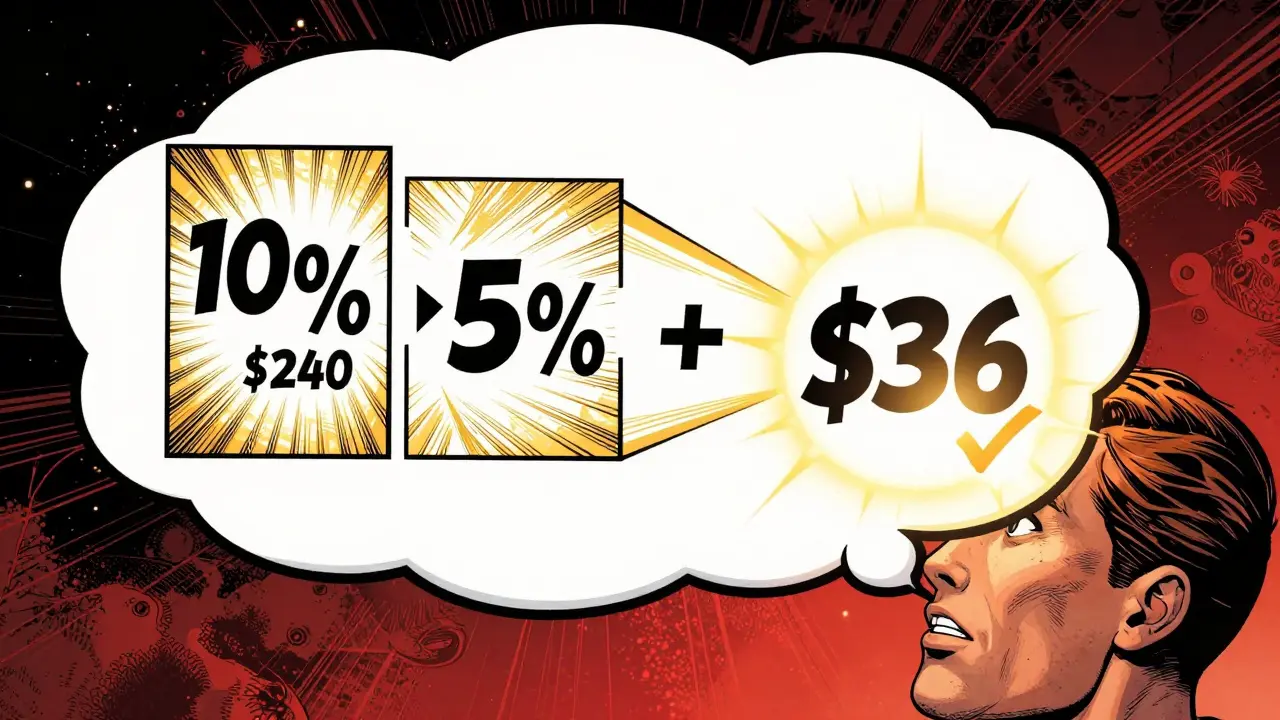

Explore why parameter count is no longer the gold standard for judging AI models. Learn about Virtual Logical Depth, emergent capabilities, and the real cost of scaling large language models.