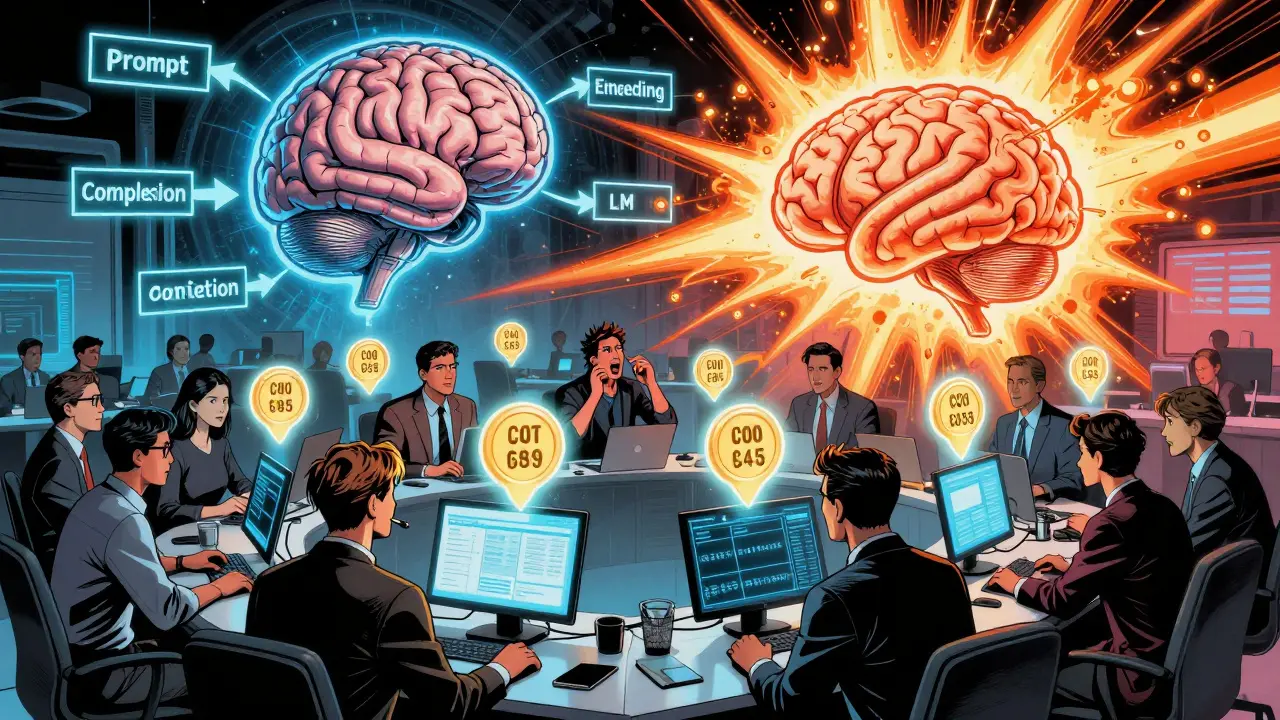

Understanding Positional Encodings in Transformer-Based Large Language Models

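Positional encoding is the technique that gives transformer-based LLMs access to word order. A plain self-attention layer is order-agnostic: it compares every token with every other token but never looks at where each token sits, so shuffling the input only shuffles the output. Without positional information, a model sees 'The cat chased the dog' and 'The dog chased the cat' as the same bag of words and cannot tell them apart.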
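To make the order-blindness concrete, here is a minimal sketch in plain Python. It assumes a single head of unmasked self-attention with identity query/key/value projections and random toy embeddings. Real models use learned projections, but the argument does not depend on them. All function names (`make_embeddings`, `self_attention`, `encode`, `vectors_close`) are hypothetical helpers for illustration. For the positional signal it uses the sinusoidal scheme from the original Transformer paper. That is one choice among several, not necessarily the one any particular LLM uses. The script checks whether the attention output for "cat" is the same in both sentences, with and without positions added.

```python
import math
import random


def make_embeddings(vocab, dim, seed=0):
    """Hypothetical toy embeddings: one fixed random vector per word."""
    rng = random.Random(seed)
    return {w: [rng.uniform(-1, 1) for _ in range(dim)] for w in vocab}


def sinusoidal_encoding(pos, dim):
    """Sinusoidal encoding: sin on even indices, cos on odd, geometric frequencies."""
    pe = []
    for i in range(dim):
        angle = pos / (10000 ** (2 * (i // 2) / dim))
        pe.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return pe


def self_attention(xs):
    """Single-head, unmasked attention with identity Q/K/V (a simplifying assumption)."""
    dim = len(xs[0])
    outputs = []
    for q in xs:
        # Scaled dot-product scores of this query against every key.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in xs]
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]  # numerically stable softmax
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted sum of value vectors (values are the inputs themselves here).
        outputs.append([sum(w * v[j] for w, v in zip(weights, xs)) for j in range(dim)])
    return outputs


def encode(sentence, emb, use_positions):
    """Embed each token, optionally add its positional encoding, then attend."""
    tokens = sentence.lower().split()
    dim = len(next(iter(emb.values())))
    xs = []
    for pos, tok in enumerate(tokens):
        x = list(emb[tok])
        if use_positions:
            x = [a + b for a, b in zip(x, sinusoidal_encoding(pos, dim))]
        xs.append(x)
    return tokens, self_attention(xs)


def vectors_close(u, v, tol=1e-9):
    """Compare with a tolerance, since summation order changes float rounding."""
    return all(math.isclose(a, b, abs_tol=tol) for a, b in zip(u, v))


emb = make_embeddings(["cat", "chased", "dog", "the"], dim=8)
s1, s2 = "The cat chased the dog", "The dog chased the cat"

for use_positions in (False, True):
    t1, out1 = encode(s1, emb, use_positions)
    t2, out2 = encode(s2, emb, use_positions)
    same = vectors_close(out1[t1.index("cat")], out2[t2.index("cat")])
    print(f"positions={use_positions}: 'cat' output identical in both sentences? {same}")
# positions=False: ... True   (same query, same set of keys/values, just reordered)
# positions=True:  ... False  ("cat" sits at position 1 vs. 4, so its inputs differ)
```

Without positions, "cat" attends over the same multiset of words in both sentences, so its output is identical up to floating-point rounding. Adding a position-dependent vector to each embedding is enough to break that symmetry.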